BERT vs GPT: How Encoder-Only and Decoder-Only Models Shape Modern NLP

The way machines understand and generate language changed forever when BERT and GPT arrived. They didn’t just improve on older models; they rewrote the rules. At first glance, they look similar: both are built on the Transformer, both were trained on massive text datasets, both exploded in popularity. But beneath the surface, they’re built on opposite philosophies. One reads the whole sentence at once, using context on both sides of every word. The other reads strictly left to right, one token at a time. And that one difference shapes what each model is good at.

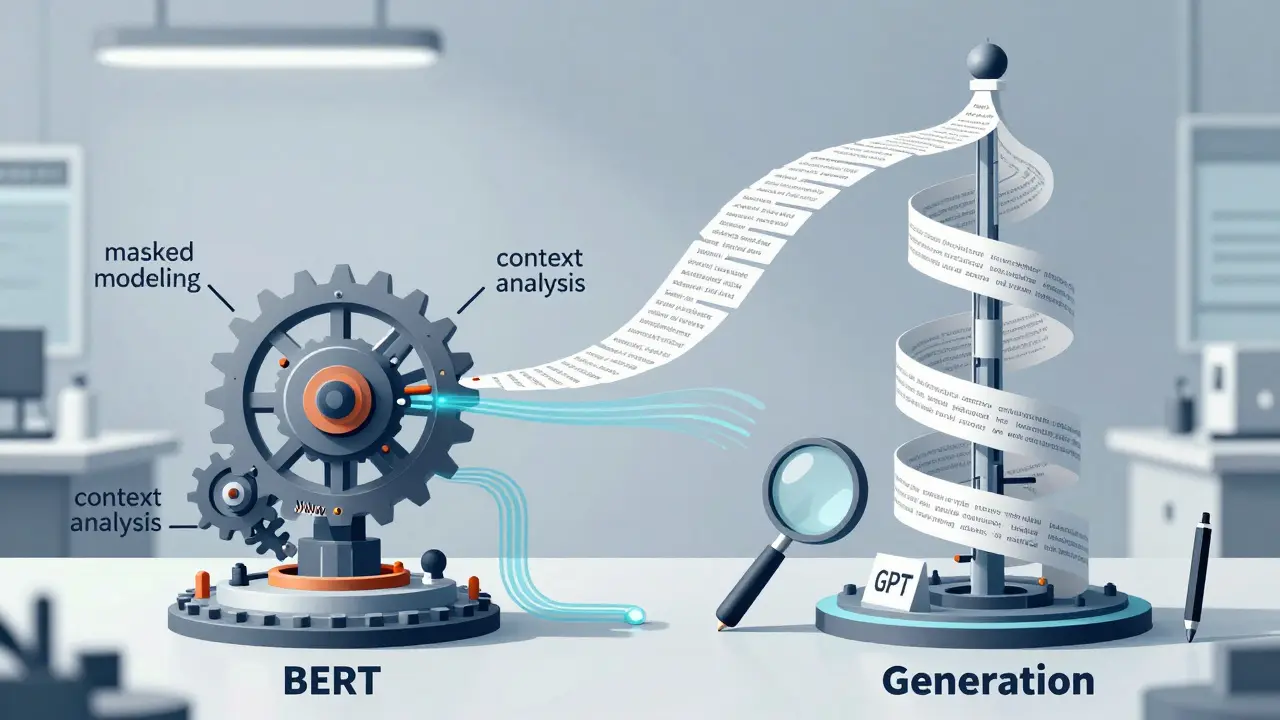

How BERT Reads Language Backward and Forward

BERT is a bidirectional encoder-only transformer. That means when it sees a word, it doesn’t just look at what came before it. It looks at what comes after, too.

Imagine you’re reading this sentence: "I went to the bank to deposit money." If you only saw "bank" and the words before it, you might think it’s a riverbank. But BERT sees the whole sentence. It knows "deposit money" comes after, so it reads "bank" as a financial institution. That’s because BERT is trained with masked language modeling. During training, it randomly hides about 15% of the input tokens (such as "bank") and has to predict them using context from both sides. This trains it to model relationships between words, not just predict what comes next.
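To make the masking step concrete, here is a minimal sketch in plain Python. It is a simplification, not BERT's actual preprocessing: the function name `mask_tokens`, the whitespace tokenization, and the fixed seed are assumptions for illustration, and real BERT replaces only most of the selected tokens with `[MASK]` (some are swapped for random tokens or left unchanged).

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_rate=0.15, seed=0):
    """Hide roughly mask_rate of the tokens; return (masked tokens, labels)."""
    rng = random.Random(seed)
    n_to_mask = max(1, round(len(tokens) * mask_rate))
    positions = set(rng.sample(range(len(tokens)), n_to_mask))
    masked = [MASK if i in positions else tok for i, tok in enumerate(tokens)]
    # Labels keep the original token at masked positions and None elsewhere,
    # so a training loss would only be computed where the model had to guess.
    labels = [tok if i in positions else None for i, tok in enumerate(tokens)]
    return masked, labels

tokens = "i went to the bank to deposit money".split()
masked, labels = mask_tokens(tokens)
print(masked)
print(labels)
```

The model's job is to fill in each hidden position using every other token in the sentence, left and right.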

That’s why BERT excels at tasks where understanding matters more than generating. On the GLUE benchmark, which tests how well models understand language, the original BERT paper reported a score of 80.5 for BERT-large. On SQuAD 2.0, a question-answering test where some questions have no answer in the passage, it reported 83.1 F1. Google put BERT to work in search. By late 2019, it was helping interpret 1 in 10 English-language searches in the U.S. Conversational queries like "can you get a flu shot after a cold?" became easier to handle. BERT didn’t just match keywords. It picked up on the nuance.

It’s also leaner. BERT-base can run in roughly 4GB of GPU memory, and you can fine-tune it on a single NVIDIA V100 in a few hours. Developers on GitHub report accuracy around 92% on medical text classification after minimal training, though results like that depend heavily on the dataset. Hugging Face’s BERT tutorials have been viewed over 1.2 million times. It’s accessible, reliable, and built for understanding, not generating.

How GPT Reads Only Forward and Builds From Scratch

GPT is a decoder-only, autoregressive transformer. It doesn’t look ahead. At each step it sees only the text written so far, never what comes after, and asks: "What’s the most likely next token?" Then it builds the sentence one token at a time.
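The word-by-word loop can be sketched with a toy lookup table standing in for the model. Everything here is hypothetical: the `NEXT_WORD` probabilities are made up, and this table conditions only on the last word, whereas GPT conditions on the entire text so far. The loop itself (pick the most likely next token, append it, repeat) is the greedy form of autoregressive decoding.

```python
# Made-up next-word probabilities, for illustration only.
NEXT_WORD = {
    "<s>": {"i": 0.9, "the": 0.1},
    "i": {"think": 0.7, "went": 0.3},
    "think": {"the": 0.8, "i": 0.2},
    "the": {"weather": 0.6, "bank": 0.4},
    "weather": {"</s>": 1.0},
    "bank": {"</s>": 1.0},
}

def generate(max_tokens=10):
    """Greedy decoding: always append the single most likely next token."""
    tokens = ["<s>"]
    for _ in range(max_tokens):
        candidates = NEXT_WORD[tokens[-1]]
        next_token = max(candidates, key=candidates.get)
        if next_token == "</s>":  # end-of-sequence marker
            break
        tokens.append(next_token)
    return tokens[1:]  # drop the start marker

print(generate())  # ['i', 'think', 'the', 'weather']
```

Real systems usually sample from the distribution instead of always taking the top choice, which is where much of the variety in generated text comes from.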

This is how humans naturally write. You don’t plan an entire paragraph before typing. You start with "I think," then "the weather," then "is going to be," and so on. GPT mimics that. Its training uses causal language modeling. It learns to predict the next token based only on what came before. No peeking ahead. No second chances.
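One way to picture the difference is as an attention mask: a grid saying which positions each token is allowed to look at. The sketch below builds both kinds in plain Python. The function names are assumptions for illustration; real implementations use tensors, but the pattern of ones and zeros is the same.

```python
def bidirectional_mask(n):
    """Encoder style: every position may attend to every position."""
    return [[1] * n for _ in range(n)]

def causal_mask(n):
    """Decoder style: position i may attend only to positions 0..i."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

print("bidirectional:")
for row in bidirectional_mask(4):
    print(row)

print("causal:")
for row in causal_mask(4):
    print(row)
```

In the causal grid, the zeros above the diagonal are the "future" that a GPT-style model never gets to see during training.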

That’s why GPT is so strong at generating text. GPT-3 has 175 billion parameters, and models like it are commonly evaluated with perplexity, a measure of how well a model predicts the next token, where lower is better. On LAMBADA, a test that asks the model to predict the last word of a passage from long-range context, the GPT-3 paper reported 76.2% zero-shot accuracy. BERT isn’t designed for that kind of left-to-right prediction. It’s also why so many commercial chatbots run on GPT variants. They sound human. They keep conversations flowing. They write emails, code, stories, and marketing copy.
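Perplexity is easier to reason about with a small worked example. It is the exponential of the average negative log-probability the model assigned to the tokens that actually appeared, so lower is better. The probabilities below are made up for illustration, not taken from any real model.

```python
import math

def perplexity(token_probs):
    """exp(mean negative log-probability) over the observed tokens."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# Probabilities a hypothetical model gave to each correct next token.
confident = [0.5, 0.5, 0.5, 0.5]
unsure = [0.1, 0.1, 0.1, 0.1]

print(perplexity(confident))  # about 2
print(perplexity(unsure))     # about 10
```

A perplexity of 2 means the model was, on average, as uncertain as if it were choosing between two equally likely tokens.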

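But there’s a cost. At 175 billion parameters, GPT-3’s weights alone run to hundreds of gigabytes in 16-bit precision, more than four 80GB NVIDIA A100s can hold, and fine-tuning needs several times that for gradients and optimizer state. A single training run can cost thousands of dollars. It’s not just big. It’s expensive. And because it can’t see the future, it has to commit early. Given only "He went to the bank," GPT must settle on a reading of "bank" before it knows whether the sentence continues with "to deposit money" or "to go fishing." BERT, reading the whole sentence at once, gets that context for free. GPT only guesses based on what’s already there.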
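A back-of-the-envelope estimate shows where the hardware bill comes from. The sketch below counts memory for the weights only. The BERT-base parameter count (roughly 110 million) and the bytes-per-parameter choices are assumptions not stated in this article, and real training needs considerably more for gradients, optimizer state, and activations.

```python
def weight_memory_gb(n_params, bytes_per_param):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9

BERT_BASE_PARAMS = 110_000_000   # assumption: roughly 110M parameters
GPT3_PARAMS = 175_000_000_000    # 175B parameters

bert_gb = weight_memory_gb(BERT_BASE_PARAMS, 4)  # 32-bit floats
gpt3_gb = weight_memory_gb(GPT3_PARAMS, 2)       # 16-bit floats

print(f"BERT-base weights: {bert_gb:.2f} GB")
print(f"GPT-3 weights:     {gpt3_gb:.0f} GB")
```

Even at half the precision, the larger model's weights are hundreds of times bigger, which is why it can't live on a single GPU.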

When to Use BERT vs GPT

There’s no winner here. There’s only the right tool for the job.

If you’re building a system that needs to:

- Understand legal documents

- Extract names, dates, or entities from customer support tickets

- Classify sentiment in product reviews

- Improve search engine results

In those cases, BERT is your pick. It’s precise. It’s efficient. It’s reportedly what 78% of Fortune 500 companies use for internal analytics. Google uses it in Search to interpret queries. Amazon uses it to tag product categories from customer reviews.

If you’re building a system that needs to:

- Write blog posts or ad copy

- Run a 24/7 customer chatbot

- Generate code from natural language prompts

- Create personalized content at scale

In those cases, GPT is your pick. It’s fluent. It’s creative. It’s reportedly what powers 92% of AI chatbots today. OpenAI’s API handles over 10 billion requests per month. Companies like Duolingo and Shopify use it to generate dynamic user interactions.

The benchmarks back this up. On MRPC, a paraphrase-detection task, the original BERT paper reported 89.3 F1 for BERT-large against 82.3 for the first GPT model. But on text generation benchmarks, GPT-3 leads. BERT isn’t built to produce a coherent paragraph at all.

Real-World Trade-Offs: Power vs Practicality

Here’s the messy truth: BERT is easier to deploy. GPT is harder to afford.

Small businesses can run BERT-base inference on a general-purpose cloud instance such as an AWS t3.xlarge, with no GPU, for a modest monthly bill. It processes 500 customer reviews an hour. No team of AI engineers needed. Hugging Face’s documentation is clear. Code examples are everywhere. You can learn to fine-tune it in two weeks.

GPT-3? Not so much. You need cloud credits, specialized hardware, and someone who knows how to manage distributed inference. Even GPT-4, released in March 2023, still requires massive compute. Companies that use it often rely on OpenAI’s API and pay per token. That’s fine for startups. But for enterprises processing millions of requests? Costs add up fast.
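Per-token pricing is simple arithmetic, which makes the scaling easy to see. In the sketch below, every number is a placeholder: the request volumes, tokens per request, and the price per 1,000 tokens are hypothetical and do not reflect any provider's actual rates.

```python
def monthly_cost_usd(requests_per_month, tokens_per_request, usd_per_1k_tokens):
    """Total monthly spend when billing is per 1,000 tokens."""
    total_tokens = requests_per_month * tokens_per_request
    return total_tokens / 1000 * usd_per_1k_tokens

# Hypothetical workloads at a hypothetical price of $0.01 per 1,000 tokens.
startup = monthly_cost_usd(10_000, 500, 0.01)
enterprise = monthly_cost_usd(5_000_000, 500, 0.01)

print(f"startup:    ${startup:,.2f}")
print(f"enterprise: ${enterprise:,.2f}")
```

The cost grows linearly with volume, so a bill that is negligible at ten thousand requests becomes a real budget line at five million.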

And then there’s trust. Users love GPT’s fluency. But they hate its hallucinations. Trustpilot reviews for GPT-powered tools average 4.1/5, but complaints about "factual errors in medical advice" or "wrong legal terms" are common. BERT-based tools? 4.3/5. People trust them because they’re not inventing answers. They’re analyzing what’s there.

The Future Isn’t BERT or GPT: It’s Both

The most exciting development in NLP isn’t a new version of BERT or GPT. It’s hybrids.

Models like BART and T5 pair an encoder with a decoder. They read the input bidirectionally like BERT, then generate output token by token like GPT. That makes them a natural fit for tasks that require both understanding and generation. Facebook’s BART, for example, posted state-of-the-art results on summarization and abstractive question answering when it was released.

Industry analysts predict that by 2027, 75% of large organizations will use hybrid pipelines. BERT for understanding customer intent. GPT for generating responses. Google’s own Search system pairs BERT-style query analysis with generative models that compose answers.
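The shape of such a pipeline can be sketched in a few lines. Both stages below are stand-ins, not real models: `classify_intent` uses keyword rules where a fine-tuned encoder would go, and `generate_reply` only builds the prompt that a generative model would receive. All names and intents are hypothetical.

```python
def classify_intent(message):
    """Stand-in for an encoder-based classifier (keyword rules, not BERT)."""
    text = message.lower()
    if "refund" in text:
        return "refund_request"
    if "password" in text:
        return "account_access"
    return "general"

def generate_reply(intent, message):
    """Stand-in for a decoder-based generator (builds a prompt, not GPT)."""
    instructions = {
        "refund_request": "Write a polite reply explaining the refund process.",
        "account_access": "Write a reply with password reset steps.",
        "general": "Write a friendly, helpful reply.",
    }
    # A real system would send this prompt to a generative model.
    return f"{instructions[intent]} Customer said: {message}"

def handle(message):
    """Understanding first, generation second."""
    return generate_reply(classify_intent(message), message)

print(handle("I would like a refund for my order"))
```

The design point is the hand-off: the understanding stage narrows the problem to a label, and the generation stage only has to write.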

Even the creators agree. Dr. Ashish Vaswani, co-author of the original Transformer paper, said it plainly: "BERT’s bidirectional encoding is optimal for comprehension. GPT’s autoregressive decoding is optimal for generation. They’re solving different problems."

And that’s the key takeaway. BERT and GPT aren’t rivals. They’re partners. One understands. The other creates. The future of NLP isn’t choosing one. It’s knowing when to use both.

Can BERT generate text like GPT?

No. BERT was never designed to generate text. It predicts masked words in context, but it doesn’t build sentences step by step. Researchers have coaxed BERT into producing output, but the results tend to be unnatural, repetitive, and unreliable. For text generation, models with a decoder, such as GPT and its descendants, remain the practical choice.

Is GPT better than BERT for search engines?

Not for understanding queries. Google uses BERT to interpret search intent, especially for complex, conversational phrases. Because GPT reads in one direction, it’s less suited to disambiguating a word from its full context, such as deciding whether "apple" refers to the fruit or the company. BERT’s bidirectional processing gives it the edge in search relevance. GPT might help generate the answer snippet, but BERT decides what the user really meant.

Which model is cheaper to run in production?

BERT. BERT-base requires only 4GB of GPU memory and runs efficiently on standard cloud instances. GPT-3 and later versions need multiple high-end GPUs (like NVIDIA A100s) and cost thousands per month just for inference. For most businesses, BERT is the affordable, scalable choice for language understanding tasks.

Why does BERT have a steeper learning curve?

BERT requires understanding of tokenization, attention masks, and fine-tuning on labeled datasets. You need to prepare training data, handle input length limits (512 tokens), and often build custom classifiers. GPT, especially via API, hides this complexity. You just send text and get output. So while BERT is more powerful for custom tasks, GPT is easier to start with if you just want answers or content.
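Here is a rough sketch of what that input preparation involves, in plain Python. It is a simplification under stated assumptions: the `encode` function is hypothetical, tokens are whole words where BERT uses subword IDs, and a real project would use a library tokenizer. The `[CLS]`, `[SEP]`, and `[PAD]` markers and the 512-token limit follow BERT's conventions.

```python
def encode(tokens, max_len=512, pad="[PAD]"):
    """Truncate, add [CLS]/[SEP], pad to max_len, and build the attention mask."""
    body = tokens[: max_len - 2]  # leave room for the two special tokens
    seq = ["[CLS]"] + body + ["[SEP]"]
    n_real = len(seq)
    # 1 marks a real token, 0 marks padding the model should ignore.
    attention_mask = [1] * n_real + [0] * (max_len - n_real)
    seq = seq + [pad] * (max_len - n_real)
    return seq, attention_mask

seq, mask = encode("the bank approved my loan".split(), max_len=8)
print(seq)
print(mask)
```

Anything past the length limit is simply cut off here, which is why long documents usually need to be split into chunks before they reach the model.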

Are BERT and GPT still relevant with newer models like GPT-4 and Llama 3?

Absolutely. Newer models build on their foundations. GPT-4 improves generation but is still understood to use a decoder-only structure. Llama 3 has openly available weights and follows the same autoregressive design. Meanwhile, models like DistilBERT and RoBERTa improve on BERT’s efficiency and accuracy. The core architectures, bidirectional encoding and autoregressive decoding, are still the backbone of nearly all modern NLP systems.

What Comes Next?

The line between understanding and generation is blurring. Hybrid models are rising. But BERT and GPT remain the two pillars. One teaches machines to listen. The other teaches them to speak. You don’t need to pick one. You need to know when each one shines.

For now, if you’re solving a problem of meaning, go with BERT. If you’re solving a problem of expression, go with GPT. And when you’re ready to do both? That’s when the real magic happens.

- Feb, 27 2026

- Collin Pace
