Chain-of-Thought in Vibe Coding: Why Explanations Must Come Before Code

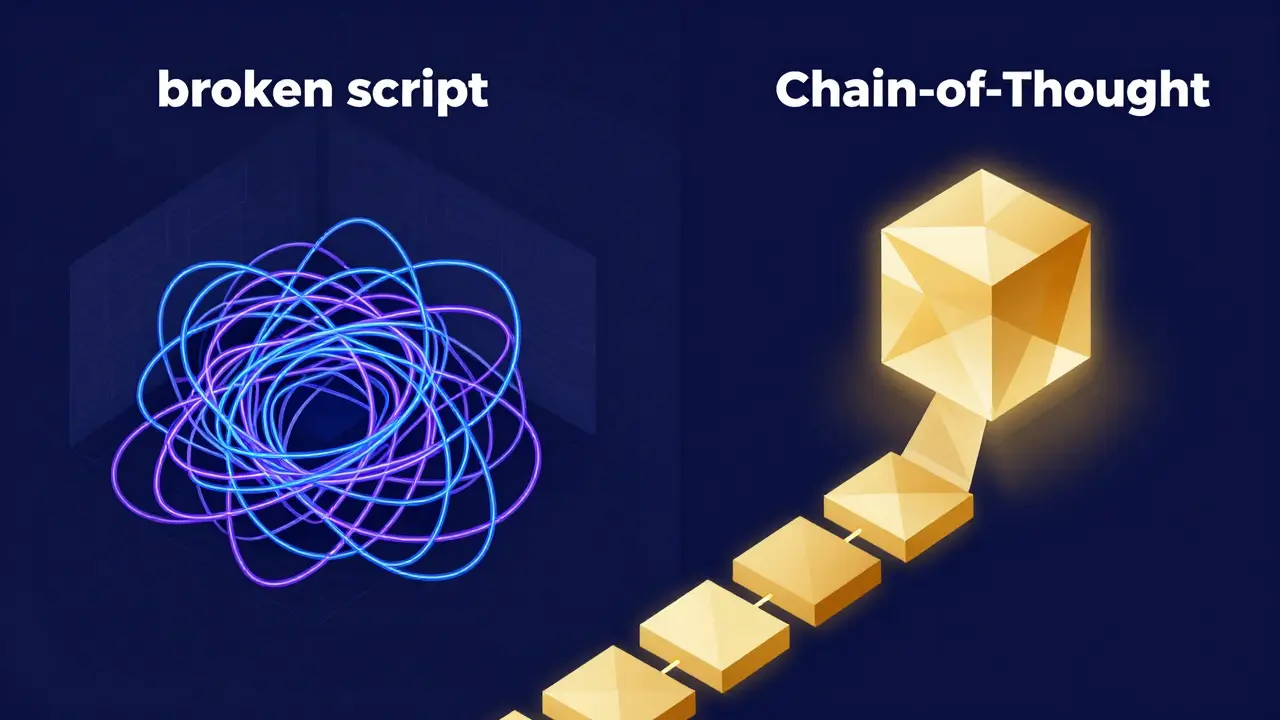

Imagine you're asking an AI to build a complex feature, say, a recursive algorithm to map a nested file system. If you just ask for the code, the AI might jump straight into syntax, hit a logical wall halfway through, and hand you a broken script that looks correct but fails in production. Now imagine the AI had to explain its logic, map out the edge cases, and justify its choice of data structures before writing a single line of code. That's the core of Chain-of-Thought prompting, and in the era of "vibe coding" (where we describe the desired feel and outcome rather than rigid specs), it can be the difference between a working prototype and a debugging nightmare.
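To make that opening example concrete, here is a minimal Python sketch of the kind of code a step-by-step plan should lead to. It assumes a POSIX-like file system and uses only the standard `pathlib` module; the `map_tree` name and the string markers for loops and errors are illustrative choices, not a standard. Note that the two edge cases a "jump straight to syntax" answer tends to miss, symlink loops and unreadable directories, are handled explicitly.

```python
from __future__ import annotations

from pathlib import Path
from typing import Optional, Union

# A node is a dict for a directory, None for a file, or a str marker for a problem.
Node = Optional[Union[dict, str]]


def map_tree(path: Path, ancestors: frozenset = frozenset()) -> Node:
    """Recursively map `path` into nested dicts keyed by entry name."""
    try:
        if not path.is_dir():
            return None  # regular file, broken symlink, socket, ...
        # stat() follows symlinks, so a link back to an ancestor shares its (device, inode).
        st = path.stat()
        key = (st.st_dev, st.st_ino)
        if key in ancestors:
            return "<symlink loop>"
        children = sorted(path.iterdir(), key=lambda p: p.name)
    except OSError as exc:  # e.g. PermissionError on an unreadable directory
        return f"<error: {exc}>"
    inner = ancestors | {key}
    return {child.name: map_tree(child, inner) for child in children}
```

Calling `map_tree(Path("."))` returns nested dicts in which files map to `None`, so a loop or a permission problem shows up as a marker in the result instead of a crash or infinite recursion.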

For those unfamiliar, Chain-of-Thought (CoT) is a prompting technique that asks large language models to articulate intermediate reasoning steps before delivering a final answer. First introduced by Google Research in 2022, it substantially improved model performance on multi-step reasoning tasks such as arithmetic word problems. In a coding context, this means moving from "Write this function" to "Think through this problem step by step, then write the function."

The Mechanics of Explanation-First Coding

When you implement CoT in your workflow, you aren't just adding fluff to a prompt; you're changing what the LLM conditions on when it writes the code. The model still predicts one token at a time, but the reasoning it writes first becomes part of the context the code is generated from, a "scratchpad" of logic. This process typically breaks down into three distinct phases:

- Problem Decomposition: The model breaks the coding task into smaller, manageable logical components. Instead of seeing "Build a payment gateway," it sees "Validate API key → Check balance → Execute transaction → Handle timeout."

- Sequential Reasoning: The AI generates a step-by-step explanation of the algorithmic approach. This is where it decides whether a hash map is better than a list for a specific lookup, explaining why before committing to the code.

- Error Prevention: By verbalizing the plan, the model often catches its own logical errors. It's essentially a pre-emptive code review the AI performs on itself.
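The payment-gateway decomposition above can be sketched as one small function per step. This is a hypothetical illustration: `VALID_KEYS`, `ACCOUNTS`, `PaymentError`, and the `simulate_timeout` flag stand in for a real key store, ledger, and payment processor. The account lookup uses a dict rather than scanning a list, the sort of choice the sequential-reasoning phase is meant to justify.

```python
from dataclasses import dataclass


class PaymentError(Exception):
    """Raised when a step rejects the request."""


@dataclass
class Account:
    balance_cents: int


# Hypothetical in-memory stand-ins for a real key store and ledger.
# A dict gives O(1) lookup by id, instead of scanning a list of accounts.
VALID_KEYS = {"key-123"}
ACCOUNTS = {"acct-1": Account(balance_cents=5_000)}


def validate_api_key(api_key: str) -> None:
    # Step 1: validate API key
    if api_key not in VALID_KEYS:
        raise PaymentError("invalid API key")


def check_balance(account_id: str, amount_cents: int) -> Account:
    # Step 2: check balance (and reject nonsense amounts early)
    account = ACCOUNTS.get(account_id)
    if account is None:
        raise PaymentError("unknown account")
    if amount_cents <= 0:
        raise PaymentError("amount must be positive")
    if account.balance_cents < amount_cents:
        raise PaymentError("insufficient funds")
    return account


def execute_transaction(account: Account, amount_cents: int, simulate_timeout: bool) -> None:
    # Step 3: execute transaction (the timeout is simulated for illustration)
    if simulate_timeout:
        raise TimeoutError("processor did not respond")
    account.balance_cents -= amount_cents


def pay(api_key: str, account_id: str, amount_cents: int, simulate_timeout: bool = False) -> str:
    validate_api_key(api_key)
    account = check_balance(account_id, amount_cents)
    try:
        execute_transaction(account, amount_cents, simulate_timeout)
    except TimeoutError:
        # Step 4: handle timeout. The outcome is unknown, so report "pending"
        # for later reconciliation instead of guessing success or failure.
        return "pending"
    return "ok"
```

Keeping each step separate makes it easy to check the code against the plan: every arrow in the decomposition corresponds to one function call in `pay`.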

The impact can be measurable. According to data from DataCamp, developers using CoT for complex algorithms saw a 63% reduction in logical errors compared to those who went for direct code generation. Writing the plan out first also gives you a chance to spot a call to a library function that doesn't exist before it ever reaches the code.

Why "Vibes" Need Logic: CoT vs. Standard Prompting

Vibe coding is all about high-level intent. You're telling the AI, "Make the checkout flow feel seamless and handle errors gracefully." But "seamless" is a vibe, not a technical specification. Without CoT, the AI guesses what "seamless" means in code. With CoT, the AI defines the logic of "seamlessness" first.

| Feature | Standard Prompting | Chain-of-Thought Prompting |
|---|---|---|
| Approach | Direct: Input → Code | Reasoning: Input → Logic → Code |
| Error Rate | Higher on complex logic | Significantly lower (up to 63% reduction) |
| Token Usage | Low/Efficient | Higher (15-20% increase) |
| Debugging Time | Standard | Up to 47% faster for junior devs |
| Best Use Case | Boilerplate, simple CRUD | Algorithms, System Design, Refactoring |

While CoT costs more tokens (and, with them, more latency), the trade-off usually pays off on complex work. If you're using Claude 3 or GPT-4.5, the cost of a few hundred extra tokens is negligible compared to the hours spent debugging a subtle race condition that a CoT process might have caught early.

Putting CoT Into Practice: A Framework for Developers

You don't need to be a prompt engineer to make this work. The key is to move away from the "black box" request. Instead of asking for a solution, ask for a blueprint first. A highly effective CoT prompt for coding usually includes three specific requirements:

- Problem Restatement: Ask the model to rephrase the challenge in its own words. This ensures the AI hasn't misinterpreted your "vibe."

- Algorithm Justification: Require the model to explain why it chose a specific approach (e.g., "I'm using a Breadth-First Search here because we need the shortest path in an unweighted graph").

- Edge Case Analysis: Force the AI to list at least three ways the code could fail (null pointers, empty strings, API timeouts) before it writes the implementation.
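The BFS justification in the list above is the kind of claim you can check directly against code. Here is a minimal Python sketch, assuming the graph is an adjacency dict (`shortest_path` is an illustrative name). Because BFS explores nodes in order of edge count from the start, the first time it reaches the goal it has found a path with the fewest edges, which is exactly why the stated reasoning holds for unweighted graphs.

```python
from collections import deque
from typing import Dict, Hashable, List, Optional


def shortest_path(graph: Dict[Hashable, List[Hashable]], start: Hashable,
                  goal: Hashable) -> Optional[List[Hashable]]:
    """Breadth-first search for a fewest-edges path in an unweighted graph."""
    if start == goal:
        return [start]
    parent = {start: None}  # doubles as the visited set
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt in parent:
                continue  # already reached by a path at least as short
            parent[nxt] = node
            if nxt == goal:
                # Walk parent links back to the start, then reverse.
                path = [nxt]
                while parent[path[-1]] is not None:
                    path.append(parent[path[-1]])
                return path[::-1]
            queue.append(nxt)
    return None  # edge case: goal unreachable
```

If the edges had weights, this reasoning would no longer hold, and a weighted algorithm such as Dijkstra's would be the usual choice; that is precisely the sort of distinction the justification step should surface.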

For example, instead of saying "Write a Python script to scrape this site," try: "First, analyze the site's structure and explain your scraping strategy. Identify potential bot-detection hurdles and how to bypass them. Once you've outlined the logic, provide the Python implementation." This shift in sequence forces the model to engage its reasoning capabilities before it starts typing syntax.
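If you send prompts like this often, the three requirements can be baked into a small template. The sketch below is a plain string builder with hypothetical section wording (`build_cot_prompt` is an illustrative name); it does not call any model API, so you can paste its output into whichever assistant you use.

```python
def build_cot_prompt(task: str, min_edge_cases: int = 3) -> str:
    """Wrap a plain coding request in an explanation-first structure.

    The step wording is illustrative, not a standard format.
    """
    steps = [
        "1. Restate the problem in your own words.",
        "2. Choose an approach and justify it (data structures, algorithm).",
        f"3. List at least {min_edge_cases} ways the code could fail "
        "(e.g. null inputs, empty strings, API timeouts).",
        "4. Only after steps 1-3, provide the implementation.",
    ]
    return f"Task: {task}\n\nBefore writing any code:\n" + "\n".join(steps)


print(build_cot_prompt("Write a Python script to scrape this site"))
```

Putting the implementation request last keeps the sequence intact: the model has to produce the restatement, justification, and edge cases before it reaches the step that asks for code.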

The Risks: Reasoning Hallucinations

It's not all magic, though. There is a phenomenon known as "reasoning hallucinations." This happens when the AI's step-by-step explanation looks perfectly logical and confident, but the actual code it produces is wrong, or vice versa: the explanation is flawed, but the code happens to work by accident.

Dr. Emily M. Bender has pointed out that these logical-looking chains can create a false sense of confidence. If the AI says, "Step 1: I will iterate through the list; Step 2: I will find the maximum value," and then produces code that actually finds the minimum, you might overlook the error because the explanation sounded so right. This is why human oversight remains non-negotiable. The CoT output is a tool for verification, not a replacement for it.
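One way to keep a plausible explanation from lulling you is to turn its claims into checks. In this minimal Python sketch, `find_max` and `check_matches_plan` are hypothetical names: the check encodes what the explanation promised (the result is one of the inputs and nothing is larger), so a minimum-finding bug fails it no matter how convincing the prose was.

```python
from typing import Callable, Iterable, List


def find_max(values: List[float]) -> float:
    # Stated plan: "Step 1: iterate through the list; Step 2: find the maximum value."
    if not values:
        raise ValueError("find_max() arg is an empty list")
    best = values[0]
    for v in values[1:]:
        if v > best:  # flipping this to `<` silently turns it into find_min
            best = v
    return best


def check_matches_plan(fn: Callable[[List[float]], float],
                       samples: Iterable[List[float]]) -> bool:
    """Check the code against what the explanation claimed: the result must be
    one of the inputs, and no input may be larger than it."""
    for values in samples:
        result = fn(values)
        if result not in values or any(v > result for v in values):
            return False
    return True
```

`check_matches_plan(find_max, [[3, 1, 2], [7]])` returns `True`, while passing the built-in `min` in place of `find_max` returns `False`.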

The Future of Explanation-First Coding

We are moving toward a world where CoT is invisible. New models, like CodeT5+ and the latest versions of Llama 3, are beginning to integrate structured reasoning directly into their training. Some AI assistants now perform "hidden" CoT, where they reason internally before presenting you with the final code.

Furthermore, IDEs are evolving. Companies like JetBrains are integrating native support for these reasoning patterns, meaning your editor might soon prompt you to "Review the AI's logic" before it allows the code to be merged into your main branch. This transforms the developer's role from coder to architect: someone who reviews logic and steers the "vibe" rather than typing every semicolon.

Does Chain-of-Thought always improve code quality?

Not always. For very simple tasks, like creating a basic HTML boilerplate or a simple CRUD operation, CoT can actually be a hindrance. It increases latency and token costs with little or no gain in accuracy. It is most effective for complex algorithmic challenges, system design, and debugging.

Will CoT make my AI requests more expensive?

Yes, typically. Because the model is generating an explanation before the code, it uses more tokens. In some cases, token usage can increase from around 150 to over 400 tokens per request. However, teams often find this cost is offset by a significant reduction in code review iterations and bug-fixing hours.
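To put those token figures in money terms, here is a back-of-the-envelope calculation. The price is an assumption (a hypothetical $10 per million output tokens), and `extra_cost_usd` is an illustrative helper; substitute your provider's actual rate.

```python
def extra_cost_usd(baseline_tokens: int, cot_tokens: int,
                   price_per_million: float, requests: int) -> float:
    """Extra spend caused by CoT output tokens.

    price_per_million is a placeholder rate in USD per million tokens;
    substitute your provider's actual pricing.
    """
    extra_tokens = cot_tokens - baseline_tokens  # e.g. 400 - 150 = 250
    return extra_tokens * requests * price_per_million / 1_000_000


# 1,000 requests at the hypothetical $10 per million tokens
print(extra_cost_usd(150, 400, 10.0, 1_000))  # → 2.5
```

Under that assumed price, 1,000 CoT requests cost about $2.50 more than their direct counterparts, which is the figure to weigh against the review and debugging time saved.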

Can I use CoT with smaller LLMs?

It's less effective. Research from Google indicates that CoT benefits primarily materialize in models with a sufficient number of parameters (typically 100 billion or more). Small models often struggle to maintain a logical chain and may simply repeat the prompt or generate disjointed explanations.

What is the simplest way to start using CoT today?

The easiest way is to add the phrase "Let's think step by step" to your prompt. For better results in coding, specifically ask the AI to "outline the logic and identify potential edge cases before providing the final implementation."

How do I handle "reasoning hallucinations" in CoT?

Always cross-reference the explanation with the implementation. If the AI claims it is handling a specific edge case in its reasoning, search the resulting code for the actual logic that handles that case. If the explanation says "I will use a try-catch block" but the code has no error handling, you've caught a hallucination.
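Part of this cross-referencing can be automated. The Python sketch below uses the standard `ast` module to check one illustrative claim: if the explanation mentions a try-catch block, the generated source must actually contain a `try` statement. The `claims_vs_code` helper and its keyword rule are hypothetical and deliberately crude; they flag candidates for human review rather than prove correctness.

```python
import ast
from typing import List

# ast.TryStar (for `except*`) only exists on Python 3.11+.
TRY_NODES = (ast.Try, getattr(ast, "TryStar", ast.Try))


def claims_vs_code(explanation: str, source: str) -> List[str]:
    """Return mismatches between what the explanation promises and what the code contains.

    Only one illustrative rule is implemented: a mention of try/catch or try/except
    in the explanation requires at least one `try` statement in the Python source.
    """
    problems: List[str] = []
    text = explanation.lower()
    promises_try = any(p in text for p in ("try-catch", "try/catch", "try/except", "try-except"))
    has_try = any(isinstance(node, TRY_NODES) for node in ast.walk(ast.parse(source)))
    if promises_try and not has_try:
        problems.append("explanation promises a try block, but the code has none")
    return problems
```

Each additional promise you care about (input validation, retries, logging) can get its own rule in the same style, but keyword matching only narrows where to look; reading the code is still the actual check.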

- Apr, 15 2026

- Collin Pace
