How to Control Enterprise LLM Costs: Quotas, Budgets, and Smart Routing

Imagine this: your engineering team launches a new customer support chatbot. It works great. Users love it. Then comes the monthly bill from your Large Language Model (LLM) provider, and it’s ten times what you expected. You didn’t get hacked. You didn’t have a data leak. You just had uncontrolled usage.

This is the reality for many companies right now. The enterprise LLM market is exploding, projected to grow from $6.7 billion in 2024 to over $71 billion by 2034. With that growth comes a massive problem: unpredictable spending. A single complex query can trigger dozens of API calls, sending costs skyrocketing before anyone notices.

The solution isn’t just picking the cheapest model. It’s building a system. You need cost controls and quotas for enterprise LLM consumption. This means setting hard limits on tokens, requests, and budgets while keeping your AI tools fast and reliable. Let’s look at how to build that system so you stop bleeding money and start spending it deliberately.

The Problem with Traditional Rate Limiting

Most developers know rate limiting. You set a rule like "100 requests per minute" or "50,000 tokens per hour." This protects your server from crashing. But it does nothing to protect your wallet.

Here’s why: not all requests are equal. One request might ask the AI to say "Hello." Another might ask it to summarize a 100-page legal contract. Both count as one request in traditional systems. But the second one costs hundreds of times more in compute power and token fees. If your system allows high-cost operations to slip through because they haven’t hit the *request* limit, your budget vanishes.

You need cost-aware rate limiting. Instead of counting requests, you count budget units, typically with a token-bucket algorithm. Think of it like a water bucket that refills at a steady rate. Every operation deducts water proportional to its cost. Simple queries take a sip; complex ones take a gulp. When the bucket empties, requests wait or are rejected until it refills. This caps spending at the refill rate, regardless of query complexity.
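To make the bucket analogy concrete, here is a minimal sketch of a cost-aware token bucket. The class name `CostBucket`, its parameters, and the dollar amounts are illustrative assumptions, not part of any particular library.

```python
import time


class CostBucket:
    """Token bucket whose units are budget (for example dollars), not requests."""

    def __init__(self, capacity, refill_per_sec, clock=time.monotonic):
        self.capacity = capacity              # maximum budget the bucket can hold
        self.refill_per_sec = refill_per_sec  # budget added back per second
        self.level = capacity                 # start with a full bucket
        self._clock = clock                   # injectable clock, handy for tests
        self._last = clock()

    def _refill(self):
        # Add budget for the time elapsed since the last check, capped at capacity.
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        self.level = min(self.capacity, self.level + elapsed * self.refill_per_sec)

    def try_spend(self, cost):
        """Deduct `cost` if the bucket holds enough; return whether it was allowed."""
        self._refill()
        if cost <= self.level:
            self.level -= cost
            return True
        return False


# Hypothetical usage: a $1.00 bucket that refills at one cent per second.
bucket = CostBucket(capacity=1.00, refill_per_sec=0.01)
cheap_ok = bucket.try_spend(0.01)    # a "sip"
costly_ok = bucket.try_spend(0.95)   # a "gulp"
```

A cheap request and an expensive request are both one call to `try_spend`, but they drain very different amounts, which is the whole point of counting budget units instead of requests.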

Building the TIER-L Framework

To manage this effectively, you need a structured framework. The FinOps Foundation and industry experts recommend a system often called TIER-L. It breaks down into five clear steps:

- T - Threshold Definitions: Set your quotas. Define exactly how many tokens, GPU hours, or dollars each team or user can spend. Don’t guess. Base these on historical data or pilot tests.

- I - Identify High-Cost Requests: Classify incoming calls. Is this a simple translation task? Or is it complex code generation? Tag them accordingly.

- E - Enforce Cost-Aware Rate Limiting: Apply the token-bucket logic. Deduct budget units based on the actual cost of the operation, not just the fact that it happened.

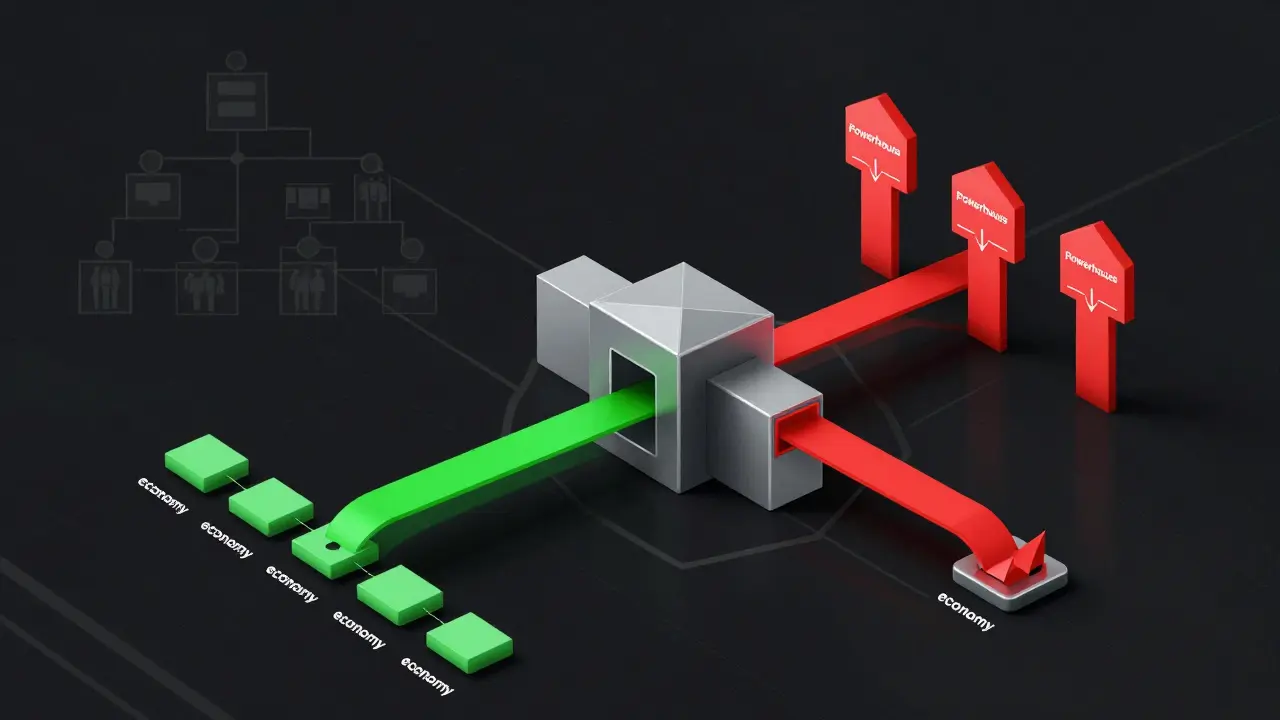

- R - Route to Cheaper Models: This is the game-changer. When a user hits their budget limit for premium models, don’t block them. Downgrade them to a cheaper, lighter model that still gets the job done.

- L - Log Anomalies: Record every throttled or rejected request. If a specific department keeps hitting limits, investigate why. Are they inefficient? Do they need more budget?

This framework turns cost control from a reactive panic into a proactive strategy.
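As a rough illustration of how the five steps fit together in one request path, consider the sketch below. Everything in it is an assumption made for the example: the team names, the dollar figures, the word-count classifier, and the function names `classify` and `handle`.

```python
import logging

logger = logging.getLogger("llm_cost")

# T: thresholds, here the remaining premium budget in dollars per team (made-up values).
premium_budget = {"support": 5.00, "research": 20.00}

# Hypothetical cost estimates in dollars for a premium-model request.
PREMIUM_COST = {"simple": 0.02, "complex": 0.50}


def classify(prompt):
    """I: toy classifier that treats long prompts as complex."""
    return "complex" if len(prompt.split()) > 200 else "simple"


def handle(team, prompt, budgets):
    """Return which model tier should serve this request."""
    complexity = classify(prompt)
    cost = PREMIUM_COST[complexity]
    # E: deduct by estimated cost, not by the mere fact that a request happened.
    if budgets.get(team, 0.0) >= cost:
        budgets[team] -= cost
        return "premium"
    # R: out of premium budget, so fall back to a cheaper model instead of blocking.
    # L: record the event so repeated downgrades can be investigated later.
    logger.warning("team=%s downgraded: %s request needs $%.2f", team, complexity, cost)
    return "economy"
```

Calling `handle("support", "Where is my order?", premium_budget)` deducts two cents and returns `"premium"`; once the team's balance cannot cover a request, the same call returns `"economy"` and leaves a log entry behind.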

Smart Routing: The Biggest Lever for Savings

Not every question needs the most powerful AI in the world. Using a top-tier model for simple tasks is like using a Ferrari to go to the grocery store. It works, but it’s wildly inefficient.

Research from UC Berkeley shows that routing simple queries to smaller, cheaper models while reserving expensive models for complex reasoning can reduce costs by up to 85%. And here’s the best part: in the reported benchmarks, output quality stayed at roughly 95% of what the premium model delivers on its own.

Consider the price differences. A premium model like GPT-4 might cost around $24.70 per million tokens. A mid-tier model could be $3-$15. An economy model like Mixtral 8x7B might cost just $0.24 per million tokens. That’s a 100-fold difference.

If 60% of your organization’s queries are simple enough for cheaper models, your total spend drops by more than half. Tools like RouteLLM use trained classifiers to assess query complexity in real-time. They decide which model handles the request before it even leaves your system. This intelligent routing is essential for any serious cost-control strategy.
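You can check the "more than half" claim with a few lines of arithmetic. The sketch below uses the per-million-token prices quoted above and assumes, for simplicity, that simple and complex queries consume the same number of tokens on average; the function name is made up for the example.

```python
def blended_price_per_million(premium_price, economy_price, share_to_economy):
    """Average price per million tokens when a share of traffic goes to the economy model."""
    return (1 - share_to_economy) * premium_price + share_to_economy * economy_price


# Prices quoted in the article, in dollars per million tokens.
premium, economy = 24.70, 0.24

blended = blended_price_per_million(premium, economy, share_to_economy=0.60)
savings = 1 - blended / premium
print(f"blended: ${blended:.2f} per million tokens, savings: {savings:.0%}")
```

With 60% of traffic routed to the economy model, the blended price comes out at about $10.02 per million tokens, a saving of roughly 59%. If your complex queries are also your longest ones, the real saving will be smaller, so treat this as an upper-end estimate.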

| Model Tier | Cost per Million Tokens | Best Use Case | Example Models |
|---|---|---|---|
| Premium | $15 - $75 | Complex reasoning, coding, legal analysis | GPT-4, Claude Opus |
| Mid-Tier | $3 - $15 | General business tasks, summarization | GPT-3.5, Claude Haiku |
| Economy | $0.25 - $4 | Simple Q&A, classification, translation | Mixtral 8x7B, Llama 3 Small |
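To show where a router sits relative to the tiers in the table, here is a deliberately naive sketch. It is a keyword-and-length heuristic standing in for a trained classifier, not how RouteLLM works; the hint lists, word-count cutoffs, and the name `pick_tier` are all assumptions.

```python
# Hypothetical keyword hints; a production router would use a trained classifier instead.
PREMIUM_HINTS = ("contract", "refactor", "legal")
MID_HINTS = ("summarize", "draft", "report")


def pick_tier(prompt):
    """Map a prompt to 'economy', 'mid', or 'premium' using crude signals."""
    text = prompt.lower()
    words = len(text.split())
    if words > 500 or any(hint in text for hint in PREMIUM_HINTS):
        return "premium"
    if words > 50 or any(hint in text for hint in MID_HINTS):
        return "mid"
    return "economy"
```

The useful property is the shape rather than the rules: the decision happens before any provider is called, so the cheapest adequate tier is chosen up front.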

The Role of Unified Gateways

You can’t control what you can’t see. In many enterprises, LLM calls are scattered across different services, teams, and codebases. Developers call OpenAI directly. Data scientists use Anthropic. Marketing uses Azure. By the time the bill arrives, nobody can say which team or feature drove the spend.

You need a unified gateway. This acts as a toll booth for all LLM traffic. Every request passes through it, gets counted, and gets priced, regardless of the provider. LiteLLM is a popular open-source proxy that provides a single interface to over 100 LLM providers. It automatically tracks tokens, latency, and costs.

With a gateway, you can enforce per-user throttling and hierarchical budgets. You can decide that the marketing team has a $5,000 monthly budget, while the R&D team has $20,000. If marketing runs out, the gateway automatically routes their requests to cheaper models or pauses them entirely. This central observation point is non-negotiable for financial discipline.
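The budget decision a gateway makes on each request could look roughly like the sketch below. This is not LiteLLM's API; `TeamBudget`, `decide`, and the `on_exhausted` field are hypothetical names, and the dollar limits are the ones from the example above.

```python
from dataclasses import dataclass


@dataclass
class TeamBudget:
    monthly_limit: float              # dollars
    spent: float = 0.0
    on_exhausted: str = "downgrade"   # or "pause"


def decide(budget, estimated_cost):
    """Return 'allow', 'downgrade', or 'pause' for one request; records spend on 'allow'."""
    if budget.spent + estimated_cost <= budget.monthly_limit:
        budget.spent += estimated_cost
        return "allow"
    return budget.on_exhausted


budgets = {
    "marketing": TeamBudget(monthly_limit=5_000),
    "rnd": TeamBudget(monthly_limit=20_000, on_exhausted="pause"),
}
```

Note that this sketch does not charge for downgraded requests. A real gateway would track spend on the cheaper model as well, since economy traffic still costs money.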

Deployment Paths and Their Cost Implications

Where you run your models matters just as much as which models you choose. There are three main paths, each with different cost structures:

- Developer-Managed Services: Using APIs from OpenAI, Anthropic, or Mistral. This is pay-per-token. It’s easy to start but gets expensive at scale. You have no control over infrastructure efficiency.

- Cloud Provider Services: Using AWS Bedrock, Azure AI, or Google Vertex AI. These offer managed services with long-term commitment options. You can save significantly if you lock in usage contracts.

- Self-Managed Deployments: Running models on your own GPUs. This requires sophisticated infrastructure management. However, for consistent, high-volume workloads, self-managed deployments can deliver up to 78% cost savings compared to pay-per-token services. The fixed cost of hardware is spread across every token you serve, so the per-token cost keeps falling as utilization rises.

Your choice depends on your volume. Low volume? Stick with APIs. High, predictable volume? Look at self-hosting or cloud commitments.
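One way to turn "depends on your volume" into a number is a break-even calculation. The sketch below is a simplification under stated assumptions: the fixed monthly cost covers hardware and operations, the marginal self-hosted price covers power and similar per-token costs, and all the figures in the usage line are invented for illustration.

```python
def breakeven_tokens_millions(fixed_monthly_cost, api_price_per_million,
                              self_hosted_price_per_million=0.0):
    """Monthly volume, in millions of tokens, above which self-hosting is cheaper."""
    margin = api_price_per_million - self_hosted_price_per_million
    if margin <= 0:
        raise ValueError("self-hosting never breaks even at these prices")
    return fixed_monthly_cost / margin


# Hypothetical inputs: $10,000/month fixed, $3.00 API price, $0.50 marginal self-hosted price.
volume = breakeven_tokens_millions(10_000, 3.00, 0.50)
```

With these made-up inputs the break-even point is 4,000 million tokens per month. Below that volume the pay-per-token API is cheaper; above it, the fixed cost starts paying for itself.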

Optimizing Prompts and Context

Cost control isn’t just about models and gateways. It’s also about how you talk to the AI. Prompt optimization is a powerful lever. Techniques like prompt compression reduce the number of tokens sent without losing meaning.

Context caching is another key tool. If you’re asking the AI to analyze a large document multiple times, cache the context so you don’t pay to send it again. Tools like LlamaIndex help optimize context loading, while LangSmith helps track conversation context to avoid redundant processing.
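Provider-side context caching depends on each vendor's API, so it is not shown here. A simpler, related idea you can apply in your own code is response caching: if the same question is asked about the same document, answer from a local cache instead of paying again. In the sketch below, `AnalysisCache` and the `call_model` callable are hypothetical stand-ins for whatever client you use.

```python
import hashlib


class AnalysisCache:
    """Memoizes results per (document, question) so identical requests are paid for once."""

    def __init__(self, call_model):
        self._call_model = call_model   # hypothetical callable: (document, question) -> str
        self._store = {}
        self.misses = 0                 # number of times the model was actually called

    def ask(self, document, question):
        # The length prefix keeps different (document, question) splits from colliding.
        raw = f"{len(document)}:{document}{question}"
        key = hashlib.sha256(raw.encode("utf-8")).hexdigest()
        if key not in self._store:
            self.misses += 1
            self._store[key] = self._call_model(document, question)
        return self._store[key]
```

This only helps with exact repeats. Different questions about the same document still send the full document each time, which is the case provider-side context caching is meant to address.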

For self-hosted models, technical optimizations like quantization (reducing precision) and pruning (removing unnecessary weights) further cut operational costs. These strategies compound with smart routing to create significant savings.

Setting Up Hierarchical Budgets

Enterprise governance requires granularity. You can’t give everyone the same budget. Different teams have different priorities. A startup might prioritize speed, giving engineers unlimited access to premium models. A mature corporation might prioritize cost, enforcing strict quotas on all departments except critical R&D.

Set alert thresholds. Don’t wait for the month-end bill. Use automation to trigger alerts when a team hits 50%, 80%, or 100% of their budget. This allows for proactive management. Maybe the marketing team needs to pause some experiments to stay within budget. Or maybe they genuinely need more resources, and you can approve an increase.
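The 50%, 80%, and 100% alerts can be driven by a small check that runs after every charge. The sketch below reports only the thresholds crossed by the latest charge, so each alert fires once rather than on every request; the function name and signature are assumptions.

```python
ALERT_THRESHOLDS = (0.5, 0.8, 1.0)


def crossed_thresholds(spent_before, spent_after, limit, thresholds=ALERT_THRESHOLDS):
    """Return the thresholds crossed by the latest charge, as fractions of the limit."""
    before = spent_before / limit
    after = spent_after / limit
    return [t for t in thresholds if before < t <= after]
```

For a team with a $5,000 limit, moving from $2,400 to $2,600 crosses only the 50% mark, while a jump from $3,900 to $5,100 crosses both 80% and 100%. What you do with the result (email, chat message, ticket) is up to your alerting setup.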

Remember, cost control isn’t about restricting innovation. It’s about ensuring sustainability. When you treat cost control as core infrastructure design rather than post-deployment cleanup, you build a resilient AI operation.

What is the difference between rate limiting and cost-aware rate limiting?

Traditional rate limiting counts requests (e.g., 100 per minute) regardless of their computational cost. Cost-aware rate limiting uses budget units, deducting from a pool based on the actual expense of each operation. Expensive queries drain the pool quickly and get throttled first, while cheap ones barely touch it.

Can I really save 85% on LLM costs by routing queries?

Yes, research suggests that routing simple queries to cheaper, smaller models while reserving premium models for complex tasks can reduce costs by up to 85%. This works because most daily queries are low-complexity and don’t require the most advanced AI capabilities.

Do I need a unified gateway like LiteLLM for small teams?

Even for small teams, a unified gateway provides visibility into where money is being spent. Without it, LLM calls scatter across codebases, making it impossible to enforce quotas or identify inefficiencies. It’s the foundation of any cost-control strategy.

When should I consider self-hosting LLMs instead of using APIs?

Self-hosting makes sense when you have consistent, high-volume workloads. While it requires significant infrastructure management, it can offer up to 78% cost savings compared to pay-per-token APIs because you pay for fixed hardware rather than variable usage fees.

How do hierarchical budgets work in practice?

Hierarchical budgets allow you to set spending limits at different organizational levels: company, department, project, or individual user. For example, the finance department might have a strict $1,000 monthly limit, while the product development team has $10,000. The system enforces these limits automatically via the gateway.
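A minimal sketch of that enforcement, assuming each level simply points at its parent: a charge goes through only if every level up to the root has room for it. The class name `BudgetNode` and the company-level limit in the usage lines are hypothetical.

```python
class BudgetNode:
    """One level in the hierarchy (company, department, project, or user)."""

    def __init__(self, name, limit, parent=None):
        self.name = name
        self.limit = limit      # dollars per month
        self.spent = 0.0
        self.parent = parent

    def _chain(self):
        # Walk from this node up to the root.
        node = self
        while node is not None:
            yield node
            node = node.parent

    def try_charge(self, cost):
        """Charge every level up to the root, or none if any level would exceed its limit."""
        chain = list(self._chain())
        if any(n.spent + cost > n.limit for n in chain):
            return False
        for n in chain:
            n.spent += cost
        return True


company = BudgetNode("company", 10_500)
finance = BudgetNode("finance", 1_000, parent=company)
product = BudgetNode("product development", 10_000, parent=company)
```

Because the check runs over the whole chain before anything is recorded, a department can be under its own limit and still be refused when the company-wide budget is exhausted.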

- May, 9 2026

- Collin Pace


- Tags:

- enterprise LLM cost controls

- AI quota management

- LLM budget governance

- smart model routing

- FinOps for AI
