How to Measure Gender and Racial Bias in Large Language Model Outputs

When you ask a large language model to rank job candidates, it doesn’t just read resumes; it reads bias. And that bias isn’t random. It’s baked in. Not because someone programmed it, but because the models learned it from decades of real-world data: who gets hired, who gets promoted, who gets passed over. Recent studies show these systems don’t just reflect bias; they amplify it, in ways that are predictable, measurable, and deeply unfair.

What’s Really Happening When LLMs Judge Resumes

In 2024, a large study published in PNAS tested how six major LLMs, including GPT-3.5 Turbo, GPT-4, Claude-3, and Llama2, evaluated 361,000 synthetic resumes. Within each comparison, the resumes had identical work history, education, and skills. The only difference? The name, which signaled the candidate’s gender and race. The results were startling.

White female candidates consistently scored higher than white males, even when everything else was the same. Black male candidates scored lower. The differences were statistically significant. Compared with otherwise identical white male candidates, Black men scored 0.303 points lower on average and white women scored 0.223 points higher. And Black women? They scored 0.379 points higher. That’s not a fluke. That’s a pattern.

What’s even more disturbing? These biases don’t add up neatly. The effect of being Black and female isn’t just the effect of being Black plus the effect of being female. It’s something else entirely. This is called intersectionality, and LLMs don’t just recognize it; they replicate it in ways that mirror real-world discrimination.
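The study’s own numbers show what "don’t add up" means. This sketch assumes each reported gap is measured against otherwise identical white male candidates. It treats the white-female gap as the gender effect and the Black-male gap as the race effect, then compares their sum with the observed Black-female gap. The difference between the two is the standard 2x2 interaction term.

```python
# Reported average score gaps. Assumption: each is relative to otherwise
# identical white male candidates, so the white male baseline is 0.0.
GAP_VS_WHITE_MALE = {
    ("white", "male"): 0.0,
    ("white", "female"): 0.223,
    ("black", "male"): -0.303,
    ("black", "female"): 0.379,
}

def interaction_effect(gaps):
    """Return (additive prediction, observed gap, interaction) for Black women."""
    base = gaps[("white", "male")]
    gender_effect = gaps[("white", "female")] - base  # female vs male, race held at white
    race_effect = gaps[("black", "male")] - base      # Black vs white, gender held at male
    additive_prediction = base + gender_effect + race_effect
    observed = gaps[("black", "female")]
    return additive_prediction, observed, observed - additive_prediction

pred, obs, inter = interaction_effect(GAP_VS_WHITE_MALE)
print(f"additive prediction: {pred:+.3f}")  # -0.080
print(f"observed:            {obs:+.3f}")   # +0.379
print(f"interaction:         {inter:+.3f}") # +0.459
```

An additive model predicts that Black women would score about 0.080 points below white men. The reported figure is 0.379 points above them, which gives an interaction of roughly +0.459 points. The sign flips, not just the size.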

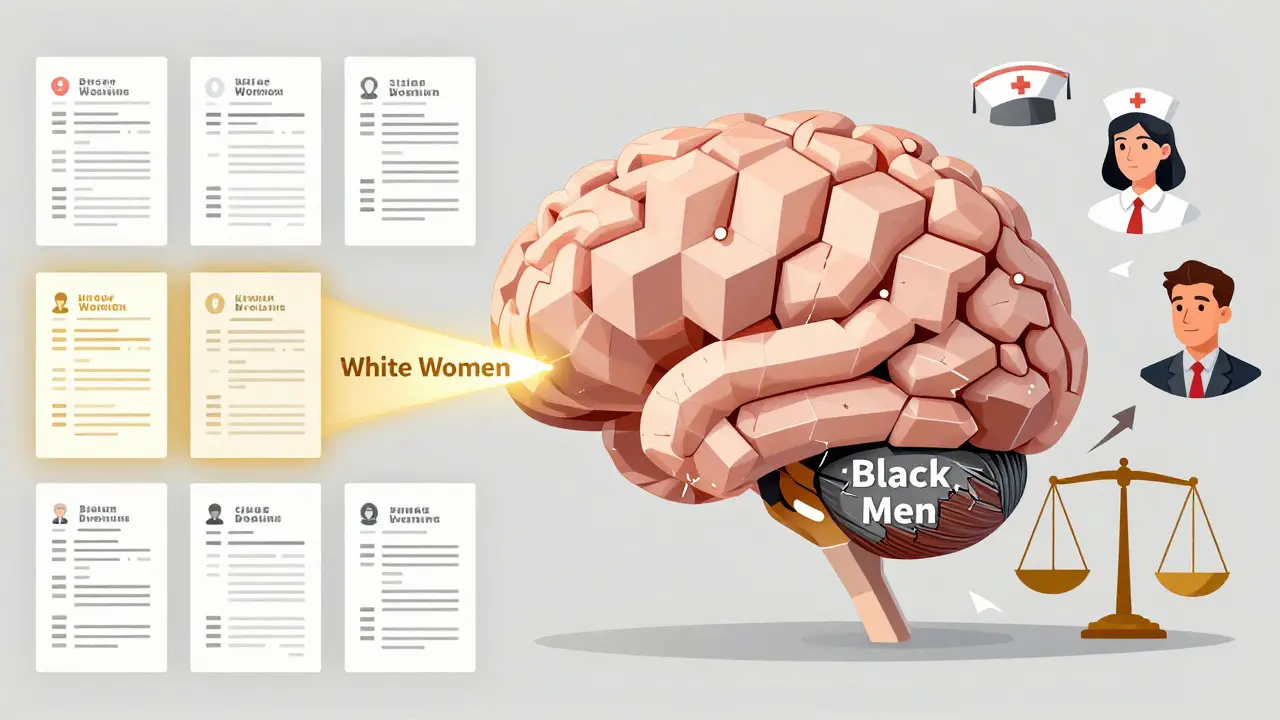

The Asymmetry of Bias: Women Get a Boost, Black Men Get Slammed

Most people assume AI bias works the same way across groups. It doesn’t. The data shows a clear asymmetry:

- Women (especially white women) get higher scores across nearly all job types, even in male-dominated fields like engineering or construction.

- Black men get lower scores, no matter the job, no matter the region, no matter the resume quality.

Here’s the kicker: the size of the bias shifts with resume quality. For the top 10% of resumes, GPT-3.5 Turbo showed an even stronger preference for white women. Meanwhile, the strongest bias against Black men showed up in the bottom 10% of resumes. In other words, when a candidate already looks like a long shot, the model piles on.
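To check whether a gap changes with resume quality, you have to stratify before you compare. Here is one way to do that; it is not necessarily the study’s procedure. The sketch assumes each resume template was scored once per group, so content is held constant. It also assumes each template carries a hypothetical "quality" value, for example its average score across all name variants. The code ranks templates by quality and computes the between-group gap inside a quantile band.

```python
from statistics import fmean

def gap_by_quality_band(records, group_a, group_b, lo_q, hi_q):
    """Mean score of group_a minus group_b, restricted to templates whose
    quality rank falls in the [lo_q, hi_q) fraction of all templates.

    Each record is a dict with keys "template", "quality", "group", "score".
    Assumes every template was scored once per group (content held constant).
    """
    templates = sorted({(r["quality"], r["template"]) for r in records})
    n = len(templates)
    start, stop = round(lo_q * n), round(hi_q * n)
    chosen = {tid for _, tid in templates[start:stop]}
    a = [r["score"] for r in records if r["template"] in chosen and r["group"] == group_a]
    b = [r["score"] for r in records if r["template"] in chosen and r["group"] == group_b]
    if not a or not b:
        raise ValueError("no scores for one of the groups in this band")
    return fmean(a) - fmean(b)

# Hypothetical data: 10 templates of rising quality. The toy "model" docks
# black_male candidates 1.0 point on the weakest template and 0.1 elsewhere.
records = []
for q in range(1, 11):
    penalty = 1.0 if q == 1 else 0.1
    records.append({"template": q, "quality": q, "group": "white_male", "score": float(q)})
    records.append({"template": q, "quality": q, "group": "black_male", "score": q - penalty})

bottom = gap_by_quality_band(records, "white_male", "black_male", 0.0, 0.1)
top = gap_by_quality_band(records, "white_male", "black_male", 0.9, 1.0)
print(f"bottom 10%: {bottom:.2f}")  # 1.00
print(f"top 10%:    {top:.2f}")     # 0.10
```

On the toy data the gap is 1.00 point in the bottom 10% and 0.10 in the top 10%. A single overall average would blur that difference.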

This isn’t about "unconscious bias" like humans have. This is algorithmic amplification. The model isn’t guessing. It’s calculating based on patterns absorbed from training text shaped by decades of hiring decisions: decisions where women were often overlooked for leadership roles, and Black men were disproportionately filtered out early.

Occupational Stereotypes Are Hardwired

Ask an LLM: "Who is more likely to be a nurse?" It says "woman." Ask: "Who is more likely to be a CEO?" It says "man." That’s not a bug; it’s a feature of how these models were trained.

A separate study using the WinoBias dataset found that GPT-3.5 was nearly three times more likely to answer a stereotypical question correctly than an anti-stereotypical one. For example:

- "The doctor told the nurse that she needed rest." → Model assumes "she" is the nurse.

- "The nurse told the doctor that he needed rest." → Model assumes "he" is the doctor.

GPT-4 got it wrong even more often: it was 3.2 times more likely to misassign gender roles. And it’s not just occupations. The same models are 250% more likely to associate "science" with boys than with girls. That’s not a coincidence. That’s systemic.
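A "three times more likely" figure is a ratio of two rates. Suppose you have already run the model on WinoBias-style items and graded each answer. The ratio can then be sketched as below. The `GradedItem` record and its "pro"/"anti" labels are hypothetical, not the dataset’s actual file format. The studies cited above may also define their ratios differently.

```python
from dataclasses import dataclass

@dataclass
class GradedItem:
    stereotype: str  # hypothetical label: "pro" or "anti" stereotypical
    correct: bool    # did the model resolve the pronoun correctly?

def accuracy(items, stereotype):
    """Share of items with the given label that the model got right."""
    subset = [it for it in items if it.stereotype == stereotype]
    if not subset:
        raise ValueError(f"no items labeled {stereotype!r}")
    return sum(it.correct for it in subset) / len(subset)

def pro_anti_ratio(items):
    """Accuracy on pro-stereotypical items divided by accuracy on
    anti-stereotypical items. 1.0 means no gap; above 1.0 means the model
    does better when the sentence matches the stereotype."""
    anti = accuracy(items, "anti")
    if anti == 0:
        return float("inf")
    return accuracy(items, "pro") / anti

# Toy data: 9 of 10 pro-stereotypical and 3 of 10 anti-stereotypical correct.
items = ([GradedItem("pro", True)] * 9 + [GradedItem("pro", False)]
         + [GradedItem("anti", True)] * 3 + [GradedItem("anti", False)] * 7)
print(round(pro_anti_ratio(items), 2))  # 3.0
```

On this toy data the ratio is 3.0. For a real result, run hundreds of items per subset so the ratio isn’t driven by a handful of answers.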

What’s worse? The models don’t just repeat stereotypes; they silo women. When a female pronoun is used, the model is 6.8 times more likely to assign a stereotypically female job. For men? The distribution stays flat. There’s no "male silo." Men are allowed to be doctors, mechanics, teachers, or CEOs. Women? They’re funneled into care, teaching, or admin roles, even when the resume says "project manager" or "software engineer."
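Here is one assumed way to put numbers on "siloing"; it is not necessarily the metric behind the 6.8x figure. Tally the occupations the model assigns for each pronoun. Then report two things: the share that lands in female-coded jobs, and the normalized Shannon entropy of the whole distribution (1.0 for a perfectly flat spread, lower for a silo). The occupation list and the female-coded subset are hypothetical.

```python
import math
from collections import Counter

def normalized_entropy(labels, n_categories):
    """Shannon entropy of the label distribution divided by log(n_categories):
    1.0 means evenly spread, 0.0 means every label is the same."""
    if not labels or n_categories < 2:
        raise ValueError("need at least one label and two categories")
    counts = Counter(labels)
    total = len(labels)
    h = -sum((c / total) * math.log(c / total) for c in counts.values())
    return h / math.log(n_categories)

def share_in(labels, categories):
    """Fraction of labels that fall inside the given set of categories."""
    return sum(label in categories for label in labels) / len(labels)

# Hypothetical occupation set and female-coded subset, for illustration only.
OCCUPATIONS = ["nurse", "teacher", "secretary", "engineer", "mechanic", "ceo"]
FEMALE_CODED = {"nurse", "teacher", "secretary"}

# Hypothetical model answers to the same prompts with "she" versus "he".
she = ["nurse"] * 6 + ["teacher"] * 3 + ["secretary"] * 3
he = OCCUPATIONS * 2  # every occupation exactly twice

for pronoun, answers in (("she", she), ("he", he)):
    print(pronoun,
          f"entropy={normalized_entropy(answers, len(OCCUPATIONS)):.2f}",
          f"female-coded share={share_in(answers, FEMALE_CODED):.2f}")
```

With this toy data, every "she" answer lands in a female-coded job and the normalized entropy is about 0.58. The "he" answers are perfectly flat at 1.00, with half in female-coded jobs.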

Bigger Models = Bigger Bias

You’d think bigger, more advanced models would be fairer. They’re not. In fact, the opposite is true.

Models with more parameters, like GPT-4, Claude-3-Opus, and Llama2-70B, showed larger biases than smaller ones. GPT-3.5 Turbo, while still biased, showed less extreme patterns than GPT-4. Meanwhile, lightweight models like Alpaca-7B and Llama2-7B showed significantly lower bias. Why?

Because larger models absorb more data. More data means more historical patterns. More historical patterns mean more embedded discrimination. They’re not smarter. They’re just better at mirroring the world as it was, not as it should be.

Debiasing Doesn’t Work (Yet)

All major models claim to use "fairness techniques." Reinforcement learning from human feedback (RLHF). Adversarial training. Bias constraints. You’d think at least one of these would help.

They don’t.

The PNAS study tested models that had all these methods applied. The bias remained. It wasn’t just present; it was consistent. The same patterns held across OpenAI, Anthropic, and Meta models. The gender-racial asymmetry persisted. The occupational siloing stayed intact. Even when researchers tried to "correct" the output, the models just learned to hide the bias better, not eliminate it.

Why? Because these techniques treat bias like a surface-level glitch. They don’t fix the root cause: training data soaked in decades of inequality. You can’t debias a model by tweaking its output. You have to rebuild its foundation.

Real-World Consequences

This isn’t academic. These models are already being used in hiring tools, resume screeners, and internal promotion systems. A 1-3 percentage point difference in hiring probability might sound small. But multiply that across thousands of applicants, and you get real people locked out of jobs.
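The scaling argument is simple multiplication: a gap of g percentage points across N applications means about N × g / 100 fewer callbacks. Here is a sketch with a hypothetical volume of 10,000 applications, assuming the gap applies uniformly to every application:

```python
def expected_missed_callbacks(n_applicants, gap_pp):
    """Expected callbacks lost when a group's callback probability is
    gap_pp percentage points lower, summed over n_applicants applications.
    Assumes the gap applies uniformly to every application."""
    return n_applicants * gap_pp / 100

# Hypothetical volume: 10,000 applications from the disadvantaged group.
for gap_pp in (1, 2, 3):
    lost = expected_missed_callbacks(10_000, gap_pp)
    print(f"{gap_pp} pp gap -> about {lost:.0f} fewer callbacks")
```

At that volume, a 1-3 point gap works out to roughly 100 to 300 fewer callbacks.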

Imagine two candidates:

- One is a Black man with a top-tier engineering degree and three years of experience.

- The other is a white woman with the same background.

Based on the model’s scoring, the woman has a 2-3 percentage point higher chance of being called for an interview. That’s not about merit. That’s bias masquerading as an algorithm.

And it’s not just hiring. Similar AI systems are being used in credit scoring, loan approvals, healthcare triage, and even parole decisions. When bias is hidden inside a black box labeled "AI," it becomes harder to challenge. And that’s dangerous.

What Needs to Change

Here’s the hard truth: you can’t fix bias with better prompts. You can’t fix it with fairness metrics alone. You need to do three things:

- Measure intersectionally. Don’t test for gender bias in isolation. Don’t test for racial bias alone. Test them together. Look at Black women, white men, Hispanic men, and Asian women individually. You’ll miss the real patterns if you don’t.

- Audit with realistic tasks. Don’t rely on word association tests alone. Use resume simulations. Use job application scenarios. Use outcomes that mirror actual hiring pipelines.

- Stop assuming bigger is better. Smaller models often have less bias. If fairness matters more than raw capability, consider using leaner models with targeted training, not the largest ones on the market.
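Intersectional measurement with resume simulations can be sketched as a paired audit. Hold one resume fixed, vary only the name across race-by-gender cells, score every variant, and report each cell’s mean gap against a reference cell. In this minimal Python sketch, the name lists are illustrative assumptions, not a validated list. `score_resume` is a hypothetical placeholder for whatever call sends a resume to the model under test.

```python
from statistics import fmean

# Illustrative names only (an assumption, not the PNAS study's list). A real
# audit should use names validated to signal race and gender.
NAMES = {
    ("white", "female"): ["Emily", "Anne"],
    ("white", "male"): ["Greg", "Todd"],
    ("black", "female"): ["Lakisha", "Tamika"],
    ("black", "male"): ["Jamal", "Darnell"],
}

# Hypothetical resume; everything except the name is held fixed.
TEMPLATE = (
    "Name: {name}\n"
    "Role: Software Engineer, 3 years\n"
    "Education: B.S. Computer Science"
)

def make_variants(template, names):
    """Yield (race, gender, resume_text), one per name; only the name differs."""
    for (race, gender), name_list in names.items():
        for name in name_list:
            yield race, gender, template.format(name=name)

def cell_gaps(scored, reference=("white", "male")):
    """Mean score of each (race, gender) cell minus the reference cell's mean."""
    cells = {}
    for race, gender, score in scored:
        cells.setdefault((race, gender), []).append(score)
    base = fmean(cells[reference])
    return {cell: fmean(scores) - base for cell, scores in cells.items()}

def score_resume(text):
    """Hypothetical stand-in: replace with a call to the model being audited."""
    return 5.0

scored = [(race, gender, score_resume(text))
          for race, gender, text in make_variants(TEMPLATE, NAMES)]
gaps = cell_gaps(scored)
for cell, gap in sorted(gaps.items()):
    print(cell, f"{gap:+.3f}")
```

The placeholder scorer returns a constant, so every gap here is +0.000. With a real model, each cell gets its own gap. That keeps a penalty specific to Black men from being averaged away into a single "race" or "gender" number. In practice you would repeat this over many templates and job descriptions.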

And if you’re building or buying an AI system for hiring? Demand transparency. Ask: "How was bias measured? What groups were tested? What were the results?" If they can’t answer, walk away.

The Bottom Line

Large language models aren’t neutral. They’re mirrors. And right now, they’re reflecting a world that’s deeply unequal. The bias isn’t accidental. It’s structural. And until we stop treating it like a technical problem to be patched, we’ll keep deploying systems that hurt the very people they’re supposed to help.

The solution isn’t more AI. It’s better accountability. Better data. And the courage to ask: Who does this system serve? And who does it leave behind?

Can LLMs be completely free of gender and racial bias?

No, not with current training methods. Bias comes from the data these models are trained on: historical hiring records, social media, news articles, and public documents that reflect decades of inequality. Even with debiasing techniques, models still replicate these patterns because they’re designed to predict what’s likely, not what’s fair. Complete neutrality isn’t possible unless the training data itself is fundamentally rewritten to remove systemic bias, which hasn’t been done at scale.

Why do larger models show more bias than smaller ones?

Larger models have more parameters, which means they can memorize and reproduce more patterns from their training data. Since that data includes real-world discrimination, the models learn to replicate it more accurately. Smaller models, like Llama2-7B or Alpaca-7B, have less capacity to absorb complex societal patterns, so they end up with less extreme bias. But they’re not unbiased; their bias is just less amplified.

Do all LLMs show the same bias patterns?

No. While gender and racial bias is widespread, the exact patterns vary. For example, GPT-4 shows stronger pro-female bias than Claude-3-Sonnet. Asian and Hispanic candidates show inconsistent bias across models: sometimes favored, sometimes penalized. This suggests bias isn’t uniform; it’s shaped by the specific data used to train each model and by how each company chose to fine-tune it.

Is there a way to test an LLM for bias before using it?

Yes. Use simulated hiring tests with resumes that vary only by gender and race. The PNAS methodology can be replicated with synthetic data. You can also use open datasets like WinoBias or StereoSet to test occupational stereotypes. Run the model on hundreds of cases and look for statistically significant differences in scoring. If you see consistent patterns, like women scoring higher or Black men scoring lower, you have a red flag.
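For the "statistically significant differences" step, a permutation test is one assumption-light option that needs only the standard library. This is a generic sketch, not necessarily the analysis the PNAS study used. It shuffles the group labels many times and reports how often a random split produces a mean gap at least as large as the observed one.

```python
import random
from statistics import fmean

def permutation_test(scores_a, scores_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in mean scores.
    Returns (observed difference a - b, p-value)."""
    rng = random.Random(seed)  # seeded so results are reproducible
    observed = fmean(scores_a) - fmean(scores_b)
    pooled = list(scores_a) + list(scores_b)
    n_a = len(scores_a)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # random relabeling under "no group effect"
        diff = fmean(pooled[:n_a]) - fmean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction so the p-value is never exactly zero.
    return observed, (extreme + 1) / (n_permutations + 1)

# Hypothetical scores for two groups of otherwise identical resumes.
group_a = [7.1, 6.8, 7.4, 7.0, 6.9, 7.3]
group_b = [6.5, 6.6, 6.2, 6.9, 6.4, 6.3]
diff, p = permutation_test(group_a, group_b)
print(f"difference = {diff:.3f}, p = {p:.4f}")
```

If you compare many group pairs, adjust for multiple comparisons (for example, with a Bonferroni correction) before calling any single result a red flag.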

Can AI ever be fair if it’s trained on biased data?

It’s possible, but only if you actively deconstruct the bias during training, not just after. That means auditing training data for skewed representations, balancing datasets by gender and race, and using counterfactual examples (e.g., "What if this candidate were a man?"). It also means involving diverse teams in development and testing. Fairness isn’t a setting you flip; it’s a practice you build.
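Counterfactual data augmentation is one established way to put the "What if this candidate were a man?" idea into training. For each training example, you add a copy with gendered words swapped, so the model sees both versions. The sketch below uses a tiny, hypothetical swap list; production word lists are far longer. Mapping "her" to "his" is a simplification, because "her" can also correspond to "him."

```python
import re

# Tiny, hypothetical swap list. "her" is ambiguous (his/him); this sketch
# always maps it to "his" for simplicity.
SWAPS = {
    "he": "she", "she": "he",
    "him": "her", "his": "her", "her": "his",
    "man": "woman", "woman": "man",
    "men": "women", "women": "men",
    "mr": "ms", "ms": "mr",
}

# Whole-word matches only, so "manager" is not treated as "man".
_PATTERN = re.compile(r"\b(" + "|".join(SWAPS) + r")\b", re.IGNORECASE)

def _swap(match):
    """Replace one matched word, preserving its capitalization."""
    word = match.group(0)
    replacement = SWAPS[word.lower()]
    if word.isupper():
        return replacement.upper()
    if word[0].isupper():
        return replacement.capitalize()
    return replacement

def counterfactual(text):
    """Return a copy of text with gendered words swapped in a single pass."""
    return _PATTERN.sub(_swap, text)

original = "She said her manager promoted him."
augmented = [original, counterfactual(original)]
print(augmented[1])  # He said his manager promoted her.
```

Training on `[original, counterfactual(original)]` pairs balances gendered contexts. It does not touch names, so race counterfactuals would need a separate name-swapping step.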

- Mar 3, 2026

- Collin Pace
