Hybrid Search for RAG: Why Combining Semantic and Keyword Retrieval Boosts Accuracy

Your Retrieval-Augmented Generation (RAG) system is smart. It understands context. It grasps nuance. But sometimes, it completely misses the mark on simple facts. You ask it about a specific error code, a rare medical acronym, or a line of Python syntax, and it gives you a vague, confident hallucination instead of the exact document you need. This happens because pure semantic search relies on meaning, not exact matches. It’s like asking a librarian to find books about "the feeling of loss" when what you actually have is a specific ISBN. To fix this, you need Hybrid Search, which combines semantic vector search with keyword-based retrieval to ensure your Large Language Models (LLMs) get both the right context and the right facts.

In 2026, Hybrid Search isn’t just a nice-to-have feature; it is the industry standard for mission-critical RAG implementations. According to Gartner’s February 2025 Emerging Technologies Hype Cycle, nearly 78% of enterprise RAG systems now incorporate hybrid retrieval. Why? Because relying solely on vectors leaves gaps in precision, while relying solely on keywords kills contextual understanding. Hybrid Search bridges that gap, delivering high recall and high precision simultaneously.

The Core Problem: Why Pure Semantic Search Fails

To understand why you need hybrid search, you first have to see where pure semantic search breaks down. Semantic search works by converting text into dense vector representations, usually arrays of 384 to 1,536 numbers (one per dimension). These vectors capture the *meaning* of words. If you search for "feline companions," a semantic engine will find documents containing "cats" because their vector embeddings are close together in space.
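To make "close together in space" concrete, here is a minimal sketch of cosine similarity over toy vectors. The three-dimensional embeddings are hand-picked assumptions for illustration; a real embedding model would produce hundreds of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, invented for this example only.
embeddings = {
    "feline companions": [0.9, 0.1, 0.0],
    "cats": [0.8, 0.2, 0.1],
    "tax returns": [0.0, 0.1, 0.9],
}

query = embeddings["feline companions"]

# Rank the other texts by how closely their vectors point in the query's direction.
ranked = sorted(
    (name for name in embeddings if name != "feline companions"),
    key=lambda name: cosine_similarity(query, embeddings[name]),
    reverse=True,
)
print(ranked)
```

Note that nothing here checks whether the words themselves match, which is exactly where the problems below come from.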

This is great for general conversation but terrible for technical precision. Here is the issue:

- Rare Terms and Acronyms: Vectors struggle with terms that don't appear often in training data. A query for "HbA1c" (a medical marker) might be interpreted as a generic health question, missing specific lab result documents.

- Code Snippets: Programming languages rely on exact syntax. Searching for `np.dot` semantically might return articles about "dot products" in mathematics, but miss the specific NumPy documentation page you actually need.

- Proper Nouns: Unique identifiers, case numbers, or product SKUs often lack semantic "neighbors." They are isolated points in vector space.

As noted in Towards AI’s November 2023 analysis, semantic search can miss results that contain exact keyword matches, especially for these edge cases. This leads to what developers call "zero-result queries" or, worse, irrelevant context being fed to the LLM, causing downstream errors.

How Hybrid Search Works: The Two-Engine Approach

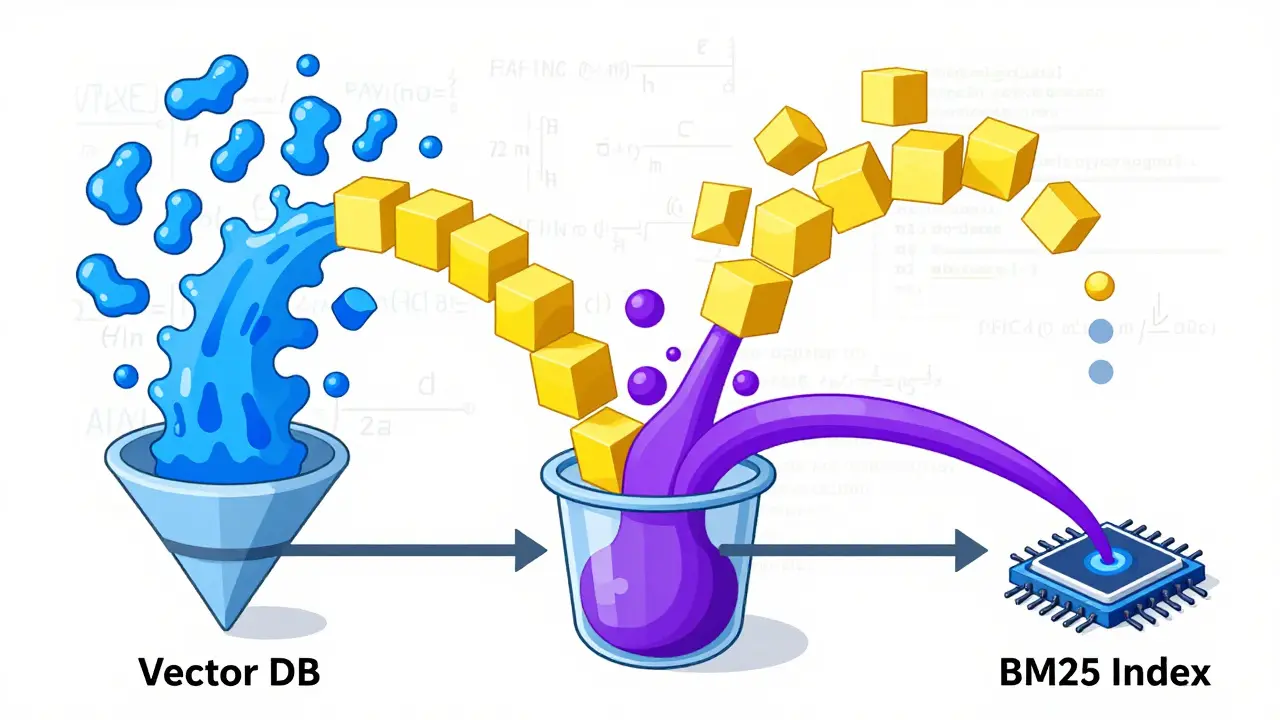

Hybrid Search solves this by running two separate searches simultaneously and then combining the results. Think of it as having two librarians working together: one who understands concepts and one who uses an index card catalog.

Here is the four-stage process:

- Dual Querying: When a user submits a query, the system sends it to two indexes at once. One goes to the Vector Database (like Pinecone, Chroma, or FAISS) for semantic matching. The other goes to a keyword index using the BM25 algorithm.

- Independent Scoring: The vector database returns results based on cosine similarity (how close the meanings are). The keyword index returns results based on term frequency and inverse document frequency (how often the exact words appear).

- Fusion: The system combines these two lists of results using a mathematical formula. This is the most critical step.

- Ranking: The final combined list is ranked and passed as context to the LLM.
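The four stages above can be sketched as a small pipeline. This is an outline under stated assumptions, not a reference implementation: `hybrid_retrieve`, the two fake retrievers, and the `interleave` placeholder are hypothetical names, and a real system would call a vector database and a BM25 index instead.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Retriever = Callable[[str], list[str]]  # query -> ranked document ids
Fuser = Callable[[list[str], list[str]], list[str]]


def hybrid_retrieve(query: str, semantic: Retriever, keyword: Retriever,
                    fuse: Fuser, top_k: int = 3) -> list[str]:
    # Stages 1 and 2: send the query to both indexes at once;
    # each one scores and ranks independently.
    with ThreadPoolExecutor(max_workers=2) as pool:
        semantic_future = pool.submit(semantic, query)
        keyword_future = pool.submit(keyword, query)
        semantic_hits = semantic_future.result()
        keyword_hits = keyword_future.result()
    # Stage 3: merge the two ranked lists.
    fused = fuse(semantic_hits, keyword_hits)
    # Stage 4: keep the best results as LLM context.
    return fused[:top_k]


# Stand-ins for real indexes (assumptions for illustration).
def fake_semantic(query: str) -> list[str]:
    return ["doc_concept", "doc_both", "doc_misc"]


def fake_keyword(query: str) -> list[str]:
    return ["doc_both", "doc_exact"]


def interleave(a: list[str], b: list[str]) -> list[str]:
    """Placeholder fusion: alternate between the lists, skipping duplicates."""
    merged, seen = [], set()
    for i in range(max(len(a), len(b))):
        for hits in (a, b):
            if i < len(hits) and hits[i] not in seen:
                seen.add(hits[i])
                merged.append(hits[i])
    return merged


context = hybrid_retrieve("error code E1234", fake_semantic, fake_keyword, interleave)
print(context)
```

Passing the fusion step in as a function keeps the pipeline unchanged when you swap the placeholder for a real fusion method.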

The keyword side typically uses the BM25 algorithm, which stands for Best Matching 25. It evaluates relevance by measuring how frequent a word is in a specific document compared to how common it is across the entire corpus. This ensures that if a unique term appears in a document, that document jumps to the top of the keyword list, even if its semantic meaning is slightly off.
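For intuition, here is a minimal sketch of BM25 scoring in plain Python. It assumes whitespace tokenization, the commonly used defaults `k1=1.5` and `b=0.75`, and a smoothed IDF variant that stays non-negative; production engines add stemming, stop words, and an inverted index.

```python
import math
from collections import Counter


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score every document against the query with a basic BM25 formula."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n_docs

    # Document frequency: in how many documents does each term occur?
    doc_freq: Counter = Counter()
    for tokens in tokenized:
        doc_freq.update(set(tokens))

    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in term_freq:
                continue
            # Rare terms get a high IDF; common terms get a low one.
            idf = math.log((n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5) + 1)
            # Term frequency saturates via k1 and is normalized by document length via b.
            denom = term_freq[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * term_freq[term] * (k1 + 1) / denom
        scores.append(score)
    return scores


docs = [
    "patient hba1c result was elevated at last visit",
    "general advice on healthy eating and exercise",
    "blood sugar management for patients with diabetes",
]
scores = bm25_scores("hba1c result", docs)
print(scores)
```

Only the first document contains the query terms, so it is the only one with a non-zero score, regardless of how topically related the third document is.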

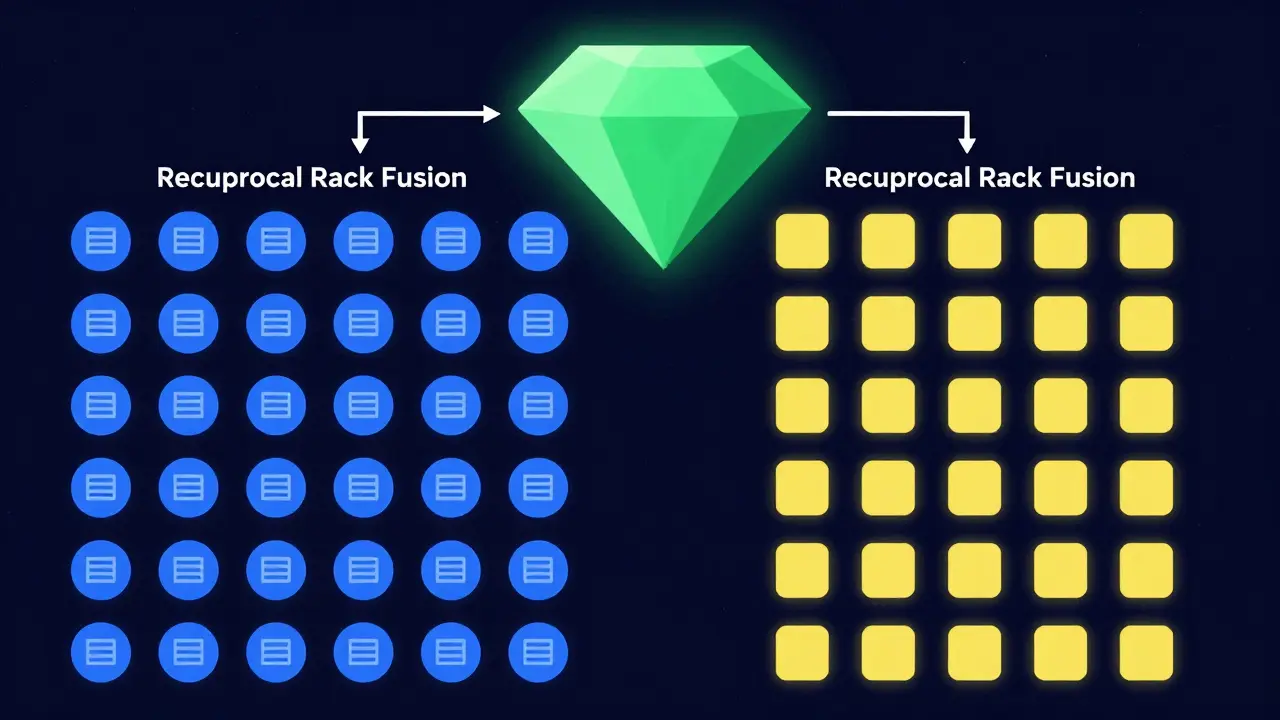

Fusion Techniques: How to Combine the Scores

You can’t just average the scores from semantic and keyword search, because the scales are different. Cosine similarity scores fall between -1 and 1, while BM25 scores are unbounded non-negative numbers. You need a fusion method that reconciles the two lists before merging them. There are three main approaches used in production today.

| Method | How It Works | Best For | Complexity |
|---|---|---|---|
| Reciprocal Rank Fusion (RRF) | Merges rankings based on position. A document ranked #1 in both lists gets a huge boost. No score normalization needed. | General purpose, robust against outliers. | Low |
| Simple Weighted Fusion | Assigns fixed weights (e.g., 30% semantic, 70% keyword) after normalizing scores. | Domains requiring heavy exact-match precision (Legal, Code). | Medium |
| Linear Fusion Ranking (LFR) | Calculates a weighted sum of transformed scores. Often requires machine learning to tune weights. | Enterprise systems with custom tuning pipelines. | High |

Reciprocal Rank Fusion (RRF) is currently the most popular choice. As documented by Fuzzy Labs in April 2024, RRF uses a mathematical formula to merge rankings, ensuring that even lower-ranked results from one method can contribute if they are consistently relevant in the other. It doesn’t require you to normalize scores, making it easier to implement.
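A minimal sketch of RRF, assuming the usual formulation in which each document earns `1 / (k + rank)` from every list it appears in. The constant `k = 60` is a widely used default, and the document ids are made up.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse ranked lists of document ids; only positions matter, not raw scores."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical result lists from the two retrievers.
semantic_ranking = ["doc_a", "doc_b", "doc_c"]
keyword_ranking = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([semantic_ranking, keyword_ranking])
for doc_id, score in fused:
    print(f"{doc_id}: {score:.4f}")
```

Here `doc_a` and `doc_c` rise to the top because both lists contain them, while `doc_b` and `doc_d` still get partial credit from a single list.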

Simple Weighted Fusion is more transparent. You explicitly decide how much you trust each engine. For example, LangChain implementations often use a 30% semantic / 70% keyword split for technical documentation. This forces the system to prioritize exact term matches while still keeping some contextual awareness.
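A weighted fusion along these lines could look like the sketch below. It assumes min-max normalization to bring both score sets onto a 0 to 1 scale and treats a document missing from one list as scoring 0 there; the function names and sample scores are illustrative, not taken from LangChain.

```python
def min_max(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to the 0..1 range so the two engines become comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (score - lo) / (hi - lo) for doc, score in scores.items()}


def weighted_fusion(semantic: dict[str, float], keyword: dict[str, float],
                    semantic_weight: float = 0.3) -> list[tuple[str, float]]:
    """Combine normalized scores; the keyword weight is 1 - semantic_weight."""
    sem, kw = min_max(semantic), min_max(keyword)
    keyword_weight = 1.0 - semantic_weight
    fused = {
        doc: semantic_weight * sem.get(doc, 0.0) + keyword_weight * kw.get(doc, 0.0)
        for doc in set(sem) | set(kw)
    }
    return sorted(fused.items(), key=lambda item: item[1], reverse=True)


# Hypothetical raw scores: cosine similarities vs. BM25 scores.
semantic_scores = {"doc_a": 0.82, "doc_b": 0.79, "doc_c": 0.40}
keyword_scores = {"doc_b": 12.4, "doc_c": 7.1, "doc_d": 3.0}

fused = weighted_fusion(semantic_scores, keyword_scores, semantic_weight=0.3)
print(fused)
```

With a 30/70 split, `doc_b` wins because it is strong in both lists, and the top semantic hit `doc_a` drops because the keyword index never returned it.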

Performance Gains: What the Data Shows

Is the extra complexity worth it? The benchmarks say yes, especially for technical domains. Meilisearch’s June 2024 technical blog reported that properly tuned hybrid systems achieve up to 37% improvement in retrieval accuracy compared to single-method approaches.

Let’s look at specific verticals:

- Healthcare: Hybrid search showed a 35.7% improvement in retrieving documents containing critical medical abbreviations like "COPD" or "HbA1c." Pure semantic search often misinterpreted these acronyms as general health topics.

- Developer Tools: Applications showing code examples saw a 41.2% improvement in retrieving snippets with specific syntax like `lambda` functions or `np.dot`. Exact match is non-negotiable here.

- Legal Compliance: Legal RAG systems achieved 33.4% better retrieval of case-specific references and law codes. Lawyers need the exact statute number, not a paraphrased concept.

However, there is a trade-off. Elastic’s March 2024 benchmark tests found that hybrid search introduces 18-25% higher latency compared to single-method search. You are doing twice the work. For real-time chat applications, this overhead must be managed carefully, often through caching or optimized indexing strategies.

Implementation Challenges and Pitfalls

Setting up hybrid search sounds straightforward, but getting the weights right is tricky. Fuzzy Labs notes that integrating two retrieval systems adds 35-50% more development time to your RAG pipeline. You aren’t just adding a feature; you’re managing two distinct data structures.

Here are the biggest hurdles developers face:

1. Determining Optimal Weights: There is no universal setting. Salesforce Trailhead’s October 2024 documentation highlights that Legal applications typically require 80% keyword weighting, while general knowledge domains perform best with 60% semantic weighting. If you get this wrong, you either reintroduce brittleness (too much keyword) or lose precision (too much semantic).

2. Storage Overhead: Creating separate semantic and keyword indexes typically adds 30-40% storage overhead. You are storing the same data twice, in different formats. For large corpora over 1 million documents, this cost adds up quickly.

3. Configuration Complexity: GitHub issue trackers reveal that LangChain users frequently struggle with hybrid search configuration. Issue #7842 (October 2024) highlighted "difficulty determining optimal weights for domain-specific applications" as a top complaint. Many teams default to RRF because it’s less sensitive to parameter tuning, but this might not be optimal for their specific use case.

Best Practices for Production Deployment

If you are building a RAG system in 2026, here is how you should approach hybrid search to avoid common pitfalls.

Start with Reciprocal Rank Fusion (RRF): Unless you have a strong reason to bias toward keywords or semantics, start with RRF. It is robust, requires no score normalization, and performs well out of the box. Most modern vector databases and search engines, including Pinecone and Meilisearch, support RRF natively.

Use Dynamic Weighting Where Possible: Meilisearch announced their "Dynamic Weighting" feature in June 2024, which automatically adjusts semantic/keyword weights based on query characteristics. If your platform supports this, enable it. It allows the system to lean heavily on keywords when it detects acronyms or code, and shift to semantic for natural language questions.
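If your platform has no such feature, a rough version of the idea can live in application code. The sketch below is a hypothetical heuristic, not how any vendor implements it: the two regular expressions and the 0.3 / 0.7 weights are assumptions you would tune for your own data.

```python
import re

# Hypothetical signals that a query needs exact matching.
CODE_LIKE = re.compile(r"\w+\.\w+|[(){}\[\]=_`]")  # e.g. np.dot, foo(), snake_case
ACRONYM_LIKE = re.compile(r"\b(?=\w*[A-Z]\w*[A-Z])\w{2,}\b")  # e.g. COPD, HbA1c


def semantic_weight_for(query: str) -> float:
    """Return the semantic weight; the keyword weight is 1 minus this value."""
    if CODE_LIKE.search(query) or ACRONYM_LIKE.search(query):
        return 0.3  # lean on keywords for code and acronyms
    return 0.7      # lean on semantics for natural-language questions


for q in ["how to use np.dot", "HbA1c reference range", "why do I feel tired after lunch"]:
    print(q, "->", semantic_weight_for(q))
```

The returned value can be fed straight into whatever weighted fusion step you already use.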

Implement Query-Time Fusion: For large-scale deployments, Elastic recommends implementing query-time fusion rather than index-time fusion. This means you keep the indexes separate and combine results only when a query comes in. This reduces storage costs and simplifies index management, though it increases compute load during retrieval.

Tune Based on Your Domain: Run A/B tests on your specific dataset. Create a test set of 100 difficult queries, ideally ones that failed under pure semantic search. Measure how many of those are retrieved correctly with hybrid search. Adjust your weights until you hit the sweet spot between precision and recall.
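One simple way to score such a test set is hit rate at k: the fraction of queries whose expected document shows up in the top k results. The sketch below assumes one expected document per query; the queries, document ids, and result lists are invented for illustration.

```python
def hit_rate_at_k(results: dict[str, list[str]], expected: dict[str, str], k: int = 5) -> float:
    """Fraction of test queries whose expected document appears in the top k."""
    hits = sum(
        1 for query, doc_id in expected.items()
        if doc_id in results.get(query, [])[:k]
    )
    return hits / len(expected)


# Hypothetical test set: query -> the document that should be retrieved.
expected = {
    "np.dot usage": "numpy_dot_docs",
    "HbA1c range": "lab_ref_12",
    "reset password": "faq_7",
}

# Hypothetical ranked results from two retriever configurations.
semantic_only = {
    "np.dot usage": ["linear_algebra_intro", "vectors_101"],
    "HbA1c range": ["diabetes_overview", "lab_ref_12"],
    "reset password": ["faq_7"],
}
hybrid = {
    "np.dot usage": ["numpy_dot_docs", "linear_algebra_intro"],
    "HbA1c range": ["lab_ref_12", "diabetes_overview"],
    "reset password": ["faq_7", "faq_2"],
}

print("semantic only:", hit_rate_at_k(semantic_only, expected, k=1))
print("hybrid:", hit_rate_at_k(hybrid, expected, k=1))
```

Re-running the same measurement after each weight change gives you a consistent number to compare, instead of eyeballing individual answers.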

The Future: Beyond Static Fusion

Hybrid search is evolving. Research from Stanford’s Center for Research on Foundation Models (April 2025) demonstrated "Adaptive Hybrid Retrieval" systems that use LLMs to determine the optimal retrieval strategy per query. Instead of static weights, the LLM analyzes the query intent and decides whether to prioritize exact match or semantic context.

Additionally, tighter integration with re-ranking models is becoming standard. In Fuzzy Labs’ survey, 87% of experts described re-rankers as the "next evolution" of hybrid search. After the hybrid retriever fetches the top 50 chunks, a lightweight cross-encoder model re-scores them for final precision before they are sent to the LLM.
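The re-ranking stage itself is structurally simple, as the sketch below suggests. It assumes the scorer is passed in as a function; `token_overlap` is only a stand-in so the example runs without a model, and in practice you would replace it with a call to a cross-encoder.

```python
from typing import Callable


def rerank(query: str, candidates: list[str],
           score_pair: Callable[[str, str], float], top_n: int = 3) -> list[str]:
    """Re-score each (query, passage) pair and keep the top_n passages."""
    scored = [(score_pair(query, passage), passage) for passage in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [passage for _, passage in scored[:top_n]]


def token_overlap(query: str, passage: str) -> float:
    """Stand-in scorer (assumption): share of query tokens found in the passage."""
    query_tokens = set(query.lower().split())
    passage_tokens = set(passage.lower().split())
    return len(query_tokens & passage_tokens) / len(query_tokens) if query_tokens else 0.0


# Hypothetical chunks returned by the hybrid retriever.
candidates = [
    "billing faq",
    "api rate limits explained",
    "how to rotate and reset an api key",
]
best = rerank("reset api key", candidates, token_overlap, top_n=2)
print(best)
```

Because the scorer sees the query and the passage together, it can afford to be slower and more precise than either first-stage retriever, which is why it only runs on the short candidate list.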

While MIT’s CSAIL lab warned in November 2024 that current hybrid approaches increase pipeline complexity by 3.2x without proportional gains in *general* knowledge domains, the consensus remains clear: for technical, legal, and medical applications, hybrid search is essential. It is the bridge between human-like understanding and machine-like precision.

What is the difference between semantic search and hybrid search?

Semantic search uses vector embeddings to find documents based on meaning and context, ignoring exact wording. Hybrid search combines semantic search with keyword-based search (like BM25) to find documents that match both the meaning and specific terms, such as acronyms or code snippets.

When should I use BM25 in my RAG pipeline?

You should use BM25 when your domain requires exact term matching. This includes technical documentation, legal contracts, medical records, and code repositories. BM25 excels at finding rare terms, proper nouns, and specific identifiers that semantic search might overlook.

What is Reciprocal Rank Fusion (RRF)?

RRF is a method for combining results from different search engines. Instead of averaging raw scores, it looks at the rank of each document in both lists. Documents that appear high in both the semantic and keyword results receive a significantly boosted score, making it a robust way to fuse diverse retrieval methods.

Does hybrid search slow down my application?

Yes, hybrid search typically adds 18-25% latency compared to single-method search because it performs two queries and fuses the results. However, for most RAG applications, this increase is acceptable given the significant boost in accuracy and reduction in hallucinations.

How do I choose the right weight for semantic vs. keyword search?

There is no one-size-fits-all answer. Start with Reciprocal Rank Fusion (RRF) to avoid manual tuning. If you need explicit control, use Simple Weighted Fusion. For technical/legal domains, lean heavier on keywords (e.g., 70-80%). For general conversational AI, lean heavier on semantics (e.g., 60-70%). Test with your specific dataset to find the optimal balance.

Can I use hybrid search with any vector database?

Most modern vector databases like Pinecone, Weaviate, and Qdrant support hybrid search natively. Others, like FAISS or Chroma, may require you to manage the keyword index separately (e.g., using Elasticsearch or OpenSearch) and fuse the results in your application code using libraries like LangChain or LlamaIndex.

- May 13, 2026

- Collin Pace
