Hyperparameters That Matter Most in Large Language Model Pretraining

When training a large language model, not all hyperparameters are created equal. Some make a massive difference. Others? You can tweak them all day and barely notice a change. After analyzing over 3,700 model configurations and processing 100 trillion tokens across millions of GPU hours, researchers have pinpointed the learning rate and batch size as the two hyperparameters that dominate training outcomes. Get these right, and your model converges faster, performs better, and costs less. Get them wrong, and you waste weeks - or worse, end up with a broken model.

Why Learning Rate Is the Single Most Important Hyperparameter

The learning rate isn’t just important - it’s the make-or-break factor. Too high, and your training explodes. Too low, and it crawls for days without making progress. The breakthrough came with the Step Law framework, published in March 2025. It showed that the optimal learning rate doesn’t depend on model size alone. It’s a function of both the number of parameters (N) and the size of your training dataset (D).

The formula? η ∝ N^(-0.11) × D^(0.05). That means as your model grows bigger, you need to lower the learning rate - but not as much as you’d think. Meanwhile, as your dataset grows, you can afford to raise it slightly. For example, a 7B parameter model trained on 100 billion tokens needs a learning rate around 1.2e-4. Increase the model to 70B, and you drop it to roughly 8.5e-5. But if you double the dataset to 200 billion tokens, you can raise it back up to 9.8e-5.

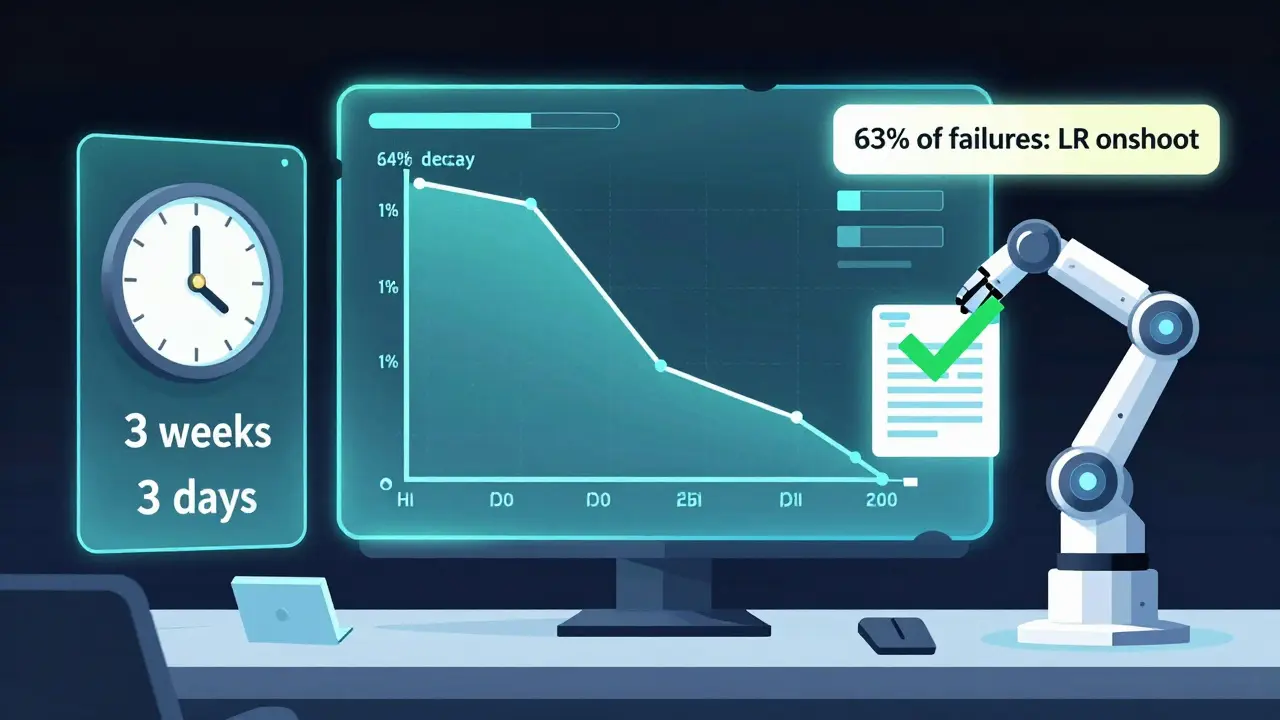

Why does this matter? Because even a 5% deviation from the optimal learning rate can increase your final perplexity by 0.2 to 0.5 points. In practical terms, that’s the difference between a model that writes coherent paragraphs and one that stutters. MoE (Mixture-of-Experts) models are even more sensitive - their optimal learning rates vary by up to 37% depending on how many experts are active at once. Most teams still guess this number. That’s why 63% of failed training runs on GitHub issues cite learning rate overshoot as the root cause.

Batch Size: It’s Not About Model Size - It’s About Data

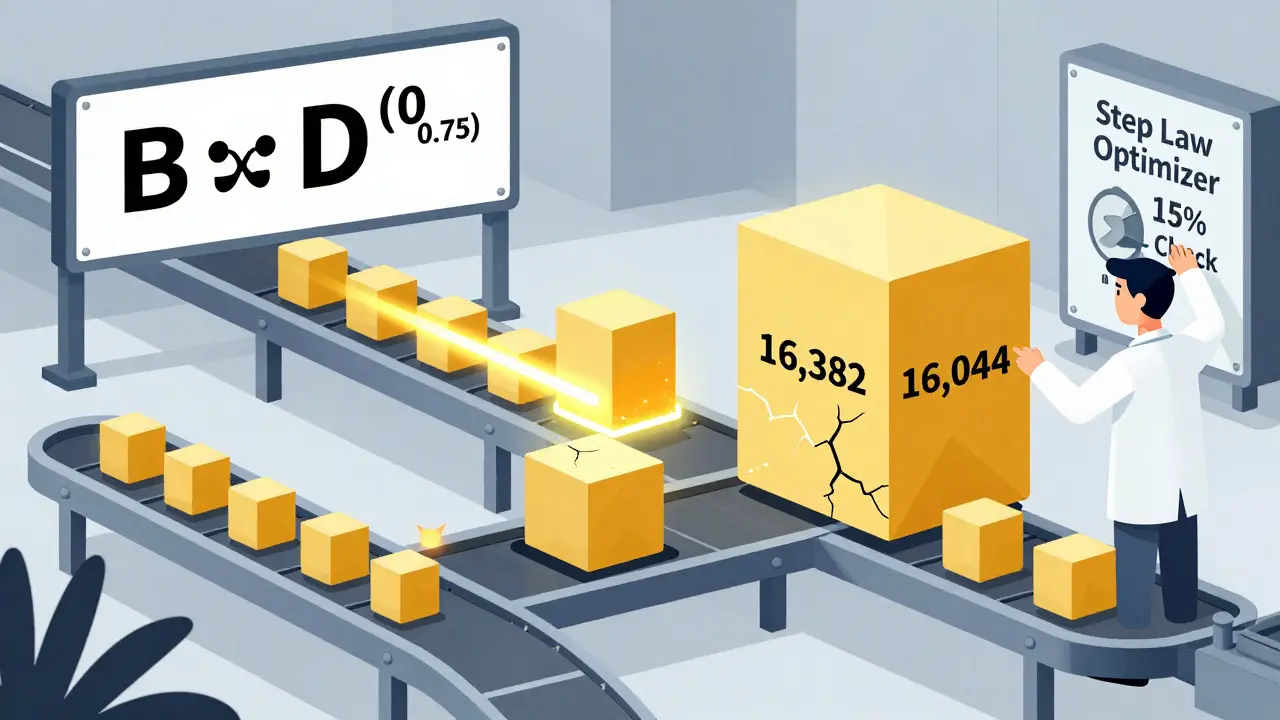

For years, people assumed batch size should scale linearly with model size. If you double the parameters, double the batch. That’s wrong. The Step Law research found that batch size depends almost entirely on dataset size: B ∝ D^(0.75).

Here’s what that looks like in practice:

- For a 7B model on a 100B-token dataset: optimal batch size = 2,048 tokens

- For a 70B model on the same dataset: optimal batch size = 8,192 tokens

Wait - the model got 10x bigger, but the batch size only went up 4x? Yes. Because the dataset didn’t change. The model just got more complex. It needs more data per step to stabilize its gradients, but not proportionally more. This is why earlier methods failed. Kaplan’s 2020 scaling laws ignored dataset size entirely. Teams applying them saw 23-31% higher training loss when working with non-standard data like code or multilingual text.

And here’s the kicker: using too large a batch size doesn’t just slow training - it can hurt final performance. Why? Because large batches reduce gradient noise, which acts as a form of regularization. Too little noise, and the model gets stuck in sharp minima. The sweet spot isn’t the biggest batch your GPU can handle. It’s the one that matches your data size.

The Hidden Trap: Learning Rate Scheduling

Most people think the learning rate is set once and then slowly decays. That’s what most frameworks do by default. But research shows that’s a recipe for failure.

Traditional decay schedules - like reducing the learning rate to 1% of its peak value - cause 12-18% higher final loss. Why? Because when you start with a high peak rate (which you should, to accelerate early training), dropping it too low at the end prevents the model from fine-tuning properly. The model needs to keep nudging itself toward the best solution, even at the last step.

The fix? Use a fixed minimum learning rate. Instead of decaying to 1% of peak, set it to 10% of peak and leave it there. This small change cuts final perplexity by 0.3-0.6 points across benchmarks. It’s not a complex tweak. It’s just a setting you change in your config file. And yet, 87% of open-source training scripts still use the old decay pattern.

Why Grid Search Is Dead (and What Replaced It)

Remember when teams ran 200 different hyperparameter combinations just to find a decent setup? That took 15,000-20,000 GPU hours for a 7B model. It cost tens of thousands of dollars. And even then, you weren’t guaranteed to find the best one.

Step Law changed that. Instead of testing hundreds of configs, you calculate the optimal learning rate and batch size from the formula. Then you test three values: optimal, optimal +15%, and optimal -15%. That’s it. Total cost? 1,500-2,000 GPU hours. A 90% reduction.

Teams that adopted this approach cut their tuning time from 3-4 weeks to 2-3 days. And their final perplexity improved by 4-7%. Reddit users in r/MachineLearning reported the same thing. One engineer wrote: “I used to spend a month tuning. Now I run a script, wait 3 hours, and ship.”

The tools are catching up, too. Optuna 4.2, released in April 2025, now has built-in Step Law integration. Hugging Face’s community library has 87 documented case studies showing how to apply it across 8 different architectures - from Llama 3 to Mixtral. Meanwhile, the official Hugging Face docs still only mention “start with 1e-4 and adjust.” That’s not enough.

What About Other Hyperparameters?

Yes, other parameters matter - but not like these two. Weight decay, dropout, optimizer choice, and even the number of layers? They’re secondary. The Step Law study showed learning rate and batch size account for 68-75% of the variance in final performance across 15 benchmark tasks.

Model depth? It’s not a hyperparameter you tune - it’s a design choice. Each transformer layer adds 0.5-1.2 billion parameters. So if you go from 24 to 32 layers, you’re not just changing depth - you’re changing N, which directly affects your learning rate via the formula. You can’t treat depth separately.

And don’t get fooled by “automated” tools. Bayesian optimization and LLM-assisted tuning have their place, but they’re not replacements. They’re supplements. LLM-assisted tuning outperforms random search by 12-18%, but it still needs a smart starting point. Step Law gives you that. It’s the foundation.

Real-World Pitfalls and What Experts Warn About

Even with Step Law, you’re not off the hook. Dr. Emily Chen from Stanford’s NLP Group warned in her ACL 2025 keynote: “Scaling laws assume homogeneous data.” If you’re training on medical reports, legal documents, or code - not just web text - your optimal learning rate might be 15-25% different.

That’s why the best teams don’t blindly trust the formula. They use it as a starting point, then run a 3-point check. If you’re training on a domain-specific corpus, test ±15% around the predicted value. You’ll often find the best result is slightly higher or lower.

Also, watch out for hardware. Step Law was validated across 12 different GPU platforms, from H800s to A100s. But if you’re using consumer-grade cards or cloud instances with inconsistent memory bandwidth, your effective batch size might vary. Always monitor actual tokens per second during training. Don’t assume your config matches your reality.

The Future: Automation Is Coming - But You Still Need to Understand the Basics

Google DeepMind’s AutoStep, released in May 2025, cuts search cost by 92% by learning how scaling laws change across architectures. It’s impressive. But here’s the truth: no automation replaces understanding. If you don’t know why learning rate scales with N^(-0.11), you won’t know when to override the system.

Regulators are catching up too. The EU AI Office now requires documentation of hyperparameter selection for models over 10B parameters. Why? Because bad configurations can lead to unsafe outputs, unstable behavior, or biased results. You can’t just say “we used the default.” You need to show your math.

By 2028, most teams will automate this entirely. But right now? You’re still in charge. And if you want to train a model that performs, scales, and doesn’t waste millions in compute - you need to master these two numbers.

What are the two most important hyperparameters in LLM pretraining?

The two most important hyperparameters are learning rate and batch size. Together, they account for 68-75% of the variance in final model performance across benchmarks. Learning rate controls how aggressively the model updates its weights, while batch size determines how much data is processed before each update. Getting these wrong leads to slow training, instability, or poor final accuracy.

How do I calculate the optimal learning rate for my LLM?

Use the Step Law formula: η ∝ N^(-0.11) × D^(0.05), where N is the number of model parameters and D is the size of your training dataset in tokens. For example, a 7B parameter model trained on 100 billion tokens should use a learning rate around 1.2e-4. You can plug your values into this formula to get a starting point, then test ±15% around it for fine-tuning.

Should I increase batch size as my model gets larger?

No. Batch size depends primarily on dataset size, not model size. The formula is B ∝ D^(0.75). For a 100B-token dataset, a 7B model works best with a batch size of 2,048 tokens, while a 70B model needs 8,192. Increasing batch size just because the model is bigger can hurt performance by reducing gradient noise, which helps the model escape poor local minima.

Is it better to use learning rate decay or a fixed minimum learning rate?

Use a fixed minimum learning rate. Traditional decay schedules that reduce the rate to 1% of its peak value cause 12-18% higher final loss. Instead, set the minimum to about 10% of your peak learning rate and keep it there. This allows the model to continue fine-tuning in the final stages without getting stuck in suboptimal solutions.

Can I trust automated hyperparameter tuning tools for LLMs?

Automated tools like Bayesian optimization or LLM-assisted tuning can help, but they work best when you give them a smart starting point. Step Law provides that. Use it to calculate your initial learning rate and batch size, then let the automation explore nearby values. Blind automation without this foundation often leads to wasted compute and unreliable results.

Do scaling laws work for domain-specific data like code or medical text?

Scaling laws give you a strong starting point, but they assume homogeneous data. For domain-specific corpora - like code, legal documents, or scientific papers - optimal learning rates can deviate by 15-25%. Always validate by testing a small grid around the predicted value. Don’t assume the formula works perfectly out of the box.

- Mar, 8 2026

- Collin Pace

- 6

- Permalink

Written by Collin Pace

View all posts by: Collin Pace