Prompt Length vs Output Quality: The Hidden Tradeoffs in LLM Decoding

More context doesn't always mean better answers. In fact, feeding a Large Language Model (LLM) too much information often makes it dumber. It sounds counterintuitive, but research confirms that as prompt length increases, output quality frequently drops. This isn't just a minor glitch; it's a fundamental limitation of how these models process data. If you are building AI applications, ignoring this tradeoff can lead to slower responses, higher costs, and hallucinated results.

The industry used to assume that if a model could read 100,000 tokens, it should be given all 100,000 tokens for complex tasks. That assumption is dead. Today’s top performers like GPT-4, Claude 3, and Gemini 1.5 Pro have massive technical capacity, but their reasoning abilities degrade long before they hit those limits. Understanding where that breaking point lies is the single most important skill for anyone working with generative AI right now.

The "Noise" Threshold: When Context Becomes a Liability

You might think that providing every detail ensures the model has what it needs. But LLMs don't work like search engines. They predict the next word based on patterns in the input. When you flood them with irrelevant text, you dilute the signal. A 2023 study by Stanford University and Google AI researchers found that performance degradation begins around 3,000 tokens. This is well below the maximum context windows of modern models, which can exceed 100,000 tokens.

The decline is linear and predictable. Research from PromptPanda in 2023 showed that accuracy drops by approximately 5 percentage points for every additional 500 tokens beyond the optimal range. At 500 tokens, reasoning accuracy sits at 95%. By 2,000 tokens, it falls to 80%. At 3,000 tokens, it hits 70%. This isn't about the model forgetting information; it's about the model struggling to prioritize relevant data amidst a sea of noise.
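The figures quoted above fit a straight line, which can be written out directly. The following is a minimal sketch of that arithmetic only, assuming the 95% baseline at 500 tokens and the 5-point drop per 500 tokens hold across the whole range. The function name and the clamp at zero are illustrative and not part of the cited research.

```python
def estimated_accuracy(tokens: int) -> float:
    """Linear estimate from the figures quoted above: 95% at 500 tokens,
    minus 5 percentage points for every 500 tokens beyond that."""
    baseline_tokens = 500
    baseline_accuracy = 95.0

    extra = max(0, tokens - baseline_tokens)
    drop = extra * 5.0 / 500  # 5 points per 500 extra tokens
    # Clamp at zero so the illustrative line never goes negative.
    return max(0.0, baseline_accuracy - drop)


for n in (500, 1000, 2000, 3000):
    print(n, estimated_accuracy(n))
```

The loop reproduces the 95%, 90%, 80%, and 70% values cited in this article. Treat the line as a rough planning aid rather than a prediction for any particular model.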

This phenomenon creates a "Goldilocks" zone for prompt engineering. Too little context, and the model lacks necessary instructions. Too much, and it gets confused. The sweet spot varies by task, but for general reasoning, staying under 2,000 tokens is often the safest bet. Dr. Percy Liang from Stanford’s Center for Research on Foundation Models put it bluntly in his 2024 NeurIPS keynote: "Beyond 2,000 tokens, we're not giving models more context; we're giving them more noise to filter through."

Why Attention Mechanisms Struggle with Length

To understand why longer prompts hurt performance, you need to look at how attention works. Transformers use self-attention to weigh the importance of each token relative to every other token, so the cost of this step scales quadratically with the number of tokens. Doubling your prompt length doesn't double the attention workload; it roughly quadruples it.
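The quadratic relationship is easy to check by counting. This sketch counts only the pairwise attention scores for a single head in a single layer; real latency also depends on the other layers, caching, and hardware, so the function is an illustration rather than a cost model.

```python
def attention_score_count(num_tokens: int) -> int:
    """Self-attention compares every token with every token, so one head
    in one layer computes num_tokens * num_tokens scores."""
    return num_tokens * num_tokens


short_prompt, long_prompt = 1_000, 2_000
ratio = attention_score_count(long_prompt) / attention_score_count(short_prompt)
print(ratio)  # 4.0
```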

PromptLayer’s 2024 benchmarking revealed that doubling prompt tokens from 1,000 to 2,000 increased processing time by 2.3x for GPT-4-turbo. Extending to 4,000 tokens caused a 5.1x latency increase. As the sequence grows, the model struggles to maintain focus on critical instructions, especially those buried deep in the text. This leads to two major issues: recency bias and attention dilution.

Recency bias means the model disproportionately weights tokens appearing later in the sequence. In a 10,000-token prompt, critical instructions placed in the first 20% received only 12-18% of the model's attention allocation, according to PromptLayer testing. Meanwhile, attention dilution occurs because the fixed "attention budget" is spread thinner across more tokens, so each individual piece of information receives, on average, a smaller share of it.
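Attention dilution follows from how softmax normalizes scores: the weights always sum to 1, so adding tokens shrinks the share available to each. The toy model below assumes a single important token with a fixed score advantage (`key_logit`, an arbitrary illustrative value) and a score of 0 for every other token. Real models learn their scores, so this shows the direction of the effect, not its size.

```python
import math


def weight_on_key_token(num_tokens: int, key_logit: float = 5.0) -> float:
    """Softmax weight given to one 'important' token whose score is key_logit
    when every other token has score 0. All weights together sum to 1."""
    key = math.exp(key_logit)
    others = (num_tokens - 1) * math.exp(0.0)
    return key / (key + others)


for n in (500, 2_000, 10_000):
    print(n, round(weight_on_key_token(n), 3))
```

Even with a large score advantage, the share going to the important token keeps falling as the prompt grows.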

| Token Count | Estimated Accuracy | Latency Impact | Hallucination Risk |
|---|---|---|---|
| 500 | 95% | Baseline | Low |
| 1,000 | 90% | +1.2x | Low |
| 2,000 | 80% | +2.3x | Moderate |
| 3,000 | 70% | +4.0x | High |
| 4,000+ | <65% | +5.1x+ | Very High |

Model Variations: Not All LLMs Are Equal

While the general trend holds true, different models handle length differently. Open-weight models sometimes show surprising resilience. Research by Goldberg et al. in August 2024 found that Llama 3 70B experienced only a 3% accuracy drop between 1,000 and 2,000 tokens, outperforming proprietary counterparts in stability. However, even Llama 3 couldn't escape the degradation curve entirely.

Google’s Gemini 1.5 Pro maintains higher accuracy at 2,000 tokens (88%) compared to GPT-4-turbo (82%), according to MLPerf testing in Q1 2025. Yet, both models exhibit similar degradation beyond that threshold. Anthropic’s internal testing identified 1,800 tokens as the "sweet spot" for Claude 3, with performance declining at 2.3% per additional 100 tokens after that point.

Even advanced techniques like Chain-of-Thought (CoT) prompting fail to fully mitigate these issues. CoT improved reasoning accuracy by 19% at 1,000 tokens but provided only 6% improvement at 2,500 tokens. The underlying architectural bottleneck remains. No amount of parameter scaling or clever prompting can completely overcome the quadratic complexity of attention mechanisms.

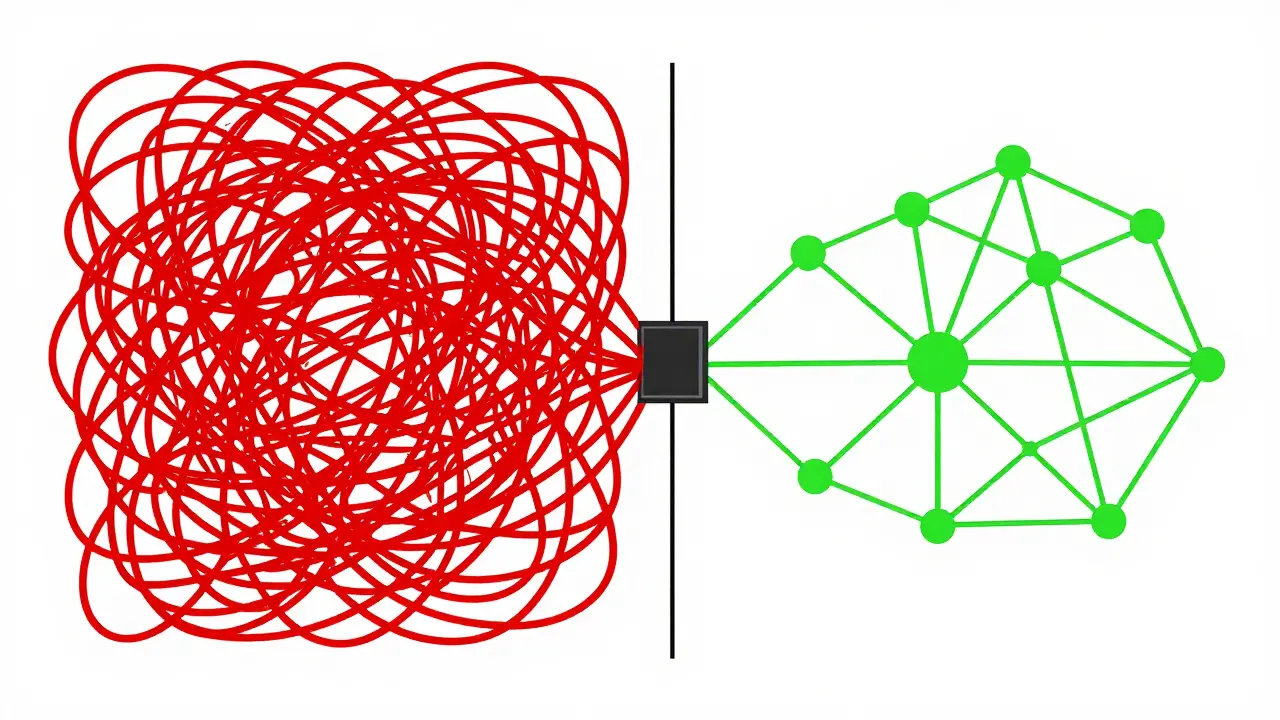

The Rise of Retrieval-Augmented Generation (RAG)

If brute-force context dumping doesn't work, what does? The answer is Retrieval-Augmented Generation (RAG). Instead of stuffing an entire document into the prompt, RAG retrieves only the most relevant snippets and feeds them to the model. This approach keeps the prompt concise while preserving access to vast amounts of information.
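The retrieve-then-prompt idea can be sketched in a few lines. This version ranks chunks by bag-of-words cosine similarity, which stands in for the embedding model and vector database a production system would normally use. The helper names, the sample chunks, and the prompt template are all hypothetical.

```python
import math
import re
from collections import Counter


def _bag_of_words(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = _bag_of_words(query)
    ranked = sorted(chunks, key=lambda c: _cosine(q, _bag_of_words(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Put only the retrieved chunks into the prompt, not the whole corpus."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


chunks = [
    "A refund is issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Shipping to Europe takes 5 to 7 business days.",
    "A refund requires the original receipt.",
]
print(build_prompt("How long does a refund take?", chunks))
```

Only the two refund-related chunks reach the prompt; the office-hours and shipping lines are filtered out before the model ever sees them.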

A PromptLayer case study showed that a well-structured 16K-token RAG implementation outperformed a monolithic 128K-token prompt by 31% in accuracy. It also reduced latency by 68%. This suggests that strategic context management can beat raw volume. By filtering out noise before it reaches the model, you allow the attention mechanism to focus on high-value signals.

RAG isn't perfect, though. It requires additional infrastructure for vector databases and retrieval logic. But for enterprise applications, the tradeoff is worth it. An Altexsoft case study in 2023 demonstrated that appropriate prompt length optimization via RAG reduced cloud computing costs by 37% while improving output accuracy by 22% in customer service chatbots. For businesses, that’s a direct impact on the bottom line.

Practical Strategies for Optimizing Prompt Length

You don’t need to guess your ideal prompt length. Start with empirical testing. The MLOps Community’s Prompt Engineering Guide recommends starting with 500-700 tokens for simple classification tasks and 800-1,200 tokens for complex reasoning. Never exceed 2,000 tokens without rigorous validation.
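Those starting ranges can be turned into a simple pre-flight check. The sketch below estimates tokens at roughly 4 characters each, a crude rule of thumb for English text that is an assumption here; in practice you would count with the target model's own tokenizer. The task labels and function names are illustrative.

```python
# Starting ranges quoted above, in tokens. The task labels are illustrative.
STARTING_RANGES = {
    "simple_classification": (500, 700),
    "complex_reasoning": (800, 1200),
}
VALIDATION_THRESHOLD = 2000  # beyond this, validate rigorously first


def rough_token_count(text: str) -> int:
    """Very rough estimate: about 4 characters per token for English text.
    Assumption for illustration; use the model's tokenizer in practice."""
    return max(1, len(text) // 4)


def check_prompt(text: str, task: str) -> str:
    low, high = STARTING_RANGES[task]
    tokens = rough_token_count(text)
    if tokens > VALIDATION_THRESHOLD:
        return f"{tokens} tokens: over {VALIDATION_THRESHOLD}, validate rigorously"
    if tokens > high:
        return f"{tokens} tokens: above the {low}-{high} starting range"
    if tokens < low:
        return f"{tokens} tokens: below the {low}-{high} starting range"
    return f"{tokens} tokens: within the {low}-{high} starting range"


print(check_prompt("word " * 800, "complex_reasoning"))
```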

Here are three actionable steps to optimize your prompts:

- Iterative Pruning: Start with your full context and remove sentences one by one. Test the output after each removal. If the quality doesn't drop, keep the cut; if it does, restore the sentence. Most developers spend 3-5 hours mastering this technique, but it yields immediate improvements.

- Repeat Critical Instructions: To combat recency bias, place key instructions at both the beginning and the end of your prompt. Hacker News discussions in March 2025 revealed that 73% of developers had seen models ignore instructions due to recency bias in long prompts. Repetition helps anchor the model’s focus.

- Use Automated Tools: Tools like PromptLayer’s "PromptOptimizer" feature automate length testing. In January 2025, 83% of users achieved optimal results within 2-3 iterations using this tool. Don’t rely on intuition alone.
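The first two steps above can be sketched as plain functions. `prune_context` takes a caller-supplied `score` function, which is hypothetical here: in a real workflow it would run the prompt against your model and grade the output. `toy_score` only checks that two facts survive, so the example runs without any API calls.

```python
from typing import Callable


def prune_context(
    sentences: list[str],
    score: Callable[[list[str]], float],
    tolerance: float = 0.0,
) -> list[str]:
    """Drop sentences one at a time, keeping each cut only if the score
    does not fall more than `tolerance` below the starting score."""
    kept = list(sentences)
    baseline = score(kept)
    i = 0
    while i < len(kept):
        candidate = kept[:i] + kept[i + 1:]
        if candidate and score(candidate) >= baseline - tolerance:
            kept = candidate  # quality held, so keep the cut
        else:
            i += 1  # quality dropped, so keep the sentence and move on
    return kept


def sandwich(instruction: str, context: str) -> str:
    """Place the key instruction at both the start and the end of the prompt."""
    return f"{instruction}\n\n{context}\n\n{instruction}"


def toy_score(sents: list[str]) -> float:
    """Stand-in for a real evaluation: rewards keeping the two key facts."""
    text = " ".join(sents)
    return float("refund" in text) + float("14 days" in text)


context = [
    "Welcome to our store.",
    "A refund is available for any order.",
    "We were founded in 1999.",
    "Requests must arrive within 14 days.",
]
pruned = prune_context(context, toy_score)
print(sandwich("Answer in one sentence.", " ".join(pruned)))
```

A nonzero `tolerance` trades a small quality loss for a shorter prompt, which can be worthwhile when the evaluation itself is noisy.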

Documentation quality matters here. OpenAI’s guidelines receive high marks for clarity, while Meta’s Llama documentation scores lower for prompt engineering guidance. Lean on community resources like PromptPanda’s "Prompt Length Calculator," used by over 47,000 developers, to determine optimal lengths based on specific model parameters.

Exceptions to the Rule

Are there cases where longer prompts actually help? Yes, but they are rare. Specialized tasks requiring extensive cross-referencing, such as legal contract analysis or medical documentation, may benefit from context lengths of 32,000+ tokens. A Nature study in April 2025 noted marginal benefits in healthcare LLM applications for highly specialized scenarios.

However, these represent only about 8% of tested scenarios, according to PromptLayer’s usage analytics. For the vast majority of use cases (customer support, content generation, code assistance), the shorter-is-better rule applies. Even in legal contexts, RAG often outperforms monolithic prompts because it allows the model to focus on specific clauses rather than scanning thousands of pages blindly.

What is the optimal prompt length for most LLM tasks?

For most reasoning and classification tasks, the optimal prompt length is between 800 and 1,200 tokens. Performance typically degrades significantly beyond 2,000 tokens, so aim to stay under that threshold unless empirical testing proves otherwise for your specific use case.

Why does increasing prompt length reduce output quality?

Increasing prompt length reduces quality due to attention dilution and recency bias. As the number of tokens grows, the model's attention mechanism spreads its focus thinner across all inputs, causing it to miss critical details. Additionally, models tend to overweight recent information, ignoring earlier instructions.

How does RAG improve upon long prompts?

Retrieval-Augmented Generation (RAG) improves performance by retrieving only the most relevant snippets of data and feeding them to the model. This keeps the prompt concise, reducing noise and latency while maintaining access to large datasets. In one PromptLayer case study, a 16K-token RAG implementation outperformed a monolithic 128K-token prompt by 31% in accuracy.

Do all LLMs suffer from the same prompt length limitations?

Most LLMs follow a similar degradation curve, but there are nuances. Open-weight models like Llama 3 70B show slightly better stability at mid-lengths, and among proprietary models, Gemini 1.5 Pro holds higher accuracy at 2,000 tokens than GPT-4-turbo. However, no current model escapes the fundamental bottleneck of quadratic attention complexity.

Can Chain-of-Thought prompting fix long-prompt issues?

Chain-of-Thought (CoT) prompting helps with reasoning but cannot fully mitigate length-related degradation. While CoT improves accuracy by 19% at 1,000 tokens, its benefit drops to just 6% at 2,500 tokens. It addresses logical gaps, not attention bottlenecks.

- May 8, 2026

- Collin Pace

- Tags:

- prompt length optimization

- LLM decoding tradeoffs

- context window limits

- prompt engineering best practices

- retrieval-augmented generation
