Tag: LLM bias

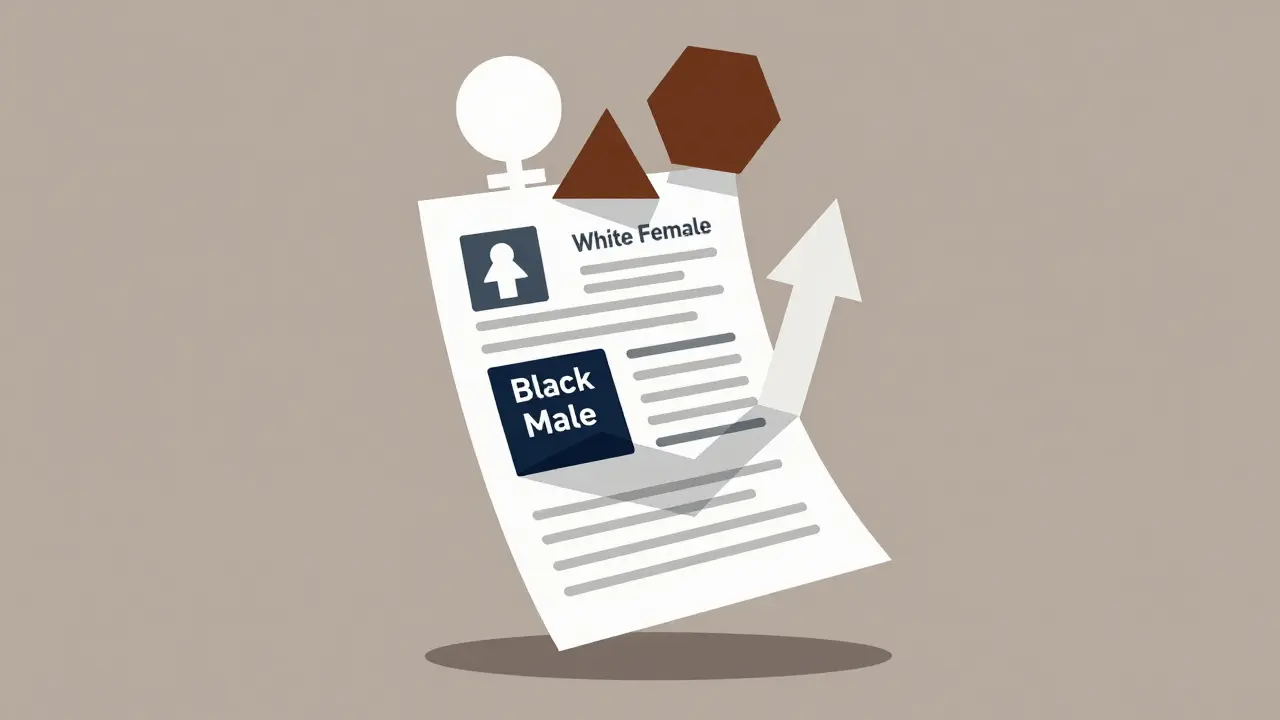

How to Measure Gender and Racial Bias in Large Language Model Outputs

Large language models show measurable gender and racial bias in hiring simulations, favoring white women and penalizing Black men. Despite debiasing efforts, these biases persist, and they have real consequences for employment outcomes.

- Mar 3, 2026

- Collin Pace
