How LLM Attention Works: Key, Query, and Value Projections Explained

When you ask an AI to summarize a long article or write code, it doesn't just read the text linearly like a human might. It looks at every word simultaneously, deciding which parts matter most for the current task. This ability comes from a specific mathematical structure inside Large Language Models (LLMs) called the attention mechanism, which relies on three critical components: Key, Query, and Value projections that allow the model to understand context dynamically. These matrices are not just abstract math; they are the engine that drives modern AI's reasoning capabilities.

Understanding what these matrices learn is crucial for anyone working with transformer-based models. They transform raw text into meaningful relationships, allowing the model to connect distant ideas, resolve pronouns, and maintain coherence across thousands of words. Without this system, AI would struggle with basic language tasks, losing track of meaning as sentences grow longer.

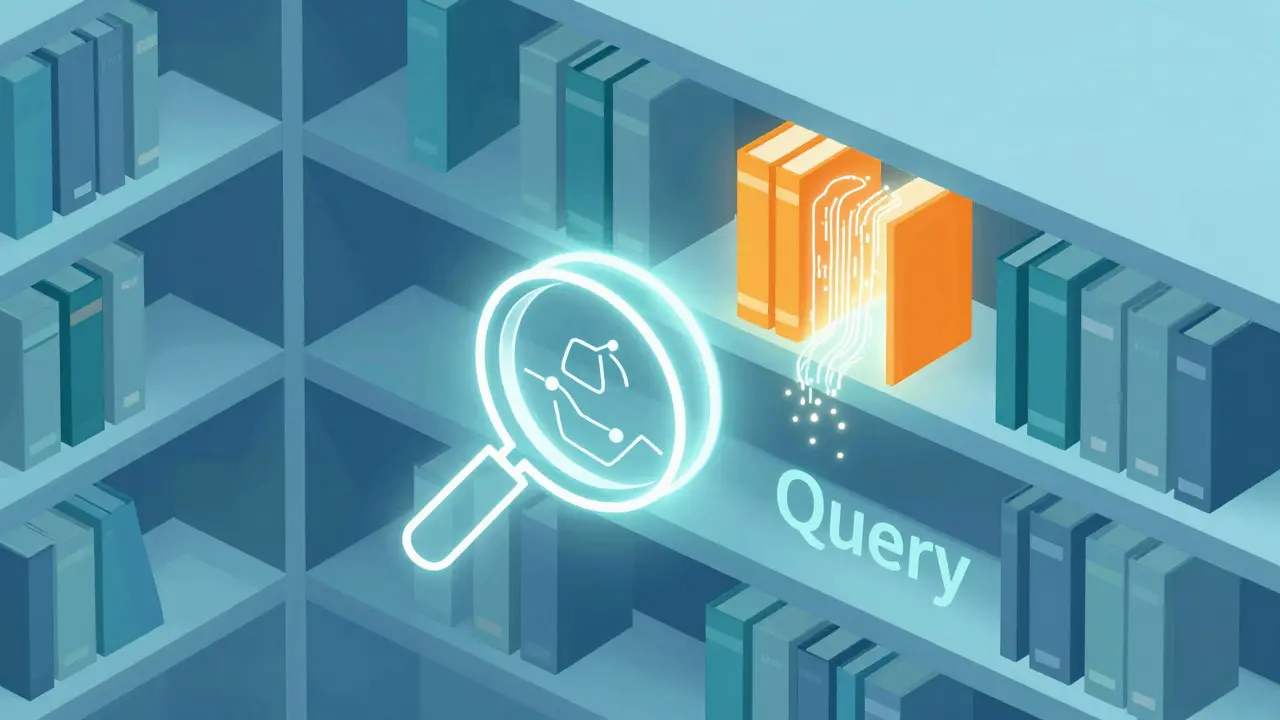

The Database Lookup Analogy

To grasp how Key, Query, and Value (QKV) work, imagine a massive library where every book represents a word in your input sentence. You want to find information relevant to a specific concept. In this analogy:

- The Query (Q) is your search request. It asks, "What am I looking for right now?" For example, if the current word is "bank," the query might be searching for clues about whether it means a financial institution or a river edge.

- The Key (K) is the metadata tag on each book. It answers, "How do I identify myself?" The word "river" has a key that matches the "river bank" query, while "money" matches the "financial bank" query.

- The Value (V) is the actual content inside the book. Once the model finds a match between Query and Key, it retrieves the Value: the semantic information needed to update its understanding of the current word.

This separation of concerns allows the model to perform complex lookups efficiently. Instead of reading every word fully to see if it's relevant, the model compares lightweight Keys against the Query to score each candidate, then blends the Values in proportion to those scores so that the best matches dominate the result. This process repeats for every token, in every attention head and layer, on every forward pass.
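
One difference from a literal library lookup is that attention is soft: every book contributes a little, weighted by how well its Key matches the Query. The sketch below illustrates that idea with made-up 2-dimensional vectors for the "bank" example; the numbers and the helper names `softmax` and `dot` are assumptions for illustration, not values from a real model.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

# Hypothetical keys and values for three context words (made-up numbers).
keys = {"river": [1.0, 0.0], "money": [0.0, 1.0], "the": [0.1, 0.1]}
values = {"river": [5.0, 0.0], "money": [0.0, 5.0], "the": [0.0, 0.0]}

# A hypothetical query for "bank" that leans toward the "river" direction.
query = [4.0, 0.0]

words = list(keys)
weights = softmax([dot(query, keys[w]) for w in words])

# Soft lookup: every value contributes in proportion to its weight.
retrieved = [
    sum(wt * values[w][i] for wt, w in zip(weights, words))
    for i in range(2)
]

for w, wt in zip(words, weights):
    print(f"{w}: {wt:.3f}")
print("retrieved:", [round(x, 3) for x in retrieved])
```

With these numbers the "river" entry receives most of the weight, so the retrieved vector ends up close to the "river" value while still containing a small trace of the others.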

Mathematical Foundations of QKV Projections

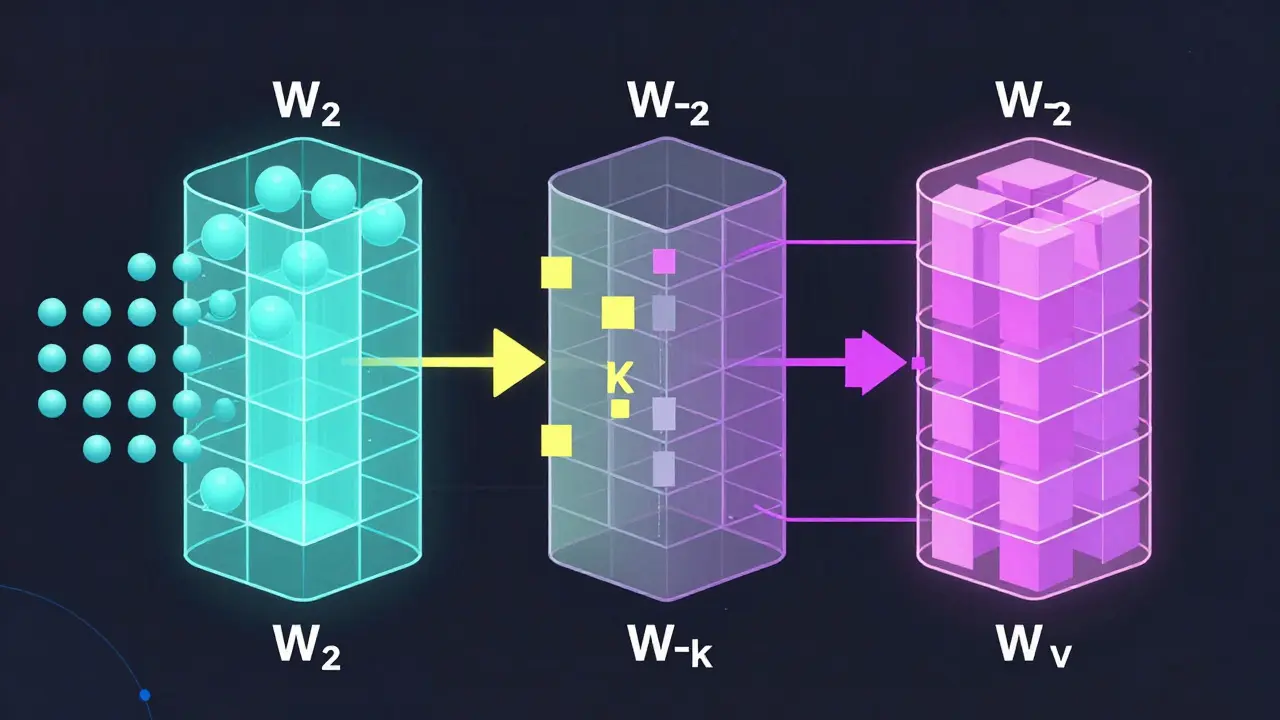

At the core of this mechanism are learned weight matrices. When text enters an LLM, it first becomes embeddings: numerical vectors representing each token. These embeddings are then projected through three separate linear transformations using weight matrices denoted as $W_q$, $W_k$, and $W_v$.

Each matrix serves a distinct purpose:

- $W_q$ (Query Weight Matrix): Transforms the input embedding into a vector that emphasizes dimensions useful for searching relationships. It learns which aspects of a word’s meaning should be used to probe other words.

- $W_k$ (Key Weight Matrix): Projects the input into a searchable space. It encodes distinctive characteristics so that dot products with Queries can reveal semantic or syntactic similarities.

- $W_v$ (Value Weight Matrix): Carries the actual informational content. These vectors are optimized to be combined via weighted averages based on attention scores.
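
The three projections can be sketched in a few lines of plain Python. This is a minimal illustration, assuming tiny hypothetical sizes (3 tokens, 4-dimensional embeddings, 2-dimensional projections) and random numbers standing in for trained weights; `random_matrix` and `matmul` are helper names introduced here.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible

def random_matrix(rows, cols):
    """Stand-in for a learned matrix: Gaussian noise instead of trained weights."""
    return [[random.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

def matmul(A, B):
    """Multiply matrix A (n x m) by matrix B (m x p) using plain lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

# Hypothetical sizes: 3 tokens, 4-d embeddings, 2-d queries/keys/values.
n_tokens, d_model, d_head = 3, 4, 2

X = random_matrix(n_tokens, d_model)  # one embedding row per token
W_q = random_matrix(d_model, d_head)
W_k = random_matrix(d_model, d_head)
W_v = random_matrix(d_model, d_head)

# The same embeddings are projected three different ways.
Q = matmul(X, W_q)
K = matmul(X, W_k)
V = matmul(X, W_v)

print(len(Q), len(Q[0]))  # 3 2: one 2-d query per token
```

Because each matrix is separate, the same token gets three different vectors, one for each role it plays in the lookup.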

The computation begins by calculating the dot product between the Query vector of one token and all Key vectors in the sequence. Mathematically, this is represented as $QK^T$. To prevent these values from growing too large, which could destabilize training, the results are divided by $\sqrt{d}$ (equivalently, multiplied by $\frac{1}{\sqrt{d}}$), where $d$ is the dimension of the Query and Key vectors. This scaling factor, introduced in the original 2017 Transformer paper by Vaswani et al., ensures numerical stability during gradient descent.

After scaling, a softmax function converts these dot products into normalized attention weights that sum to 1. These weights determine how much each Value vector contributes to the final output representation for the current token.
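
Putting the steps together (dot products, scaling, softmax, weighted sum of Values) gives scaled dot-product attention. The following is a minimal sketch on hand-picked 2x2 matrices, not an optimized implementation; the function name `scaled_dot_product_attention` and the example numbers are chosen here for illustration.

```python
import math

def softmax(row):
    """Normalize one row of scores into weights that sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(A, B):
    """Multiply matrix A (n x m) by matrix B (m x p) using plain lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(M):
    return [list(col) for col in zip(*M)]

def scaled_dot_product_attention(Q, K, V):
    """Return (output, weights) for softmax(Q K^T / sqrt(d)) V."""
    d = len(Q[0])                        # dimension of query/key vectors
    scores = matmul(Q, transpose(K))     # one score per (query, key) pair
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, V), weights   # weighted average of value rows

# Two tokens whose queries each match their own key best.
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]

output, weights = scaled_dot_product_attention(Q, K, V)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1, and each output row is a blend of the Value rows that lies between them.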

| Component | Role | Analogy | Learning Objective |
|---|---|---|---|
| Query (Q) | Search Request | Library Search Term | Emphasize relevant dimensions for relationship detection |
| Key (K) | Metadata/Identity | Book Index Tag | Encode distinctive traits for discoverability |
| Value (V) | Content Payload | Book Content | Carry semantic info for weighted combination |

What the Matrices Learn During Training

During training, the QKV matrices don’t just memorize patterns; they develop specialized functions. Through backpropagation, the model adjusts $W_q$, $W_k$, and $W_v$ to minimize prediction errors. Over time, these matrices learn to map each token to a context-aware representation.

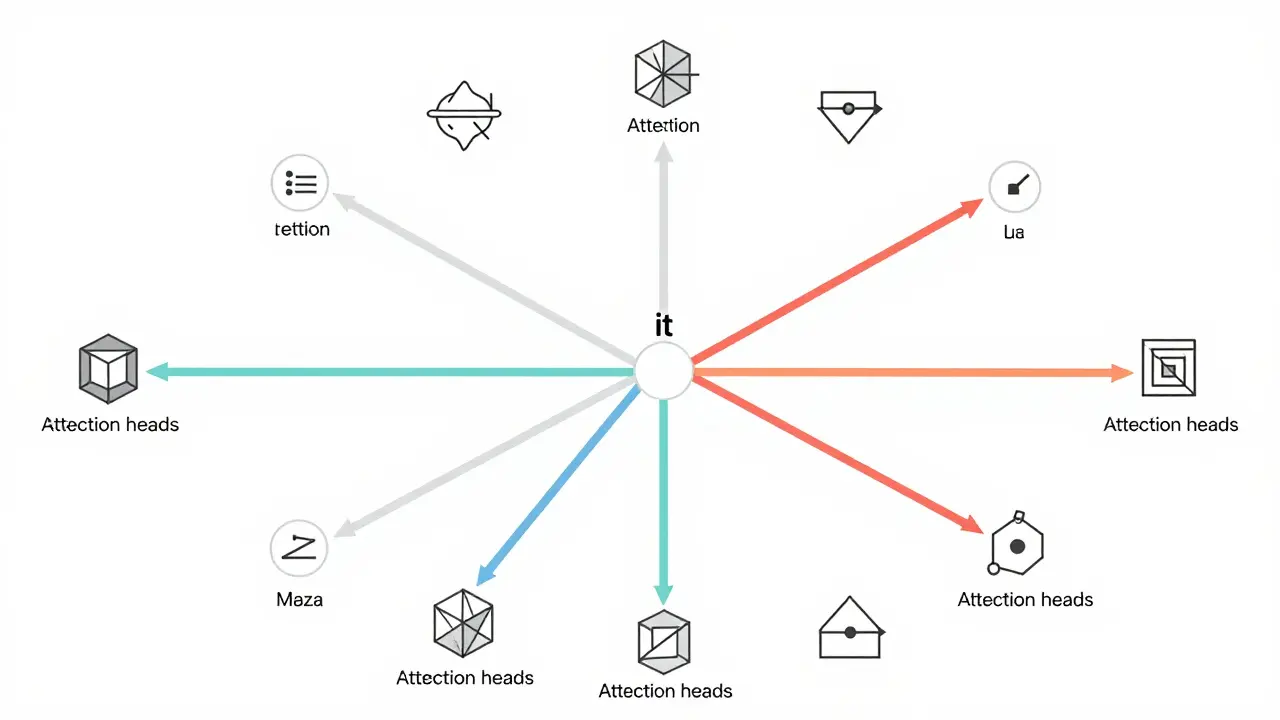

Query vectors learn to highlight specific features of a token that are most predictive of its relationships with others. For instance, in the sentence "The dog chased the ball because it was fast," the Query for "it" learns to focus on grammatical subject roles and proximity to verbs. Key vectors learn to encode syntactic positions and semantic categories, making them easily retrievable by matching Queries. Value vectors learn to carry nuanced meanings, such as distinguishing between physical speed and metaphorical quickness.

This tri-part decomposition enables simultaneous learning of multiple linguistic phenomena. Different layers and attention heads specialize in different tasks. Early layers often capture local syntax and part-of-speech tags, while deeper layers handle discourse structure, coreference resolution, and logical reasoning. Multi-head attention amplifies this effect by running parallel QKV computations, allowing the model to track diverse relationships concurrently.
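
A rough sketch of the "parallel QKV computations" idea: each head has its own $W_q$, $W_k$, and $W_v$, runs attention independently, and the per-head outputs are concatenated for each token. The sizes and random weights below are hypothetical, and the final output projection that real transformers usually apply after concatenation is left out to keep the sketch short.

```python
import math
import random

random.seed(1)  # fixed seed so the sketch is reproducible

def matmul(A, B):
    """Multiply matrix A (n x m) by matrix B (m x p) using plain lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def softmax(row):
    peak = max(row)
    exps = [math.exp(s - peak) for s in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention for a single head."""
    d = len(Q[0])
    K_T = [list(col) for col in zip(*K)]
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in matmul(Q, K_T)]
    return matmul(weights, V)

def random_matrix(rows, cols):
    """Stand-in for learned weights."""
    return [[random.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

def multi_head_attention(X, heads):
    """heads is a list of (W_q, W_k, W_v) tuples, one tuple per head."""
    per_head = [
        attention(matmul(X, W_q), matmul(X, W_k), matmul(X, W_v))
        for (W_q, W_k, W_v) in heads
    ]
    # Concatenate the head outputs token by token.
    return [[x for head in per_head for x in head[t]] for t in range(len(X))]

# Hypothetical sizes: 3 tokens, 4-d embeddings, 2 heads of width 2.
n_tokens, d_model, n_heads, d_head = 3, 4, 2, 2
X = random_matrix(n_tokens, d_model)
heads = [
    tuple(random_matrix(d_model, d_head) for _ in range(3))
    for _ in range(n_heads)
]

out = multi_head_attention(X, heads)
print(len(out), len(out[0]))  # 3 4: each token gets n_heads * d_head numbers
```

Since every head has its own projections, each one can learn to score a different kind of relationship over the same tokens.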

Advantages Over Recurrent Architectures

Before transformers dominated AI, recurrent neural networks (RNNs) and Long Short-Term Memory (LSTM) units were the standard for sequence modeling. However, they processed inputs sequentially, creating bottlenecks for long-range dependencies. If a word appeared early in a document, its influence had to pass through many hidden states before reaching later tokens, leading to information loss.

Transformers solve this by processing all tokens in parallel. Every token can attend to every other token directly, regardless of distance. This direct access shortens the path between distant tokens to a single step, which largely avoids the vanishing gradients over long distances that plague RNNs. As a result, LLMs can maintain coherence over tens of thousands of tokens, enabling applications like code generation, legal document analysis, and scientific summarization.

The efficiency gain is substantial, but it comes from parallelism rather than from a lower operation count. LSTM computation scales linearly with sequence length but must run one step at a time, while naive attention scales quadratically yet can process all tokens at once. Thanks to hardware optimizations and sparse attention techniques, modern LLMs achieve superior performance without prohibitive computational costs. The shift from sequential to parallel processing marked a turning point in natural language processing history.
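
To make the linear-versus-quadratic contrast concrete, the sketch below counts two quantities for a sequence of n tokens: the sequential steps a recurrent layer takes, and the query-key scores one naive attention head computes. It is back-of-the-envelope arithmetic under those simplifying assumptions, not a benchmark, and both function names are introduced here.

```python
def recurrent_step_count(n_tokens):
    """Sequential steps an RNN or LSTM layer takes over the sequence: n."""
    return n_tokens

def attention_score_count(n_tokens):
    """Query-key pairs scored by one naive attention head: n * n."""
    return n_tokens * n_tokens

for n in (1_000, 10_000, 100_000):
    print(f"n={n:>7,}  recurrent steps={recurrent_step_count(n):>7,}  "
          f"attention scores={attention_score_count(n):>14,}")
```

Growing the sequence tenfold multiplies the attention score count by one hundred, which is why long-context models lean on the optimizations mentioned above.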

Practical Implications for Model Design

For developers and researchers, understanding QKV dynamics informs better model design choices. Adjusting the dimension $d$ trades capacity against compute. Larger dimensions allow finer-grained representations but increase memory usage. Tuning the number of attention heads balances specialization against redundancy.
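
The trade-off can be put in rough numbers. The sketch below assumes square projection matrices with no bias terms and a model width split evenly across heads, which is a common convention but not the only one; `qkv_parameter_count` and `head_dim` are hypothetical helper names, and the example sizes are illustrative rather than taken from any specific model.

```python
def qkv_parameter_count(d_model):
    """Weights in W_q, W_k, and W_v for one layer, assuming each is d_model x d_model."""
    return 3 * d_model * d_model

def head_dim(d_model, n_heads):
    """Per-head width when the model width is split evenly across heads."""
    if d_model % n_heads != 0:
        raise ValueError("d_model must be divisible by n_heads")
    return d_model // n_heads

# Illustrative sizes only.
for d_model, n_heads in [(512, 8), (1024, 16), (4096, 32)]:
    print(f"d_model={d_model:>4}  heads={n_heads:>2}  "
          f"head_dim={head_dim(d_model, n_heads):>3}  "
          f"qkv_params_per_layer={qkv_parameter_count(d_model):,}")
```

Doubling the width quadruples the QKV weight count per layer, while adding heads at a fixed width leaves the count unchanged and narrows each head instead.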

Additionally, monitoring attention patterns helps diagnose model behavior. Visualizing attention weights reveals whether the model focuses on relevant keywords or gets distracted by noise. Interpretability techniques such as attention rollout help build trust in high-stakes applications like healthcare diagnostics or autonomous decision-making systems.
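
As a rough illustration of attention rollout, the sketch below follows the commonly described recipe: take one attention matrix per layer (already averaged over heads), mix each with the identity matrix to account for residual connections, and multiply the results layer by layer. The two 2x2 matrices are made-up inputs, and the equal 0.5/0.5 mixing is an assumption of this sketch.

```python
def matmul(A, B):
    """Multiply matrix A (n x m) by matrix B (m x p) using plain lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def attention_rollout(layers):
    """Combine per-layer attention matrices (first layer first) into one map.

    Each matrix is assumed to be head-averaged with rows summing to 1.
    """
    n = len(layers[0])
    identity = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    rollout = identity
    for A in layers:
        # Blend attention with the identity to model the residual path.
        mixed = [[0.5 * A[i][j] + 0.5 * identity[i][j] for j in range(n)]
                 for i in range(n)]
        rollout = matmul(mixed, rollout)
    return rollout

# Made-up attention weights for a 2-token, 2-layer model.
layer_1 = [[0.9, 0.1], [0.2, 0.8]]
layer_2 = [[0.5, 0.5], [0.3, 0.7]]

rollout = attention_rollout([layer_1, layer_2])
for row in rollout:
    print([round(x, 3) for x in row])
```

Row i of the result estimates how much each input token contributes to token i after both layers; the rows still sum to 1, so they can be read like attention weights.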

As models grow larger, optimizing QKV projections becomes increasingly important. Efficient implementations use kernel fusion, mixed precision arithmetic, and approximate attention algorithms to reduce latency. These engineering improvements ensure that theoretical advantages translate into real-world usability.

Conclusion

The Key, Query, and Value projections form the backbone of modern AI intelligence. By separating search intent, identity encoding, and content retrieval, they enable efficient, context-aware processing at scale. Their learned representations power everything from chatbots to creative writing assistants, demonstrating why transformer architecture remains the gold standard in machine learning.

Mastering these concepts opens doors to advanced topics like prompt engineering, fine-tuning strategies, and custom model development. Whether you're building enterprise solutions or exploring academic research, grasping how QKV matrices shape meaning is essential for navigating the future of artificial intelligence.

Why are there three separate matrices (Q, K, V) instead of one?

Separating queries, keys, and values allows the model to decouple the act of searching from the content being retrieved. This modularity enables more flexible and efficient attention mechanisms, where different aspects of a token’s role (searching vs. providing info) can be optimized independently.

How does scaling affect attention calculations?

Scaling prevents dot products from becoming too large. Large dot products push softmax outputs toward extreme values (near 0 or 1), where gradients become vanishingly small and convergence suffers. Dividing by $\sqrt{d}$ keeps activations in a healthy range for training.
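
The effect is easy to see numerically. If the components of a Query and a Key are independent with mean 0 and variance 1, their dot product has variance $d$, so raw scores spread out as $d$ grows. The sketch below, using random vectors as a stand-in for real activations (an assumption of this example), compares the softmax weights with and without the $\sqrt{d}$ division.

```python
import math
import random

random.seed(0)  # fixed seed so the sketch is reproducible

def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

d = 512      # hypothetical query/key dimension
n_keys = 8   # hypothetical number of tokens to attend over

query = [random.gauss(0.0, 1.0) for _ in range(d)]
keys = [[random.gauss(0.0, 1.0) for _ in range(d)] for _ in range(n_keys)]

scores = [sum(q * k for q, k in zip(query, key)) for key in keys]

unscaled = softmax(scores)
scaled = softmax([s / math.sqrt(d) for s in scores])

print(f"largest weight without scaling: {max(unscaled):.3f}")
print(f"largest weight with scaling:    {max(scaled):.3f}")
```

Without scaling, one key tends to take nearly all of the weight; with scaling, the weights are spread more evenly, which leaves the softmax in a region where gradients are still informative.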

Can attention mechanisms replace traditional NLP techniques?

Yes, in most cases. Attention-based models outperform older rule-based or statistical approaches in accuracy, adaptability, and generalization. They handle ambiguity, context shifts, and unseen data far better than legacy systems.

What happens if I remove the Value matrix?

Without Values, the model couldn’t retrieve any actual content; it would only know which tokens are related but not what those relationships mean. The entire purpose of attention breaks down without a payload to aggregate.

Are QKV matrices fixed after training?

Yes, once trained, the weight matrices remain static unless fine-tuned further. During inference, they apply consistently across new inputs, leveraging previously learned patterns to generate coherent responses.

- May 3, 2026

- Collin Pace


