Sparse and Dynamic Routing: How MoE is Scaling Modern LLMs

The Problem with Dense Scaling

In a traditional dense transformer, every parameter takes part in processing every token, so if you double the number of parameters to make the model smarter, you roughly double the amount of math the computer has to do for every token. Cost climbs in lockstep with size, and the bill for training and serving grows with it. By 2024, researchers realized that adding more parameters to dense models was yielding diminishing returns. You'd get a slightly better answer, but it would cost disproportionately more to train and run.

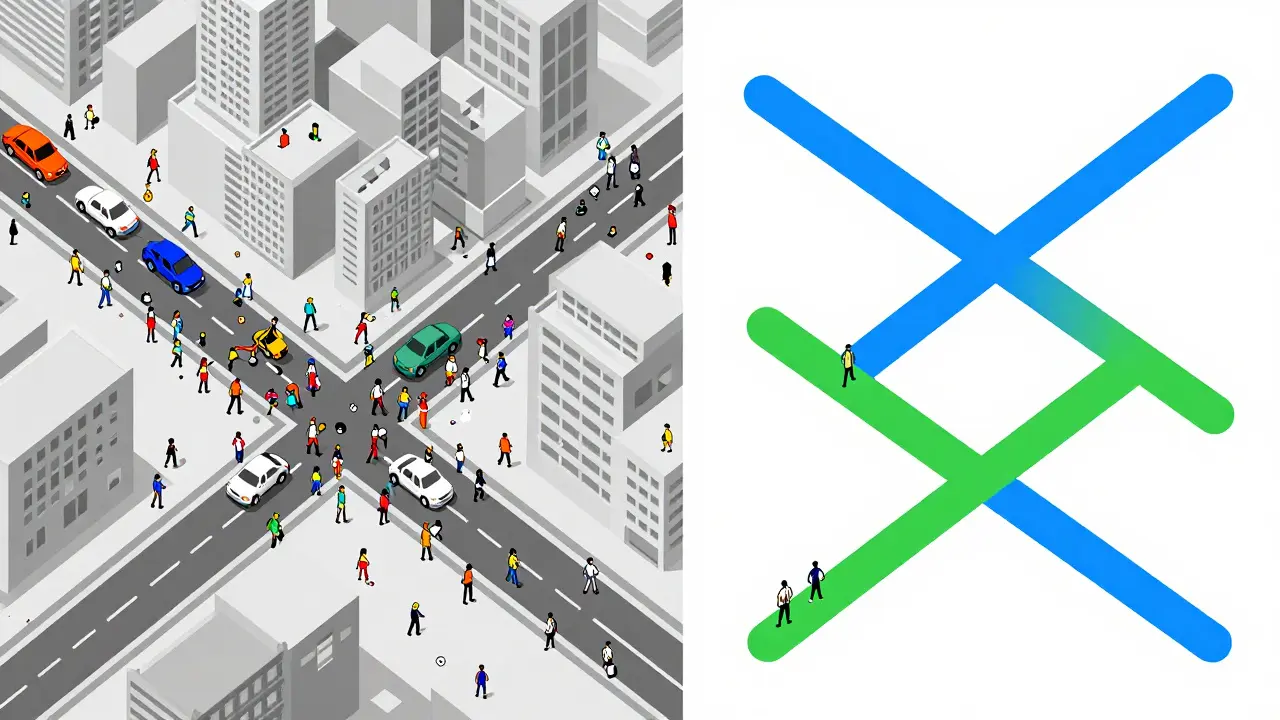

The solution wasn't just to make models smaller, but to make them smarter about which parts of their brain they used. This is where sparse and dynamic routing comes in. Instead of one giant block of logic, the model is split into many smaller, specialized experts. A "router" or gating network then decides which expert is best suited for the specific token being processed. It's like having a hospital with a triage nurse (the router) who sends you to a cardiologist or a dermatologist, rather than forcing every doctor in the building to examine you.

How Dynamic Routing Actually Works

At the heart of this system is the routing mechanism. When a token enters the network, the router computes a probability distribution over all available experts. Usually, the system uses a "top-k" selection process, where k is typically 1 or 2. If a model has 128 experts, but only 2 are activated, the computational cost per token drops significantly while the model retains the representational capacity of all 128.
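
The top-k step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not any particular model's implementation: the function names (`top_k_route`, `moe_forward`), the toy experts, and the choice to renormalize the selected probabilities so they sum to 1 are all assumptions made for the example.

```python
import math

def softmax(logits):
    # Subtract the max for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k=2):
    """Return [(expert_index, weight), ...] for the k most probable experts."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    # Assumption: renormalize so the selected weights sum to 1.
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(token, router_logits, experts, k=2):
    """Weighted sum of the outputs of the selected experts only."""
    out = [0.0] * len(token)
    for idx, weight in top_k_route(router_logits, k):
        expert_out = experts[idx](token)  # only k experts are ever called
        out = [o + weight * v for o, v in zip(out, expert_out)]
    return out

# Toy setup: 4 hypothetical experts, each scaling the token by a different factor.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, 0.3, 1.5]  # made-up router scores for one token
selected = top_k_route(logits, k=2)
output = moe_forward([1.0, -1.0], logits, experts, k=2)
```

With these made-up scores the router picks experts 1 and 3 and never calls the other two, which is where the compute saving comes from.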

Recent work has carried the routing idea into interpretability tooling with RouteSAE (Route Sparse Autoencoder), which dynamically integrates multi-layer residual streams and decides which layer's activation is most relevant. Unlike earlier approaches that looked at one layer at a time, RouteSAE uses sum pooling to create a condensed representation of the input across multiple layers, then picks the best layer based on the highest routing probability. This lets it pull high-level concepts from deep layers and basic syntax from shallow layers, reportedly improving interpretation scores by over 22% compared to single-layer methods.
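
To make the layer-routing idea concrete, here is a rough sketch built only from the description above: sum-pool the per-layer activations, score each layer, and keep the most probable one. The linear router, the `route_layer` name, and the toy numbers are assumptions for illustration and are not taken from the RouteSAE code.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def route_layer(layer_activations, router_weights):
    """Pick one layer's activation for a single token.

    layer_activations: one residual-stream vector per layer (all length d).
    router_weights: one weight row per layer (hypothetical learned router).
    """
    d = len(layer_activations[0])
    # Sum pooling: collapse all layers into a single d-dimensional summary.
    pooled = [sum(act[j] for act in layer_activations) for j in range(d)]
    # One score per layer, turned into a probability distribution.
    logits = [sum(w * p for w, p in zip(row, pooled)) for row in router_weights]
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    return best, probs[best], layer_activations[best]

# Toy example: 3 layers, hidden size 2, made-up values.
activations = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = [[0.1, 0.0], [0.0, 0.2], [0.5, 0.5]]
best_layer, prob, chosen = route_layer(activations, weights)
```

In this toy case the third layer wins, and its activation is what would be passed on to the autoencoder.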

| Feature | Dense Architecture | Sparse MoE Architecture |
|---|---|---|
| Parameter Activation | 100% for every token | Only the routed experts (typically 12.5% to 25%) |
| Compute per Token | Scales with total parameter count | Scales with active parameter count |
| Memory Requirement | In line with the compute performed | Very high relative to compute (must store all experts) |
| Training Stability | High / Predictable | Lower (risk of expert collapse) |

The Hardware Trade-off: Compute vs. Memory

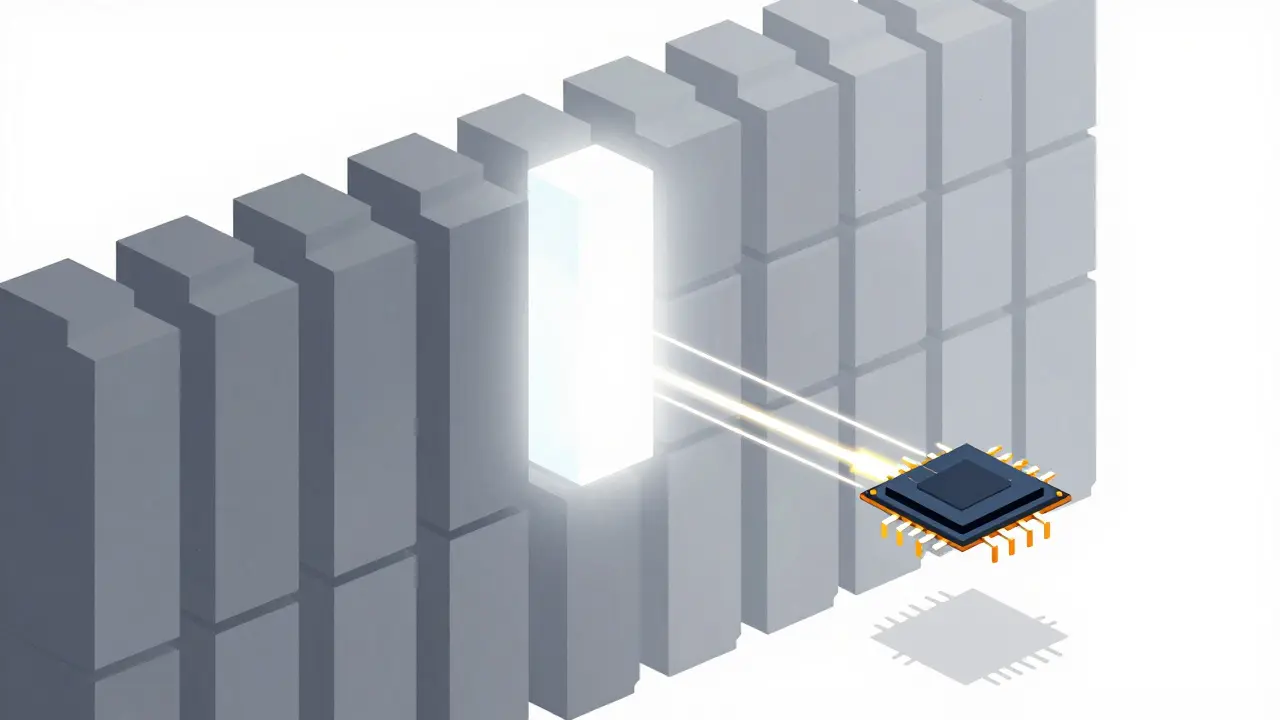

If MoE is so much faster, why isn't every single model one? Because there's a catch: memory. While a sparse model only uses a few parameters for the math, the hardware still needs to store all the experts in VRAM. This creates a massive memory bandwidth challenge. You might only be doing the work of a 10-billion parameter model, but you need the memory capacity of a 1-trillion parameter model to keep the experts ready.
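
A quick back-of-the-envelope calculation shows the gap. The sketch below assumes 16-bit weights (2 bytes per parameter) and the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass. It counts weights only and ignores activations, caches, and any parameters shared between experts, so treat the numbers as rough.

```python
def footprint(total_params, active_params, bytes_per_param=2):
    """Rough weight memory (GB) and forward-pass FLOPs per token."""
    weight_memory_gb = total_params * bytes_per_param / 1e9
    flops_per_token = 2 * active_params  # rule-of-thumb estimate
    return weight_memory_gb, flops_per_token

# Sparse model: 1 trillion parameters stored, 10 billion used per token.
moe_mem, moe_flops = footprint(total_params=1e12, active_params=1e10)

# Dense model with the same per-token compute: 10 billion parameters.
dense_mem, dense_flops = footprint(total_params=1e10, active_params=1e10)

print(f"MoE:   {moe_mem:,.0f} GB of weights, {moe_flops:.1e} FLOPs/token")
print(f"Dense: {dense_mem:,.0f} GB of weights, {dense_flops:.1e} FLOPs/token")
```

Under these assumptions both models do the same math per token, but the sparse one needs about 100 times more memory just to hold its weights.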

This is why hardware companies like NVIDIA, a leader in GPU and AI acceleration hardware, and Cerebras are focusing so heavily on hardware-software co-design, building infrastructure that can handle the irregular memory access patterns of MoE. Moving massive amounts of data from memory to the processor (the "memory wall") becomes the primary bottleneck when a different set of experts may be needed for every single token in a sentence.

Common Pitfalls: Expert Collapse and Load Balancing

Routing isn't perfect. One of the biggest headaches for AI engineers is "expert collapse." This happens when the router develops a favorite. If the model decides that Expert #4 is slightly better than the others, it will start sending more tokens to Expert #4. Because Expert #4 gets more training, it gets even better, and the router sends even more tokens there. Eventually, the other 127 experts are ignored and never learn anything.

To stop this, developers use auxiliary loss functions. These are basically "penalties" the model pays if it ignores certain experts, forcing the router to distribute the workload more evenly. If you don't get this balance right, you end up with a massive model that performs like a tiny one because most of its capacity is sitting idle.
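
One widely used form of this penalty, popularized by the Switch Transformer, multiplies the fraction of tokens each expert actually receives by the average probability the router assigns to it, then sums over experts. The sketch below is a plain-Python illustration for top-1 routing; the function name, the `alpha` value, and the toy batches are assumptions for the example.

```python
def load_balancing_loss(router_probs, assignments, num_experts, alpha=0.01):
    """alpha * N * sum_i(f_i * P_i) for one batch of tokens.

    router_probs: per-token probability list over experts.
    assignments: per-token index of the expert actually chosen (top-1).
    """
    n_tokens = len(assignments)
    # f_i: fraction of tokens dispatched to expert i.
    f = [0.0] * num_experts
    for expert in assignments:
        f[expert] += 1.0 / n_tokens
    # P_i: mean router probability given to expert i.
    p = [sum(tok[i] for tok in router_probs) / n_tokens
         for i in range(num_experts)]
    return alpha * num_experts * sum(fi * pi for fi, pi in zip(f, p))

# Balanced batch: 4 tokens spread evenly over 4 experts.
balanced = load_balancing_loss(
    [[0.25, 0.25, 0.25, 0.25]] * 4, [0, 1, 2, 3], num_experts=4)

# Collapsed batch: the router sends everything to expert 0.
collapsed = load_balancing_loss(
    [[0.97, 0.01, 0.01, 0.01]] * 4, [0, 0, 0, 0], num_experts=4)
```

The collapsed batch scores almost four times higher than the balanced one, so adding this term to the training loss nudges the router back toward an even split. In a real training framework only the probability term carries gradients, since the token counts are not differentiable.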

The Future of Sparsity in 2026

We are moving beyond simple top-k routing. The next frontier is adaptive sparsity, where the model decides how many experts it needs based on the difficulty of the prompt. If you ask a model "What is 2+2?", it might only activate one small expert. If you ask it to analyze a complex legal contract, it might activate ten experts to ensure accuracy.
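
One possible way to get this behavior is to keep adding experts until their combined router probability crosses a threshold, so a confident router stops early and an uncertain one recruits more help. The sketch below is a hypothetical scheme for illustration only: the `adaptive_route` name, the 0.6 threshold, and the use of router confidence as a stand-in for prompt difficulty are all assumptions.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def adaptive_route(router_logits, threshold=0.6, max_experts=10):
    """Select experts in order of probability until their mass >= threshold."""
    probs = softmax(router_logits)
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen, mass = [], 0.0
    for i in order:
        chosen.append(i)
        mass += probs[i]
        if mass >= threshold or len(chosen) == max_experts:
            break
    return chosen

# Confident router (an "easy" token): one expert dominates.
easy = adaptive_route([5.0, 0.0, 0.0, 0.0])

# Uncertain router (a "hard" token): probability is spread out.
hard = adaptive_route([1.0, 0.9, 0.8, 0.7])
```

Here the easy token activates a single expert while the hard one activates three, with `max_experts` capping the worst case.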

The trajectory is clear: to reach the "trillion-parameter era" without bankrupting the companies building these models, sparsity is the only viable path. We're seeing this in Meta's Llama 4 models and Mistral's Mixtral models, which have already embraced these techniques to deliver high-performance AI that can actually fit on a cluster of GPUs without requiring a dedicated power plant.

Does MoE make the model smarter or just faster?

Both. By having more total parameters, the model has a larger "knowledge base" (representational capacity) to store facts and nuances. Because it only activates a few experts at a time, it can maintain that intelligence while remaining fast enough for real-time use.

What is the main difference between dense and sparse models?

A dense model uses all its weights for every single input. A sparse model (like MoE) uses a routing layer to select only a small subset of weights (experts) to process each input, drastically reducing the compute needed per token.

Why is memory a problem for sparse models?

Even though only a few experts are active, the entire set of experts must be accessible in memory. This means you need enough VRAM to store a trillion parameters, even if you're only "calculating" with a few billion at a time.

What is "Expert Collapse"?

Expert collapse is a training failure where the router repeatedly chooses the same few experts, leaving the others underutilized and untrained. This wastes the model's capacity and is usually fixed with auxiliary load-balancing losses.

How does RouteSAE improve on standard routing?

RouteSAE routes across different layers of the network rather than working within just one. By analyzing the residual streams of multiple layers, it can combine low-level and high-level features, which significantly boosts how well we can interpret what the model is actually doing.

- Apr 8, 2026

- Collin Pace
