Tag: Mixture of Experts

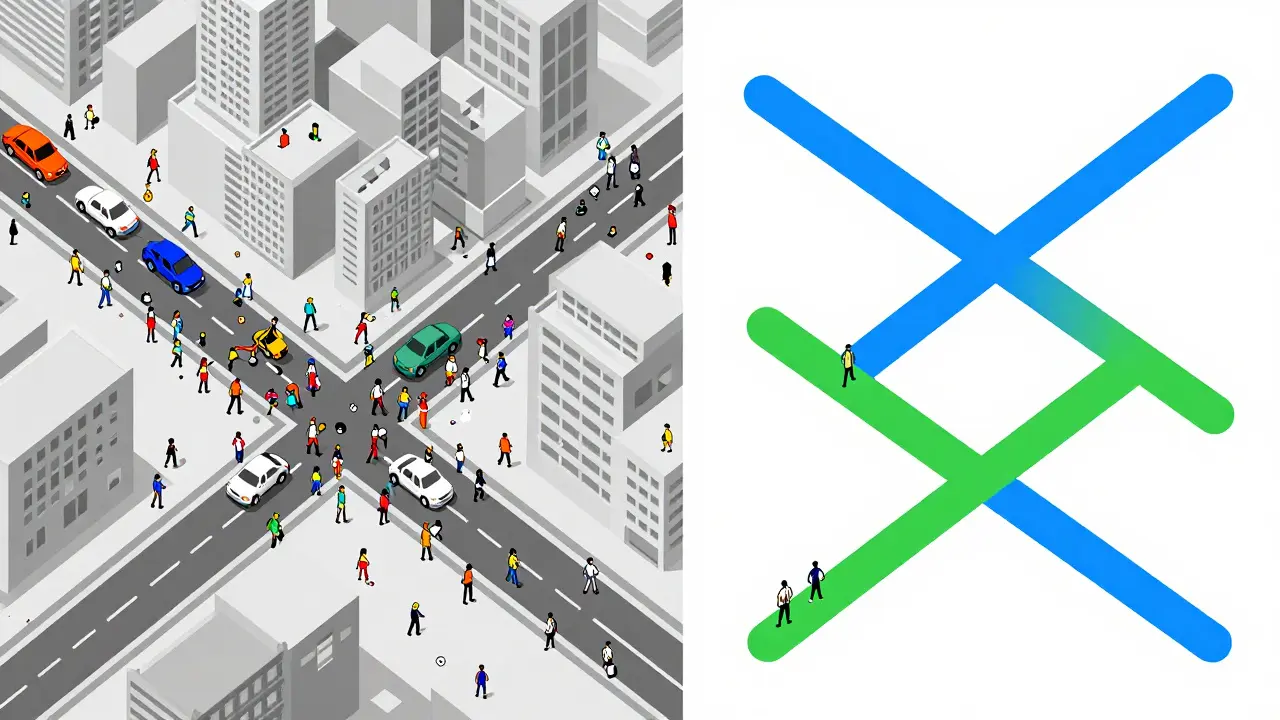

Sparse and Dynamic Routing: How MoE is Scaling Modern LLMs

Explore how sparse, dynamic routing in Mixture of Experts (MoE) models lets LLMs scale to trillions of parameters without a proportional increase in computational cost. Learn about RouteSAE and expert collapse.

- Apr 8, 2026

- Collin Pace

