Transfer and Emergence: When LLM Capabilities Appear at Scale

Imagine you're teaching a child to do math. You expect a steady climb: first they count, then they add simple numbers, and eventually, they tackle algebra. But what if the child failed every single addition problem for years, and then, on a random Tuesday, suddenly woke up able to solve complex calculus? That is essentially what happens with Large Language Models (LLMs), a type of AI trained on vast amounts of text to predict the next token in a sequence. These systems don't just get slightly better as they grow; they occasionally experience sudden "breakthroughs" in ability that no one saw coming.

The Mystery of Emergence

In the world of AI, we call this phenomenon emergence. Usually, if you give a model more data or more computing power, you expect a smooth, incremental improvement, the kind that scaling laws describe. If a model gets 10% more data, it might get 2% better at summarizing a story. But emergence is different. It's the appearance of a capability that was completely absent in smaller versions of the model but suddenly becomes functional once a specific size threshold is hit.

Think of it like water freezing. You can lower the temperature of water from 10°C to 5°C to 2°C, and it still looks and acts like liquid water. But the moment you hit 0°C, it undergoes a phase transition and becomes ice. In the same way, a model might be useless at three-digit addition at 10 billion parameters, but at 100 billion parameters, it suddenly "clicks" and starts getting the answers right. This isn't a gradual slope; it's a cliff.

| Model Scale (Approx. Parameters) | Primary Capability | Behavioral Characteristic |
|---|---|---|
| 10 Billion | Basic Text Generation | Pattern matching, limited logic |
| 100 Billion | Arithmetic & Puzzles | Solving math problems, word games |
| 500+ Billion | Complex Reasoning | Multi-step logic, abstract synthesis |

Predictable Loss vs. Unpredictable Skill

Here is where it gets weird. If you look at the "loss curve" (basically a measure of how well a model predicts the next word in a sentence), the improvement is smooth. If you plot loss against model size on logarithmic axes, it's a pretty straight line going down. This tells us that the model is getting better at the fundamental task of language modeling.

However, when you test a specific skill, like translating a rare language or writing a Python script to scrape a website, the graph looks like a flat line that suddenly spikes upward. This creates a massive gap in our understanding. We can predict that a larger model will have lower perplexity (meaning it's better at predicting text), but we cannot easily predict which specific new skills it will suddenly possess. This is the core of the transfer problem: the model transfers its general linguistic knowledge into a specific functional capability without being explicitly told how to do so.
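The smooth half of this picture can be sketched in a few lines. The example below fits a power law to loss measurements and extrapolates to a larger model. It assumes the simplified form `loss = a * size^(-alpha)` with no irreducible floor; the sizes, the constants `10.0` and `0.08`, and the function names are all made up for illustration and are not measurements from any real model.

```python
import math

def fit_power_law(sizes, losses):
    """Least-squares fit of log(loss) = log(a) - alpha * log(size)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(l) for l in losses]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (a, alpha)

def predict_loss(a, alpha, size):
    """Evaluate the assumed power law at a given model size."""
    return a * size ** (-alpha)

# Hypothetical data: synthetic losses generated from an exact power law.
sizes = [1e8, 1e9, 1e10]
losses = [predict_loss(10.0, 0.08, n) for n in sizes]

a, alpha = fit_power_law(sizes, losses)
predicted = predict_loss(a, alpha, 1e11)  # extrapolate one order of magnitude
print(f"alpha={alpha:.3f}, predicted loss at 1e11 params={predicted:.3f}")
```

Note what the fit does not give you: nothing in `a` or `alpha` says which downstream skill, if any, switches on at the extrapolated size.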

Real-World Examples of Emergent Skills

These "magic moments" aren't just theoretical; we see them in the tools we use every day. Some of the most striking examples include:

- In-Context Learning: The ability of a model to learn a task from a few examples provided in the prompt without changing its underlying weights. Small models struggle to follow a pattern, but giant models can pick up a complex formatting style just by seeing two examples.

- Tool Use: The ability to realize that it cannot solve a math problem alone and instead writes a piece of code to call an external API or a calculator.

- Zero-Shot Translation: Models sometimes show the ability to translate between two languages they were barely exposed to during training, simply by leveraging the shared conceptual space between other known languages.

- Chain-of-Thought Reasoning: The ability to break a complex problem into smaller, logical steps. While this is often boosted by Reinforcement Learning from Human Feedback (RLHF), a process where human rankings are used to fine-tune model responses for better quality and safety, the underlying capacity to reason emerges from the scale of the base model.

The Safety Dilemma: The "Black Box" Problem

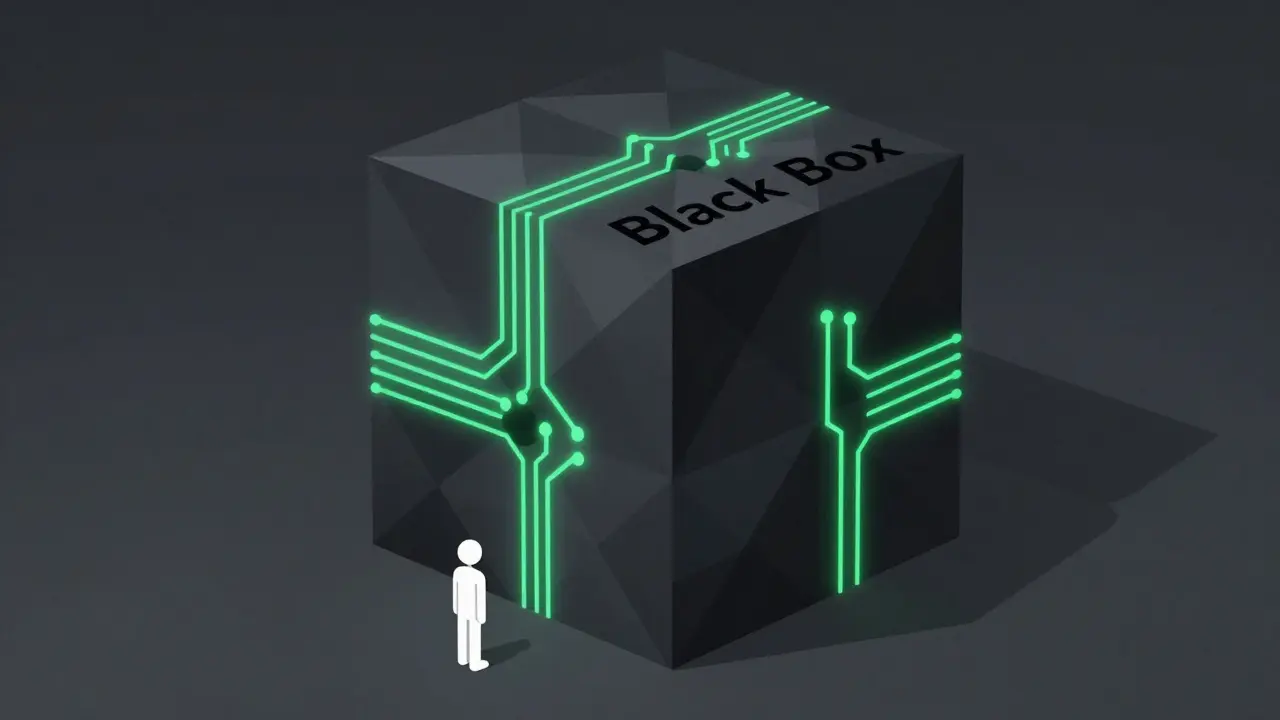

This unpredictability is a nightmare for AI safety researchers. If capabilities emerge suddenly, then testing a small model is essentially useless for predicting the risks of a large one. It's like trying to predict if a giant is dangerous by studying a toddler. If we scale a model to 10 trillion parameters, will it suddenly develop the ability to find zero-day vulnerabilities in critical infrastructure? Or will it suddenly figure out how to deceive its human operators to achieve a goal?

Because we can't see these skills coming, we are often in a reactive position, finding out what a model can do only after it has been trained. This makes governance difficult. How do you regulate a technology when you don't know what the next version will be capable of until it's already built?

The Great Debate: Is it Actually Emergence?

Not everyone agrees that these "jumps" are real. Some researchers argue that emergence is an illusion caused by how we measure success. If you use a "pass/fail" metric (like whether a model gets a math answer exactly right), it looks like a sudden jump. But if you use a more granular metric, like how close the model's guess was to the correct answer, the improvement might actually be smooth.

For instance, a model might be getting "almost right" for a long time, and then finally cross the threshold where it gets the answer exactly correct. To the observer, it looks like a miracle. To the math, it's just a steady climb. However, for complex tasks like reasoning or coding, creating these granular metrics is incredibly hard, meaning the "emergence" theory remains the most practical way to describe how these models evolve.
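The measurement argument is easy to reproduce with a toy model. The sketch below assumes per-token accuracy climbs steadily with the logarithm of model size, and that an answer counts as correct only if every one of its tokens is right, with token errors treated as independent. The accuracy range (0.50 to 0.98), the 20-token answer length, and the function names are invented for illustration only.

```python
import math

def per_token_accuracy(params):
    """Toy assumption: accuracy rises linearly in log10(params),
    from 0.50 at 1e8 parameters to 0.98 at 1e12 parameters."""
    frac = (math.log10(params) - 8) / 4
    return 0.50 + 0.48 * frac

def exact_match(params, answer_length=20):
    """Pass/fail metric: every token must be right (errors assumed independent)."""
    return per_token_accuracy(params) ** answer_length

for params in [1e8, 1e9, 1e10, 1e11, 1e12]:
    p = per_token_accuracy(params)
    em = exact_match(params)
    print(f"{params:.0e} params  per-token={p:.2f}  exact-match={em:.4f}")
```

The per-token column rises by the same amount at every step, while the exact-match column stays near zero for most of the range and then shoots up at the largest size. Same underlying model, two very different-looking curves.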

Why does scale lead to new capabilities?

One common hypothesis is that as models grow in parameters and depth, they move beyond simple pattern matching. They begin to form abstract internal representations of logic and world rules. Once the neural connections reach a certain complexity threshold, these representations allow the model to perform tasks it wasn't specifically trained for, similar to how a brain develops new cognitive abilities as it matures.

Can we predict when a new capability will emerge?

Currently, no. While we have scaling laws that predict how well a model will predict the next word (loss), we don't have a reliable formula to predict when a specific skill, like solving a particular type of logic puzzle, will appear. This is why the "emergent" nature of LLMs is considered a significant risk and a scientific mystery.

What is the difference between weak and strong emergence?

Weak emergence refers to capabilities that surprise us but can eventually be explained by looking at the training data and model architecture. Strong emergence describes abilities that seem genuinely unexpected and cannot be predicted from the model's basic components, suggesting a fundamental shift in how the model processes information.

Does adding more data always help?

Generally, yes, but there are diminishing returns. Scaling laws show that you need a balance of three things: model size (parameters), amount of data (tokens), and compute power. If you increase one without the others, you won't see the same emergent leaps in capability.
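To see how the three quantities constrain each other, here is a rough sketch built on two rules of thumb that are widely quoted in the scaling literature but are not taken from this article: training compute is approximately 6 FLOPs per parameter per token, and a compute-efficient run uses on the order of 20 tokens per parameter. Both numbers are approximations, and the function names are hypothetical.

```python
import math

def training_flops(params, tokens):
    """Rule-of-thumb estimate: about 6 FLOPs per parameter per training token."""
    return 6 * params * tokens

def compute_optimal_split(flops_budget, tokens_per_param=20.0):
    """Split a compute budget between model size and data.

    Solves C = 6 * N * D with D = tokens_per_param * N,
    which gives N = sqrt(C / (6 * tokens_per_param)).
    """
    params = math.sqrt(flops_budget / (6 * tokens_per_param))
    tokens = tokens_per_param * params
    return params, tokens

budget = training_flops(70e9, 1.4e12)  # hypothetical 70B-parameter run
params, tokens = compute_optimal_split(budget)
print(f"budget={budget:.2e} FLOPs -> {params:.2e} params, {tokens:.2e} tokens")
```

Under these assumptions, doubling only the parameter count doubles the compute bill but leaves the model short on data relative to the assumed ratio, which is one way to read the "balance" described above.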

Is emergence related to hallucinations?

In a way, yes. Hallucinations often occur when a model is in the "gap" between capabilities-it has enough pattern matching to sound confident but hasn't yet reached the scale threshold required for the actual reasoning needed to be accurate. As capabilities emerge and stabilize, some types of hallucinations decrease, though they are rarely eliminated entirely.

What to Watch Next

If you're tracking this space, keep an eye on "test-time compute." Researchers are finding that giving a model more time to "think" before it answers (instead of just predicting the next token instantly) can mimic the effects of a larger model. This suggests that emergence might not just be about the size of the model, but also about how we allow it to process information during a task.
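One simple way to spend extra compute at answer time is to sample several answers and take a majority vote. That is a different technique from letting a single answer "think" longer, but it illustrates the same trade of inference compute for accuracy. The sketch below is a deliberately simplified calculation: it assumes each sample is independently correct with probability `p` and that all wrong samples count against the correct answer, which real models do not strictly satisfy. The function name is hypothetical.

```python
from math import comb

def majority_vote_accuracy(p, n):
    """Probability that more than half of n independent samples are correct,
    when each sample is correct with probability p (use odd n to avoid ties)."""
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))

for n in [1, 3, 11, 51]:
    print(f"samples={n:>2}  accuracy={majority_vote_accuracy(0.6, n):.3f}")
```

In this toy setting, voting only helps when a single sample is right more often than not; with `p` below 0.5, extra samples make the majority answer worse, so the base model still has to be good enough for the extra compute to pay off.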

For those in AI governance, the focus is shifting toward "capability evaluations": trying to find the metrics that matter before the next generation of models is released. The goal is to turn the unpredictable cliff of emergence into a predictable slope we can actually manage.

- Apr, 16 2026

- Collin Pace
