Attention Mechanisms in Generative AI: From Self-Attention to Flash Attention

You’ve probably heard that Generative AI is the engine behind everything from chatbots to code assistants. But what actually makes these models smart? It’s not just the sheer number of parameters-it’s how they process information. At the heart of this capability lies a concept called attention mechanisms. These are the neural network components that allow models to weigh the importance of different words in a sentence, enabling them to understand context and generate coherent responses.

For years, building these models was limited by memory constraints. The math required to calculate attention grew quadratically with sequence length, meaning longer contexts meant exponentially more memory usage. That bottleneck changed dramatically with the introduction of Flash Attention, an algorithmic optimization that drastically reduces memory footprint while maintaining exact mathematical results. In this article, we’ll break down how attention evolved from early machine translation experiments to the high-speed kernels powering today’s largest language models.

The Origins of Attention in Neural Networks

To understand where we are, we need to look at where we started. Before Transformers dominated the landscape, researchers were struggling with recurrent neural networks (RNNs). RNNs processed data sequentially, which made them slow and prone to forgetting earlier parts of long sequences-a problem known as vanishing gradients.

The breakthrough came in 2014 when Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio published their paper on "Neural Machine Translation by Jointly Learning to Align and Translate." They introduced additive attention, allowing the decoder to look back at specific parts of the encoder’s output rather than relying on a single fixed vector representing the entire input sentence. This approach improved BLEU scores significantly on English-French translation tasks.

In 2015, Minh-Thang Luong and colleagues refined this further with multiplicative (dot-product) attention. By simplifying the computation to a dot product between decoder and encoder states, they made the mechanism faster and easier to implement. These early works established the core idea: instead of compressing all information into one bottleneck, let the model dynamically focus on relevant pieces of input for each output step.

Self-Attention and the Transformer Revolution

The real game-changer arrived in 2017 with the publication of "Attention Is All You Need" by Ashish Vaswani and his team at Google Brain. This paper introduced the Transformer architecture, which replaced recurrence entirely with self-attention layers. Unlike previous models that attended across two different sequences (encoder and decoder), self-attention allows a sequence to attend to itself.

Mathematically, scaled dot-product attention computes queries ($Q$), keys ($K$), and values ($V$) from the input embeddings. For each position $i$, the model calculates:

Attention($q_i$, $K$, $V$) = $ ext{softmax}(\frac{q_i \cdot k_j^T}{\sqrt{d_k}}) v_j$

This operation has a time complexity of $O(n^2 \times d_k)$ and requires $O(n^2)$ memory to store the attention matrix, where $n$ is the sequence length. While efficient for short sequences, this quadratic scaling became a major hurdle as models like GPT-3 (with 175 billion parameters) began handling contexts of thousands of tokens.

The original Transformer used multi-head attention, splitting the embedding dimension into multiple heads to capture diverse relationships-such as syntactic dependencies or semantic similarities-in parallel. This design proved highly effective, achieving state-of-the-art results on translation benchmarks and laying the groundwork for all subsequent large language models.

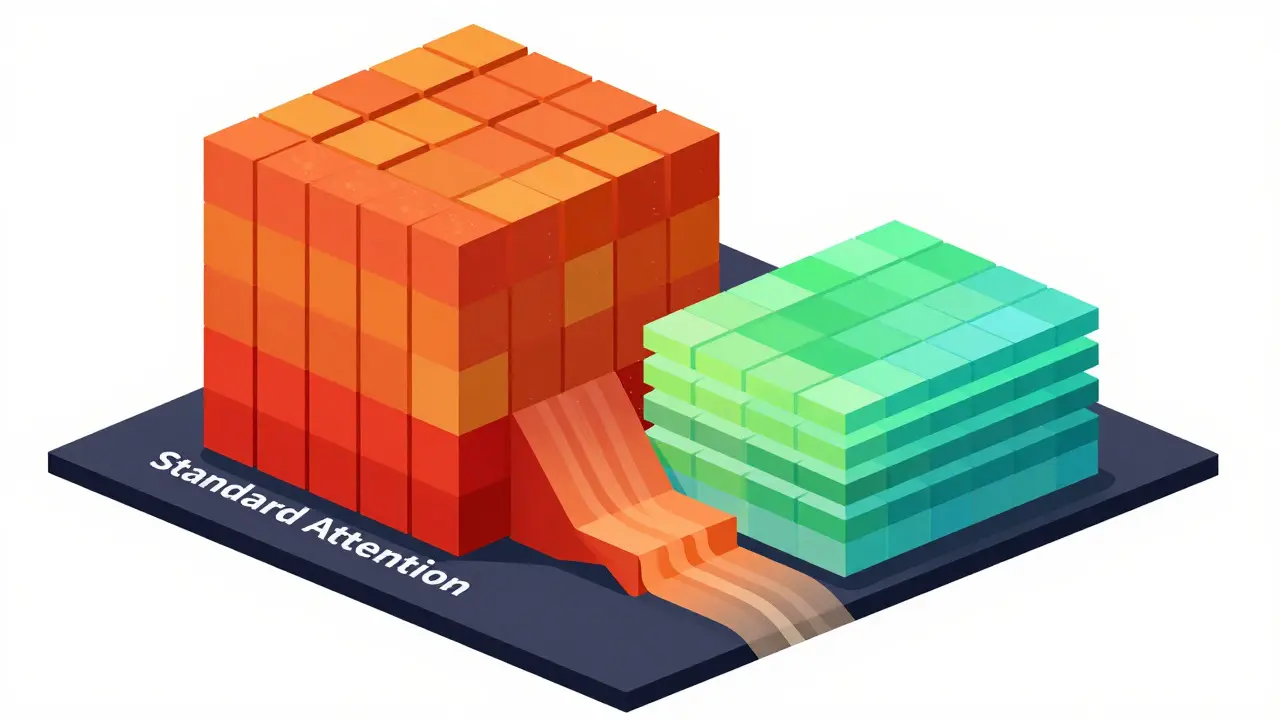

The Memory Bottleneck of Standard Attention

As generative AI moved beyond translation to general-purpose text generation, context windows expanded rapidly. GPT-3 supported 2,048 tokens, while later models like Claude 2 pushed past 100,000 tokens. However, standard self-attention struggles under these conditions due to its memory requirements.

Consider a sequence of 4,096 tokens. The attention matrix alone contains over 16 million elements per head. With 96 heads in GPT-3, storing these matrices in GPU high-bandwidth memory (HBM) consumes hundreds of megabytes per layer before accounting for gradients during training. On GPUs like the NVIDIA A100, which have 80GB of HBM2e, this quickly becomes unsustainable.

Traditional implementations load query, key, and value tensors from HBM to SRAM, compute the full $n \times n$ score matrix, write it back to HBM, apply softmax, and then multiply again with values. This involves multiple passes over large intermediate matrices, creating a severe memory bandwidth bottleneck. Compute units often sit idle waiting for data, leading to poor hardware utilization.

Efficient Approximations vs. Exact Optimizations

Between 2019 and 2021, researchers proposed various approximations to reduce attention costs. Methods like Reformer used locality-sensitive hashing to approximate nearest-neighbor attention in $O(n \log n)$ time. Linformer projected keys and values into low-rank representations for linear memory complexity. Performer utilized random feature maps to achieve linear-time softmax approximation.

While these approaches offered speedups, they introduced trade-offs. Approximate methods often resulted in slight drops in accuracy metrics such as perplexity or BLEU scores. For production-grade generative models, even small deviations can alter output quality significantly. Therefore, there was a strong demand for optimizations that maintained mathematical exactness while improving efficiency.

Flash Attention: An IO-Aware Solution

In 2022, Tri Dao and colleagues introduced FlashAttention, an exact implementation of standard attention designed specifically for GPU memory hierarchies. Rather than approximating the math, FlashAttention optimizes how data moves through the system.

The key insight is tiling. Instead of computing the entire $n \times n$ matrix at once, FlashAttention breaks queries, keys, and values into smaller blocks that fit into on-chip SRAM. It processes one tile at a time using fused CUDA kernels that combine matrix multiplication, softmax, and dropout in a single pass. Crucially, it employs an online softmax algorithm that maintains running maximums and sums of exponentials, producing numerically identical results to standard softmax without ever materializing the full attention matrix in HBM.

Benchmarks reported up to 2-4× speedups and 10-20× lower memory usage for sequences of 4,096-8,192 tokens on NVIDIA A100 GPUs. Importantly, validation accuracy remained unchanged compared to standard attention trained with identical hyperparameters.

Evolution to FlashAttention-2 and Beyond

Building on the initial success, FlashAttention-2 released in July 2023 further optimized tiling strategies and pipelining techniques. It improved effective TFLOPs/s from roughly 150-200 to over 300 on specific configurations, doubling performance for long-context causal attention.

By March 2026, reports emerged about Flash Attention 4, claiming 1,605 TFLOPs/s on NVIDIA B200 GPUs with 71% hardware utilization. These advancements demonstrate how closely attention kernel development is tied to hardware evolution. As new accelerators offer higher memory bandwidth and compute density, IO-aware algorithms continue to push closer to theoretical peak performance.

| Method | Complexity | Exactness | Primary Benefit |

|---|---|---|---|

| Standard Self-Attention | $O(n^2)$ | Exact | Simplicity |

| Reformer | $O(n \log n)$ | Approximate | Long-sequence scalability |

| Linformer | $O(n)$ | Approximate | Linear memory usage |

| FlashAttention | $O(n^2)$ | Exact | IO-aware, reduced HBM traffic |

Integration into Mainstream Frameworks

FlashAttention has been widely adopted across the deep learning ecosystem. PyTorch 2.0, released in March 2023, integrated `torch.nn.functional.scaled_dot_product_attention`, which automatically dispatches to optimized backends including FlashAttention when available. Hugging Face’s `transformers` library added support via the `flash-attn` backend, enabling open-source models like LLaMA and Mistral to run efficiently on consumer and enterprise hardware alike.

Practitioners report significant gains in throughput. Enabling FlashAttention can yield 2×-3× tokens-per-second improvements on RTX 4090 GPUs and 3×-4× gains on A100 clusters. Cloud providers see corresponding cost reductions, with fine-tuning jobs completing in fewer hours on expensive instances like AWS p4d.24xlarge.

Challenges and Considerations

Despite its benefits, integrating FlashAttention isn’t always straightforward. Users frequently encounter build issues related to CUDA version mismatches or incompatibilities with certain PyTorch releases. Developers must ensure their environment aligns with specific GPU architectures-for example, SM80 for A100 or SM90 for H100.

Additionally, while FlashAttention solves the memory bandwidth problem, KV-cache size remains a constraint during autoregressive inference. Storing keys and values for long contexts still consumes substantial memory, prompting complementary techniques like quantization (reducing precision to 8-bit or 4-bit) and sparse patterns.

Future Directions

Looking ahead, attention mechanisms will likely evolve along three axes: longer context windows, higher efficiency, and better reasoning capabilities. Research into test-time compute scaling suggests models may dynamically allocate more internal computation to challenging tokens. Meanwhile, multimodal extensions leverage cross-attention to align text with vision features, as seen in models like DALL·E 2.

With ongoing co-design efforts between software kernels and hardware architectures, we can expect future iterations of FlashAttention to support contexts exceeding one million tokens. Combined with advances in positional encoding extrapolation and block-sparse patterns, attention-based Transformers remain poised to dominate generative AI for the foreseeable future.

What is the difference between self-attention and cross-attention?

Self-attention operates within a single sequence, allowing each token to attend to other tokens in the same sequence. Cross-attention, on the other hand, involves two distinct sequences-typically an encoder output and a decoder state-where the decoder attends to the encoder’s outputs to condition its generation.

Why does standard attention scale quadratically with sequence length?

Standard attention computes pairwise interactions between every token in the sequence. For a sequence of length $n$, this results in an $n \times n$ attention matrix requiring $O(n^2)$ memory and computational operations.

Is FlashAttention mathematically equivalent to standard attention?

Yes, FlashAttention produces numerically identical results to standard softmax attention up to floating-point rounding errors. Its advantage lies in optimizing data movement rather than altering the underlying mathematics.

Can I use FlashAttention on my local GPU?

If you’re using recent versions of PyTorch or Hugging Face Transformers with compatible NVIDIA GPUs (like RTX 30xx/40xx series or A100/H100), FlashAttention is often enabled automatically or via simple configuration flags.

How does FlashAttention improve training speed?

By minimizing reads and writes to high-bandwidth memory (HBM) and maximizing use of on-chip SRAM, FlashAttention reduces memory bottlenecks. This allows compute units to operate closer to their peak performance, resulting in faster iteration times during training.

- May, 17 2026

- Collin Pace

- 0

- Permalink

Written by Collin Pace

View all posts by: Collin Pace