Tag: attention mechanisms

Attention Mechanisms in Generative AI: From Self-Attention to Flash Attention

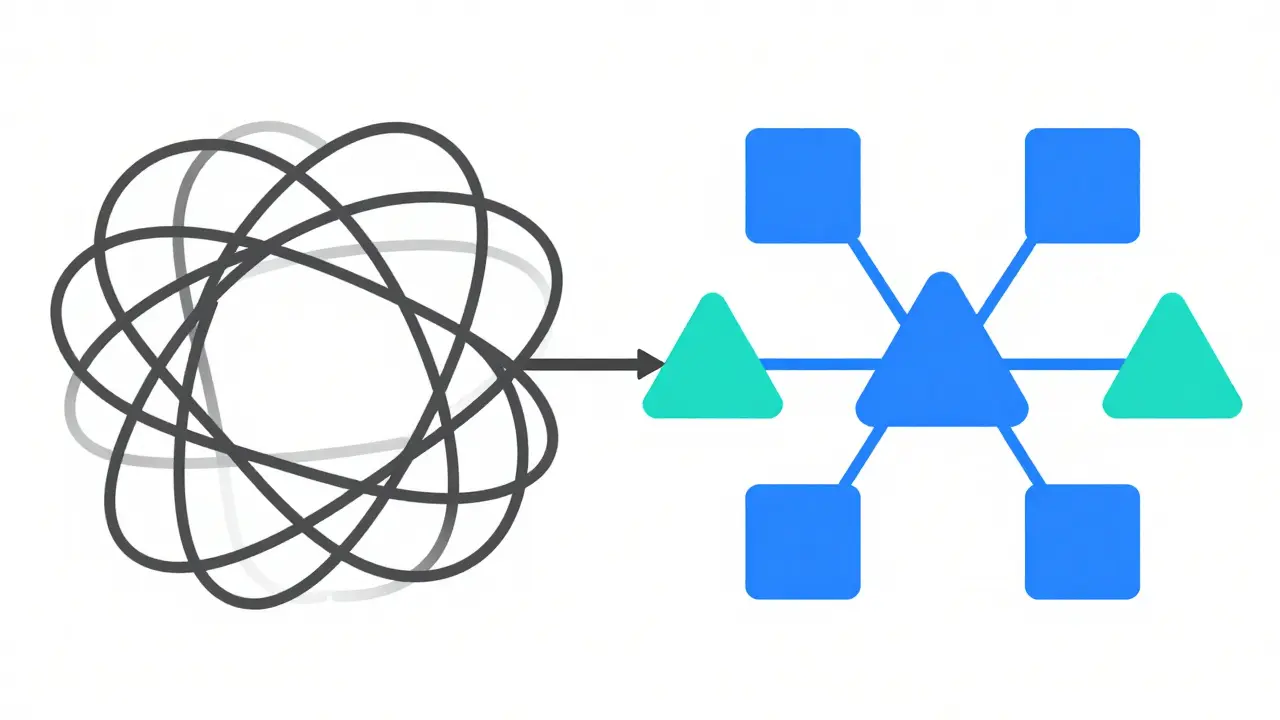

Explore how attention mechanisms power modern generative AI, from early self-attention concepts to the memory-efficient Flash Attention algorithm that enables scalable language model training.

- May 17, 2026

- Collin Pace
