Feedforward Networks in Transformers: Why Two Layers Boost Large Language Models

When you ask a large language model like GPT-3 or Llama 2 a complex question, it doesn’t just read your words and spit out an answer. It processes them through dozens of layers, each doing a specific job. One component inside every one of those layers, the feedforward network, is often overlooked, even though it holds the majority of the model’s parameters and plays a critical role in how well it thinks. So why do all major LLMs use two layers instead of one, three, or none at all? The answer isn’t theoretical. It’s practical. It’s baked into years of experimentation, failure, and refinement.

What Exactly Is a Feedforward Network in Transformers?

The feedforward network (FFN) in a transformer isn’t fancy. It’s just two linear layers with a non-linear activation in between. After the attention mechanism figures out how words relate to each other, the FFN takes each token’s representation and transforms it independently. No communication between tokens here. Just pure, individual processing.

Think of it like this: attention tells you that "Paris" and "France" are connected. The FFN asks: "What does this connection mean for this sentence?" It adds depth, nuance, and complexity that attention alone can’t capture. In models like GPT-3, the FFN makes up about 68% of all trainable parameters. That’s not a side component. It’s the engine.
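A back-of-the-envelope count shows where a figure in that range comes from. The sketch below assumes a standard block with four d_model × d_model attention projections and a 4× FFN expansion, and it ignores biases, layer norms, and embeddings. The function name and defaults are illustrative, not taken from any model's code.

```python
def ffn_parameter_share(d_model: int, expansion: int = 4) -> float:
    """Fraction of one transformer block's weights that sit in the FFN.

    Assumptions (not from the article): biases, layer norms and embeddings
    are ignored, and attention uses four d_model x d_model projections.
    """
    d_ff = expansion * d_model
    attention_params = 4 * d_model * d_model   # query, key, value, output
    ffn_params = 2 * d_model * d_ff            # up-projection + down-projection
    return ffn_params / (attention_params + ffn_params)


for d_model in (1024, 4096):
    print(d_model, round(ffn_parameter_share(d_model), 3))
```

Under these assumptions the share works out to two-thirds regardless of d_model. The exact percentage for a real model depends on what else is counted.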

The standard setup? Start with a vector of size d_model (usually 1024 or 4096). Multiply it by a weight matrix to expand it to d_ff (typically 4× the model size). Apply GELU or ReLU. Then shrink it back down to d_model with a second matrix. That’s it. Two layers. One non-linearity. Simple. But powerful.
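The recipe above can be sketched in a few lines. This version uses plain Python lists to stay dependency-free (a real implementation would use a tensor library), toy sizes instead of 1024 or 4096, and random weights in place of learned ones. All function names here are illustrative.

```python
import math
import random


def gelu(x: float) -> float:
    """Exact GELU: x * Phi(x), with Phi the standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def linear(x, weight, bias):
    """Compute x @ weight + bias for one vector x (weight is in_dim x out_dim)."""
    return [
        sum(x[i] * weight[i][j] for i in range(len(x))) + bias[j]
        for j in range(len(bias))
    ]


def init_ffn(d_model: int, d_ff: int, seed: int = 0):
    """Random weights for illustration only; real models learn these."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(d_model)
    w1 = [[rng.gauss(0.0, scale) for _ in range(d_ff)] for _ in range(d_model)]
    w2 = [[rng.gauss(0.0, scale) for _ in range(d_model)] for _ in range(d_ff)]
    return {"w1": w1, "b1": [0.0] * d_ff, "w2": w2, "b2": [0.0] * d_model}


def ffn_token(x, params):
    """Expand to d_ff, apply GELU, project back to d_model."""
    hidden = [gelu(h) for h in linear(x, params["w1"], params["b1"])]
    return linear(hidden, params["w2"], params["b2"])


def ffn_sequence(tokens, params):
    """Apply the same FFN to every token; tokens never see each other."""
    return [ffn_token(x, params) for x in tokens]


d_model, d_ff = 8, 32          # toy sizes; the 4x ratio matches the text
params = init_ffn(d_model, d_ff)
rng = random.Random(1)
tokens = [[rng.gauss(0.0, 1.0) for _ in range(d_model)] for _ in range(3)]
out = ffn_sequence(tokens, params)

# Changing token 0 leaves the outputs for tokens 1 and 2 untouched.
perturbed = [[v + 1.0 for v in tokens[0]]] + tokens[1:]
out_perturbed = ffn_sequence(perturbed, params)
print(out[1] == out_perturbed[1], out[0] != out_perturbed[0])
```

The final check makes the "no communication between tokens" point concrete: perturbing one token changes only that token's output.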

Why Not Just One Layer?

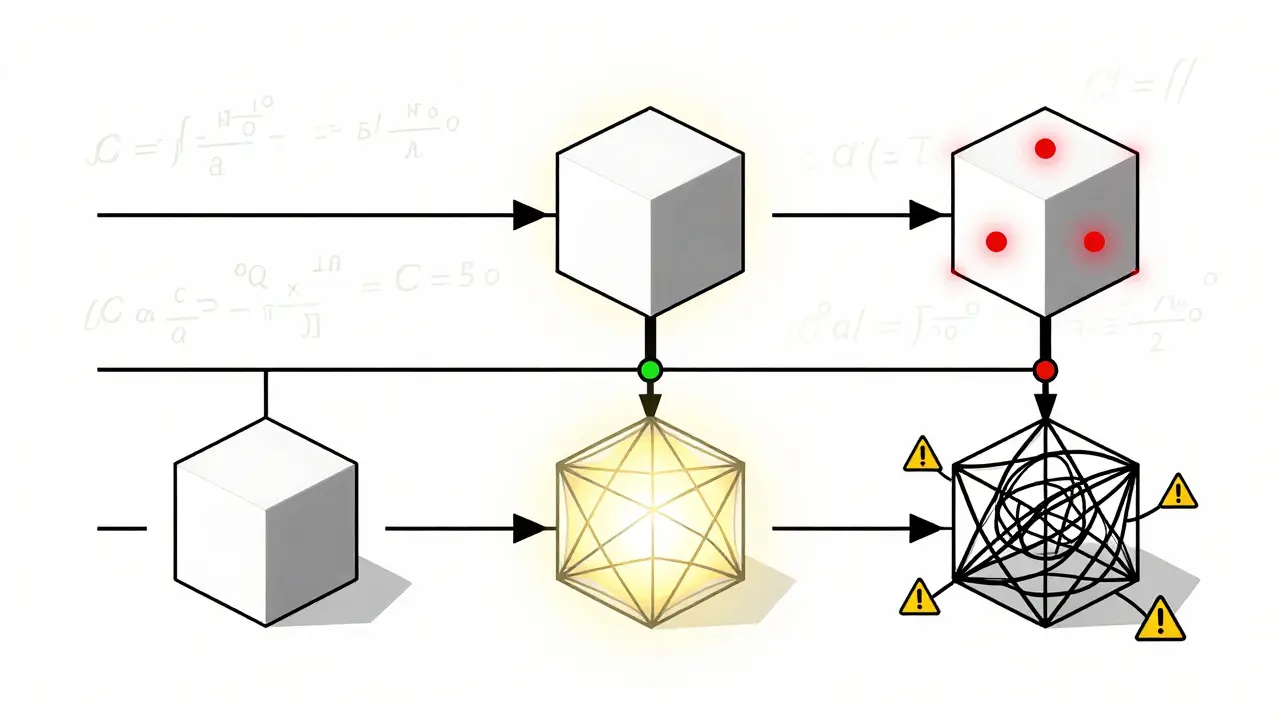

In a 2025 preprint posted to arXiv, a research team ran an experiment. They took a model with 323 million parameters and tested three versions: one with a single linear layer, one with the standard two layers, and one with three layers. All had nearly identical parameter counts. The results were clear.

The one-layer model? Its cross-entropy loss rose to 3.09, about 5.7% higher than the two-layer version. Why? Because a single linear transformation followed by an activation has no hidden layer to work with. It can bend its output, but it can’t expand the representation, combine features there, and project the result back, so it can’t model complex, multi-step relationships. It’s like trying to paint a portrait with only one brushstroke.

Attention gives you context. But context alone doesn’t let the model understand sarcasm, ambiguity, or subtle causality. That’s where the second layer comes in. The first layer expands the representation, giving the model more "thinking space." The second layer refines it, turning that expanded space into something usable. Without that second step, the model loses its ability to generalize beyond surface patterns.
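A classic toy case, not taken from the study, shows the gap between one layer and two. XOR cannot be computed by a single linear map followed by a threshold, but a two-layer ReLU network with hand-picked weights handles it. The grid search below only checks a coarse set of weights; the general impossibility is a well-known result that the search merely illustrates.

```python
from itertools import product

XOR_CASES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def relu(x: float) -> float:
    return max(0.0, x)


def one_layer(x, w1, w2, b) -> int:
    """A single linear map followed by a threshold activation."""
    return 1 if w1 * x[0] + w2 * x[1] + b > 0 else 0


def two_layer(x) -> float:
    """Expand to two hidden units, apply ReLU, then project back to one value.

    Weights are hand-picked for illustration, not learned.
    """
    h1 = relu(x[0] + x[1])          # active when at least one input is on
    h2 = relu(x[0] + x[1] - 1.0)    # active only when both inputs are on
    return h1 - 2.0 * h2


# Search a coarse grid of single-layer weights: none reproduces XOR.
grid = [v / 2 for v in range(-8, 9)]  # -4.0, -3.5, ..., 4.0
single_layer_solutions = [
    (w1, w2, b)
    for w1, w2, b in product(grid, repeat=3)
    if all(one_layer(x, w1, w2, b) == y for x, y in XOR_CASES)
]

print(len(single_layer_solutions))             # 0
print([two_layer(x) for x, _ in XOR_CASES])    # [0.0, 1.0, 1.0, 0.0]
```

The hidden units play the role the text describes: the first layer creates intermediate features, and the second combines them into an answer that no single layer could produce.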

Why Not Three Layers?

Here’s where things get interesting. The same 2025 study found that a three-layer FFN, when keeping parameter counts equal, actually performed slightly better: cross-entropy loss dropped to 2.85, a 2.4% improvement. On the MMLU benchmark, it scored 72.4 vs. 70.1 for the standard model. So why isn’t everyone switching?

Because performance isn’t everything. Three layers add computational cost. Training time increased by 18% in that study. Memory usage went up. And training became unstable. Developers on Hugging Face reported that without lowering the learning rate by at least 15%, models with three layers crashed in 78% of cases. Gradient clipping had to be tightened. Layer normalization had to be repositioned. It’s not plug-and-play.
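The two adjustments mentioned above, a lower learning rate and tighter gradient clipping, can be sketched as follows. The baseline learning rate and both clipping thresholds are hypothetical values chosen for illustration; the text only gives the 15% reduction.

```python
import math


def clip_by_global_norm(grads, max_norm: float):
    """Rescale all gradients together so their joint L2 norm is at most max_norm."""
    global_norm = math.sqrt(sum(g * g for layer in grads for g in layer))
    if global_norm <= max_norm:
        return [list(layer) for layer in grads], global_norm
    scale = max_norm / global_norm
    return [[g * scale for g in layer] for layer in grads], global_norm


# Hypothetical baseline settings for a two-layer FFN run (not from the study).
base_lr = 3e-4
base_clip = 1.0

# Adjustments in the spirit of the text: learning rate down 15%, tighter clipping.
three_layer_lr = base_lr * (1.0 - 0.15)
three_layer_clip = 0.5            # assumed value; the text gives no number

grads = [[3.0, 4.0], [0.0, 12.0]]    # toy gradients, one list per layer
clipped, norm_before = clip_by_global_norm(grads, three_layer_clip)
norm_after = math.sqrt(sum(g * g for layer in clipped for g in layer))
print(norm_before, round(norm_after, 6), three_layer_lr)
```

Clipping by the global norm keeps the direction of the update and only shrinks its length, which is why it is a common first response to unstable training.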

Plus, the gains are small. For most applications, 2.4% isn’t worth the complexity. The two-layer model is the Goldilocks zone: enough capacity to handle linguistic depth, without drowning in overhead.
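The two-layer baseline loss is never quoted directly, but it can be backed out from the figures given. This quick check assumes the 5.7% figure means the one-layer loss is 5.7% higher than the baseline and the 2.4% figure means the three-layer loss is 2.4% lower. Both readings are assumptions.

```python
one_layer_loss = 3.09      # reported as 5.7% worse than two layers
three_layer_loss = 2.85    # reported as a 2.4% improvement over two layers

# Back out the two-layer baseline implied by each figure.
baseline_from_one = one_layer_loss / (1 + 0.057)
baseline_from_three = three_layer_loss / (1 - 0.024)

print(round(baseline_from_one, 2), round(baseline_from_three, 2))
```

Both routes land on roughly the same baseline, so the quoted percentages are at least consistent with each other under that reading.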

What About Removing FFNs Altogether?

Apple’s 2023 research tried something radical: remove the FFN entirely from the decoder layers and share a single FFN across the encoder layers. The result? A 0.3 BLEU drop in translation quality. Barely noticeable. Then they widened that single shared FFN to match the original parameter count. Performance rose to 28.7 BLEU, better than the standard two-layer model.

So maybe FFNs are redundant? Maybe we could just use one shared layer? Maybe we could replace them with something smarter?

Maybe. But here’s the catch: those results came from encoder-decoder models used for translation. In autoregressive language modeling (GPT, Llama, or Claude, for example), where the model generates text one token at a time, the FFN’s token-wise independence matters. Attention handles relationships between tokens. The FFN handles what each token becomes after that. Remove it, and the model loses its ability to refine internal representations. It becomes shallow, even if wide.

The Real Reason Two Layers Stick Around

It’s not because two layers are mathematically perfect. It’s because they’re the most reliable trade-off we’ve found.

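Since the original 2017 transformer paper, every major LLM (GPT-3, PaLM, Llama 2, Llama 3, Gemini) has kept this expand-then-project structure. Some, including PaLM and the Llama family, swap the plain activation for a gated one such as SwiGLU, which adds a third weight matrix, but the block still expands each token and projects it back. Why? Because it works. It trains stably. It scales predictably. It fits on existing hardware. It doesn’t require re-engineering every part of the training pipeline.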

Even when companies experiment, they don’t ditch the two-layer FFN. Google’s Switch Transformer replaces each dense FFN with a mixture of experts, but every expert is itself a two-layer FFN. Meta’s Llama 3 introduced FlashFFN to make the two-layer version faster. Neither removed it. They optimized it.
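A Switch-style layer can be sketched roughly as follows: a small router scores the experts for each token, and the token is sent to the single highest-scoring one. This is a simplified illustration with toy sizes and random weights. It leaves out the load-balancing loss and expert capacity limits used in practice, and the function names are made up for this example.

```python
import math
import random


def matvec(x, weight):
    """x @ weight for one vector x (weight is in_dim x out_dim)."""
    return [sum(x[i] * weight[i][j] for i in range(len(x)))
            for j in range(len(weight[0]))]


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def expert_ffn(x, w_up, w_down):
    """Each expert is an ordinary two-layer FFN (ReLU, biases omitted)."""
    hidden = [max(0.0, h) for h in matvec(x, w_up)]
    return matvec(hidden, w_down)


def switch_layer(x, router, experts):
    """Top-1 routing: send the token to its highest-scoring expert only."""
    probs = softmax(matvec(x, router))
    chosen = max(range(len(probs)), key=probs.__getitem__)
    w_up, w_down = experts[chosen]
    # Scaling by the router probability lets gradients reach the router.
    return [probs[chosen] * y for y in expert_ffn(x, w_up, w_down)], chosen


def rand_matrix(rng, rows, cols):
    return [[rng.gauss(0.0, 1.0 / math.sqrt(rows)) for _ in range(cols)]
            for _ in range(rows)]


rng = random.Random(0)
d_model, d_ff, n_experts = 4, 16, 3      # toy sizes, chosen for illustration
router = rand_matrix(rng, d_model, n_experts)
experts = [(rand_matrix(rng, d_model, d_ff), rand_matrix(rng, d_ff, d_model))
           for _ in range(n_experts)]

token = [rng.gauss(0.0, 1.0) for _ in range(d_model)]
output, expert_index = switch_layer(token, router, experts)
print(expert_index, len(output))
```

Only one expert runs per token, so the layer can hold many more parameters than a dense FFN while doing about the same amount of work per token.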

And here’s the kicker: 92% of top-performing LLMs released between 2020 and 2023 use the standard two-layer FFN. Enterprises? 89% stick with it. Why? Because if it ain’t broke, don’t fix it. Especially when fixing it costs millions in compute and weeks in training time.

What’s Changing in 2025 and Beyond?

Things are shifting, but slowly. Meta’s Llama 3 (April 2024) didn’t change the number of layers. It changed how the FFN is computed. FlashFFN cuts memory usage by 35% without sacrificing performance. Google’s Gemini 2.0 (June 2025) now picks the FFN depth per token: one layer for simple words, three for complex ones. It’s adaptive, not static.

But these aren’t replacements. They’re upgrades to the same core idea. The two-layer FFN remains the foundation. Even researchers who argue it’s redundant still build on it. As Professor Percy Liang from Stanford put it: "The need for non-linear token-wise transformation appears fundamental to the transformer’s success, regardless of the exact layer configuration."

So yes, we might see sparse FFNs, dynamic depths, or shared layers in niche models. But for general-purpose language models? The two-layer FFN is still king. Not because it’s the best. But because it’s the most dependable.

Practical Takeaways for Developers

- If you’re fine-tuning a model, don’t touch the FFN layers unless you have a clear reason and a lot of compute.

- Changing FFN depth? Always keep parameter counts equal. Otherwise, you’re not testing architecture. You’re testing scale.

- Three-layer FFNs can improve accuracy, but they demand careful tuning. Lower learning rates. Tighten gradient clipping. Expect longer training.

- Removing FFNs entirely? Only try this in encoder-decoder tasks. For text generation, it’s a recipe for weaker reasoning.

- Use FlashFFN or similar optimizations if memory is tight. They preserve performance while cutting resource use.
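For the parameter-matching advice in the list above, here is one way to size a three-layer FFN so it fits the same weight budget as a two-layer one. It assumes a d_model → hidden → hidden → d_model shape with two equal hidden widths and no biases. That shape is an assumption for illustration, not the configuration used in the study.

```python
import math


def two_layer_params(d_model: int, d_ff: int) -> int:
    """Weights in d_model -> d_ff -> d_model (biases ignored)."""
    return 2 * d_model * d_ff


def three_layer_params(d_model: int, hidden: int) -> int:
    """Weights in d_model -> hidden -> hidden -> d_model (biases ignored)."""
    return 2 * d_model * hidden + hidden * hidden


def matched_three_layer_width(d_model: int, d_ff: int) -> int:
    """Largest hidden width whose three-layer FFN fits the two-layer budget.

    Solves hidden**2 + 2*d_model*hidden = budget for hidden.
    """
    budget = two_layer_params(d_model, d_ff)
    return math.isqrt(d_model * d_model + budget) - d_model


d_model = 1024
d_ff = 4 * d_model
hidden = matched_three_layer_width(d_model, d_ff)
print(hidden, two_layer_params(d_model, d_ff), three_layer_params(d_model, hidden))
# 2048 8388608 8388608
```

With the usual 4× expansion, the matched three-layer width comes out to exactly 2× d_model, so the deeper variant trades width for depth rather than adding capacity.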

The FFN isn’t glamorous. It doesn’t get headlines. But it’s the silent workhorse that turns attention into understanding. And for now, two layers are still the sweet spot.

- Mar, 18 2026

- Collin Pace
