Sliding Windows and Memory Tokens: Extending LLM Attention

Imagine trying to read a 500-page novel while only remembering the last three sentences. You would miss plot twists, forget character names, and lose the thread of the argument entirely. For years, this was the reality for Large Language Models (LLMs). They were brilliant at short conversations but struggled with long documents because their "attention" (the mechanism that connects words across a text) was computationally expensive to maintain over long distances.

The standard way transformers work is by calculating relationships between every pair of tokens in a sequence. If you have a document with 1,000 words, the model checks how each word relates to the other 999. This creates a calculation load that grows quadratically as the text gets longer: double the length and the work roughly quadruples. To solve this, researchers developed Attention Window Extensions. These are architectural tweaks that allow models to process much longer texts without crashing your GPU or taking hours to generate a response. Two of the most prominent methods are Sliding Window Attention and Memory Tokens.

Why Standard Attention Fails at Scale

To understand why we need extensions, we first need to look at the bottleneck. In a standard transformer, the attention mechanism uses what we call Self-Attention. Every token (a chunk of text, usually a word or sub-word) generates three vectors: a query, a key, and a value. The query asks, "What am I looking for?" The key says, "Here is what I contain." The value provides the actual information.
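The query, key, and value roles described above can be sketched in a few lines of NumPy. This is a minimal single-head illustration, not any particular model's implementation: the projection matrices `w_q`, `w_k`, `w_v` are random stand-ins for learned weights, and the sizes are arbitrary.

```python
import numpy as np

def softmax(scores):
    # Subtract the row maximum first so exp() cannot overflow.
    shifted = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(shifted)
    return weights / weights.sum(axis=-1, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head self-attention over x with shape (n_tokens, d_model)."""
    q = x @ w_q  # queries: "what am I looking for?"
    k = x @ w_k  # keys: "here is what I contain"
    v = x @ w_v  # values: the information that gets mixed together
    # One score per (query, key) pair, so this matrix is n_tokens x n_tokens.
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores)
    return weights @ v, weights

# Random stand-ins for token embeddings and learned projections (assumptions).
rng = np.random.default_rng(0)
n_tokens, d_model = 6, 8
x = rng.normal(size=(n_tokens, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)  # (6, 8) (6, 6)
```

The `weights` matrix has one row per token and one column per token, which is where the cost discussed next comes from.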

When the sequence length is small, this works fine. But when you feed an entire legal contract or a full software codebase into the model, the number of calculations becomes prohibitive. The computational cost scales quadratically ($O(N^2)$), meaning if you double the input length, the processing time roughly quadruples. This isn't just slow; it often exceeds the memory limits of standard hardware. Engineers needed a way to keep the model's understanding global without paying the quadratic price tag.
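To make the quadratic growth concrete, here is a small back-of-the-envelope calculation. The helper names are hypothetical, and the memory figure assumes the full score matrix is stored in a 16-bit format for a single head in a single layer. Fused attention kernels avoid storing that matrix, but the number of score computations still grows the same way.

```python
def attention_score_count(n_tokens: int) -> int:
    """Number of query-key scores computed by full self-attention."""
    return n_tokens * n_tokens

def score_matrix_bytes(n_tokens: int, bytes_per_score: int = 2) -> int:
    """Bytes needed to hold the full score matrix (assumes 16-bit scores)."""
    return attention_score_count(n_tokens) * bytes_per_score

for n in (1_000, 2_000, 4_000):
    print(n, attention_score_count(n))
# 1000 1000000
# 2000 4000000
# 4000 16000000

# A 32,768-token input, one head, one layer, scores fully materialized:
print(score_matrix_bytes(32_768) / 2**30)  # 2.0 (GiB)
```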

How Sliding Window Attention Works

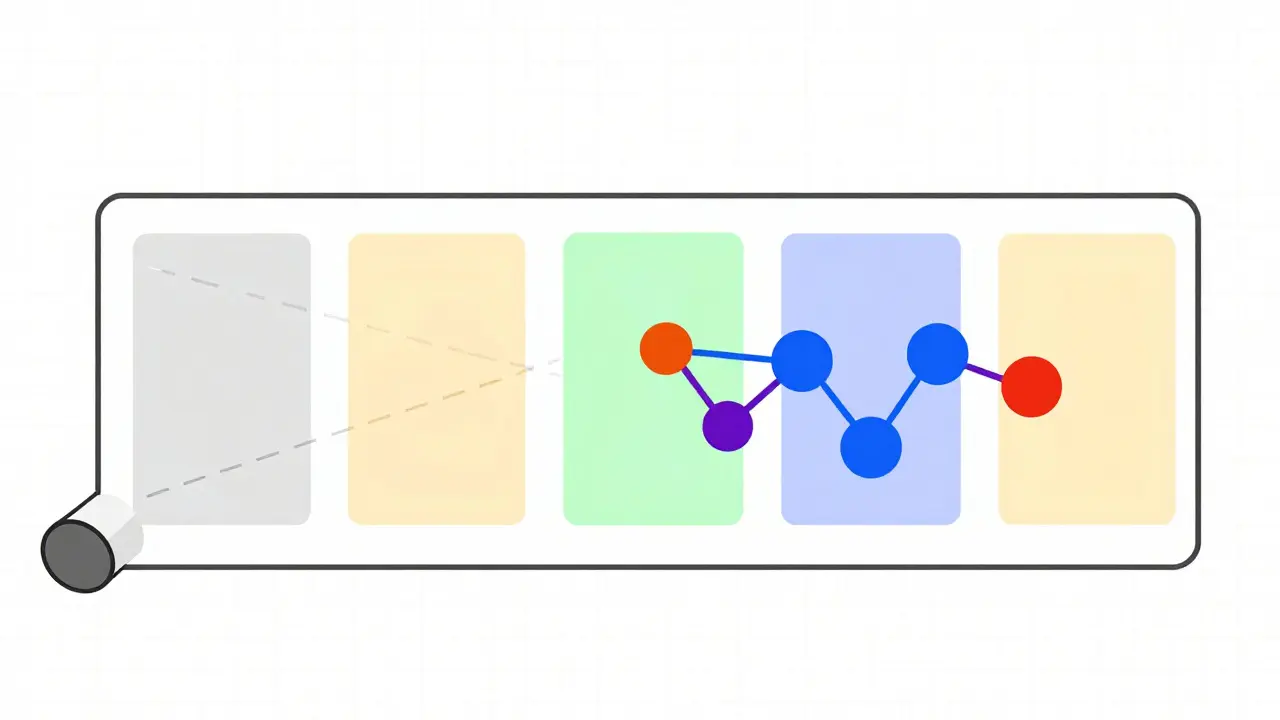

Sliding Window Attention (SWA) is one of the most effective solutions for this problem. Instead of letting every word see every other word, SWA restricts each token to attending only to its immediate neighbors within a fixed "window." Think of it like reading through a tube: you can only see the words directly around the current one.

This approach reduces the computational complexity from quadratic, $O(N^2)$, to $O(Nw)$, where $N$ is the total sequence length and $w$ is the window size. Because $w$ is fixed, the cost grows only linearly with $N$, so doubling the input roughly doubles the work instead of quadrupling it. However, there is a catch: if the window is too small, the model loses the broader context. It might understand the sentence perfectly but miss the paragraph's main point.
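The windowing rule can be sketched as a boolean mask over the attention scores. The sketch below assumes a causal window (each token sees itself and the `window - 1` tokens before it), which is common in decoder-only LLMs, and also offers a symmetric variant. The function names are illustrative. For clarity it still builds the full score matrix and masks it; an efficient implementation would compute only the entries inside the band.

```python
import numpy as np

def sliding_window_mask(n_tokens, window, causal=True):
    """Boolean (n_tokens, n_tokens) mask: True where a query may see a key."""
    idx = np.arange(n_tokens)
    offset = idx[:, None] - idx[None, :]  # query position minus key position
    if causal:
        # Each token sees itself and the previous window - 1 tokens.
        return (offset >= 0) & (offset < window)
    # Symmetric variant: window // 2 neighbors on each side.
    return np.abs(offset) <= window // 2

def windowed_attention(q, k, v, mask):
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = np.where(mask, scores, -np.inf)  # masked pairs get zero weight
    scores -= scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v

mask = sliding_window_mask(n_tokens=8, window=3)
print(mask.sum(), 8 * 8)  # 21 64

rng = np.random.default_rng(0)
q, k, v = (rng.normal(size=(8, 4)) for _ in range(3))
out = windowed_attention(q, k, v, mask)
print(out.shape)  # (8, 4)
```

Only 21 of the 64 possible query-key pairs survive the mask here, and the gap widens as the sequence grows while the window stays fixed.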

To fix this, modern implementations use overlapping windows. As the model processes the text, the window slides forward, but it retains some overlap with the previous segment. This ensures that connections aren't abruptly cut off. Recent research suggests that training models with variable-length sliding windows during pre-training significantly improves performance. Models trained this way can handle sequences much longer than their fixed window size, effectively learning to bridge gaps between local contexts.

The Role of Memory Tokens

While sliding windows handle local efficiency, they struggle with global coherence. This is where Memory Tokens come in. Memory tokens act as a summary buffer or a "scratchpad" for the model. They are special tokens that are not part of the original input text but are updated dynamically as the model processes the sequence.

As the model reads through a long document using sliding windows, it compresses important information into these memory tokens. Later, when generating a response or continuing the text, the model attends to these memory tokens alongside the current local window. This allows the model to retain high-level themes, character traits, or logical arguments from hundreds of pages ago, even though those specific words are no longer in the active attention window.

This hybrid approach combines the speed of local attention with the recall power of global memory. It mimics human reading: we focus on the sentence we are currently reading (local window) but hold the main idea of the chapter in our mind (memory tokens).
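A toy version of this read-and-write loop is sketched below. It is a deliberately simplified illustration under several assumptions: the memory slots start from random vectors rather than learned ones, there are no learned projections, and the "write" step simply lets each memory slot attend over the old memory plus the current segment. Real memory-token architectures differ in how they update and train the memory. The names `attend` and `process_with_memory` are hypothetical.

```python
import numpy as np

def attend(queries, keys, values):
    """Plain scaled dot-product attention (no learned projections)."""
    scores = queries @ keys.T / np.sqrt(queries.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ values

def process_with_memory(tokens, segment_len, memory):
    """Read the sequence segment by segment, carrying a small memory along."""
    outputs = []
    for start in range(0, len(tokens), segment_len):
        segment = tokens[start:start + segment_len]
        # Read step: local tokens attend to memory slots plus their own segment.
        context = np.concatenate([memory, segment], axis=0)
        outputs.append(attend(segment, context, context))
        # Write step (an assumption of this sketch): each memory slot is
        # refreshed by attending over the old memory and the current segment.
        memory = attend(memory, context, context)
    return np.concatenate(outputs, axis=0), memory

rng = np.random.default_rng(0)
tokens = rng.normal(size=(16, 8))         # stand-in token embeddings
initial_memory = rng.normal(size=(4, 8))  # stand-in for learned memory slots

out, final_memory = process_with_memory(tokens, segment_len=4, memory=initial_memory)
print(out.shape, final_memory.shape)  # (16, 8) (4, 8)
```

Each segment attends to only `segment_len + 4` keys regardless of how long the document is. The only path by which an early segment can influence a later one is through the memory slots.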

Other Advanced Extension Techniques

Sliding windows and memory tokens are not the only tools in the box. Researchers have developed several other sophisticated methods to extend attention capabilities:

- Large Window Attention (LWA): This method extracts a larger context patch for each query window and then averages it down to a manageable size before performing attention. It allows the model to see further than a standard window without the full cost of global attention.

- Varied-Size Window Attention (VSA): Instead of a fixed window, VSA dynamically adjusts the window size based on the content. If a section is dense with complex logic, the window expands. If it's simple narrative, it shrinks. This data-driven adaptation ensures computation is spent where it matters most.

- Top-K Window Attention: This technique identifies the K most relevant context windows and focuses computation only on them. It ignores irrelevant sections entirely, drastically reducing unnecessary calculations.

- Frequency Domain Filtering: By using Fast Fourier Transform (FFT) operations, some models filter global context in the frequency domain. This achieves genuine global awareness without the quadratic scaling penalty of traditional attention.
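As one illustration from the list above, frequency-domain filtering can be sketched with NumPy's FFT routines. This is a hedged toy example: it applies a fixed low-pass filter along the sequence axis, whereas a trained model would more plausibly learn its filter. The function name and the `keep_fraction` parameter are hypothetical.

```python
import numpy as np

def fft_lowpass_mix(x, keep_fraction=0.25):
    """Mix information across all tokens by filtering along the sequence axis.

    x has shape (n_tokens, d_model). The fixed low-pass filter here is a
    stand-in (assumption) for a filter that would normally be learned.
    """
    n_tokens = x.shape[0]
    spectrum = np.fft.rfft(x, axis=0)  # to the frequency domain
    n_keep = max(1, int(spectrum.shape[0] * keep_fraction))
    filt = np.zeros((spectrum.shape[0], 1))
    filt[:n_keep] = 1.0                # keep only the lowest frequencies
    # Back to the token domain; n=n_tokens restores the original length.
    return np.fft.irfft(spectrum * filt, n=n_tokens, axis=0)

x = np.ones((16, 4))
print(np.allclose(fft_lowpass_mix(x), x))  # True
```

The FFT costs $O(N \log N)$ along the sequence, and every frequency component is computed from all tokens, which is how this family of methods gets global mixing without an $N \times N$ score matrix.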

Practical Implications for Developers

If you are building applications that rely on long-context understanding, choosing the right attention mechanism matters. Here is a breakdown of when to use which approach:

| Mechanism | Best Use Case | Computational Cost | Context Retention |
|---|---|---|---|
| Standard Self-Attention | Short texts, high precision needs | High ($O(N^2)$) | Global (Perfect) |
| Sliding Window (SWA) | Long documents, real-time streaming | Low ($O(Nw)$) | Local (Limited) |
| Memory Tokens | Summarization, long-term consistency | Medium | Global (Compressed) |
| Varied-Size (VSA) | Complex reasoning, mixed density texts | Variable | Adaptive |

For example, if you are building a chatbot that needs to remember user preferences from weeks ago, memory tokens are essential. If you are processing a live news feed where only the last few minutes matter, sliding window attention is more efficient. Many modern production systems use a combination of both, layering sliding windows for immediate processing and memory tokens for long-term state management.

Training Considerations

Implementing these mechanisms is not just about changing the inference code; it requires careful training strategies. Research indicates that optimal performance is achieved when the training sequence length is approximately four times the training window size. This exposes the model to longer token sequences during pre-training, allowing it to learn how to bridge gaps between windows effectively.

Additionally, model performance varies with the window size used at evaluation time. A model trained with a 1,024-token window might perform poorly if evaluated with a 32,768-token window unless it has been specifically fine-tuned for long-context tasks. Positional embeddings also play a critical role. Because a sliding window only ever covers a slice of the sequence, models typically rely on relative positional encoding or learned absolute positions so that the distance between tokens stays meaningful, even when those tokens are far apart in the original sequence.
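One common way to express relative positions is to index a small bias table by the clipped offset between query and key. The sketch below assumes that scheme. The function name is illustrative, and the bias values are placeholders for parameters that would be learned.

```python
import numpy as np

def relative_position_index(n_tokens, max_distance):
    """Map each (query, key) pair to a bucket based on their clipped offset."""
    positions = np.arange(n_tokens)
    offset = positions[None, :] - positions[:, None]  # key minus query position
    # Offsets beyond max_distance share the outermost bucket on each side.
    return np.clip(offset, -max_distance, max_distance) + max_distance

index = relative_position_index(n_tokens=6, max_distance=2)
print(index[0].tolist())  # [2, 3, 4, 4, 4, 4]
print(index[3].tolist())  # [0, 0, 1, 2, 3, 4]

# Placeholder bias values (assumption); a real model would learn these.
bias_table = np.linspace(-1.0, 1.0, 2 * 2 + 1)
bias = bias_table[index]  # shape (6, 6), added to the attention scores
```

Because the index depends only on the offset between two tokens, the same bias applies wherever the window sits in the document.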

Future Directions

The landscape of attention window extensions is evolving rapidly. We are seeing a move toward more dynamic and adaptive systems. Future models will likely integrate quantization and distillation techniques with these attention mechanisms to further reduce costs. Multimodal architectures, which process text, images, and audio simultaneously, are also adopting these principles. For instance, vision transformers are using cyclic shifting of multi-scale windows to improve tracking accuracy and robustness to object boundaries.

As hardware continues to improve, the pressure to optimize attention mechanisms may decrease slightly, but the demand for longer contexts will only grow. Users expect AI to read entire books, analyze full year-end financial reports, and debug massive codebases in a single pass. Sliding windows and memory tokens provide the foundational architecture to make this possible without requiring supercomputers for every query.

What is the difference between Sliding Window Attention and standard Self-Attention?

Standard Self-Attention calculates relationships between every token in a sequence, leading to high computational costs ($O(N^2)$). Sliding Window Attention restricts each token to attend only to its immediate neighbors within a fixed window size, reducing complexity to linear ($O(Nw)$). This makes it much faster for long sequences but limits the model's ability to see distant context unless combined with other techniques.

How do Memory Tokens help with long-context understanding?

Memory tokens act as a compressed summary buffer. As the model processes text, it updates these tokens with key information. During generation, the model attends to these memory tokens along with the current local window. This allows the model to retain high-level context from earlier in the document, even if those specific words are no longer in the active attention window.

Which attention mechanism is best for real-time applications?

Sliding Window Attention is generally best for real-time applications like live transcription or streaming chat because of its low latency and linear computational cost. It processes tokens as they arrive without needing to wait for the entire sequence to be available, making it highly efficient for interactive systems.

Can Sliding Window Attention handle very long documents?

Yes, but with limitations. On its own, sliding windows may lose global context. To handle very long documents effectively, it is often combined with memory tokens or hierarchical attention mechanisms. Training with variable-length windows also helps the model learn to bridge gaps between local segments, improving performance on long-range dependencies.

What is Varied-Size Window Attention (VSA)?

Varied-Size Window Attention (VSA) is a dynamic approach where the model learns to adjust the size and location of the attention window for each head and token. This allows the model to expand its focus for complex, information-dense sections and shrink it for simpler parts, optimizing computational resources based on content relevance.

- May 1, 2026

- Collin Pace

- Tags:

- sliding window attention

- memory tokens

- large language models

- transformer architecture

- context window
