Tag: AI efficiency

Scaling Behavior Across Tasks: How Bigger LLMs Actually Improve Performance

Larger LLMs improve performance predictably, but not uniformly. Scaling boosts efficiency, reasoning, and few-shot learning, but the gains diminish beyond certain model sizes. Task complexity, data quality, and inference strategies matter as much as model size.

- Mar 17, 2026

- Collin Pace

- 7

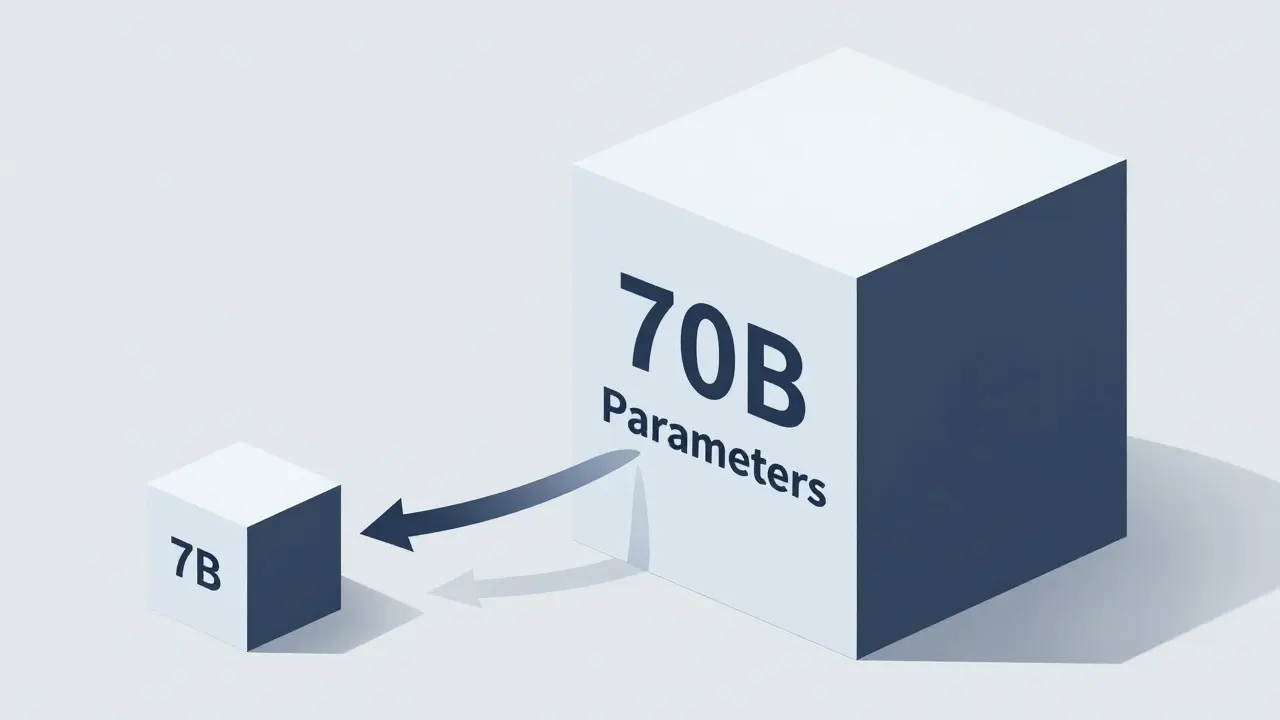

When to Compress vs When to Switch Models in Large Language Model Systems

Learn when to compress a large language model and when to switch to a smaller one. Discover the practical trade-offs in cost, accuracy, and hardware that shape real-world AI deployments.

- Mar 2, 2026

- Collin Pace

- 9