Category: Cybersecurity

Security Vulnerabilities and Risk Management in AI-Generated Code

AI-generated code is now common in software development, but it introduces serious security risks such as SQL injection, hardcoded secrets, and cross-site scripting (XSS). Learn how to detect and prevent these vulnerabilities with automated tools, code reviews, and policy changes.

- Feb 20, 2026

- Collin Pace

- 7

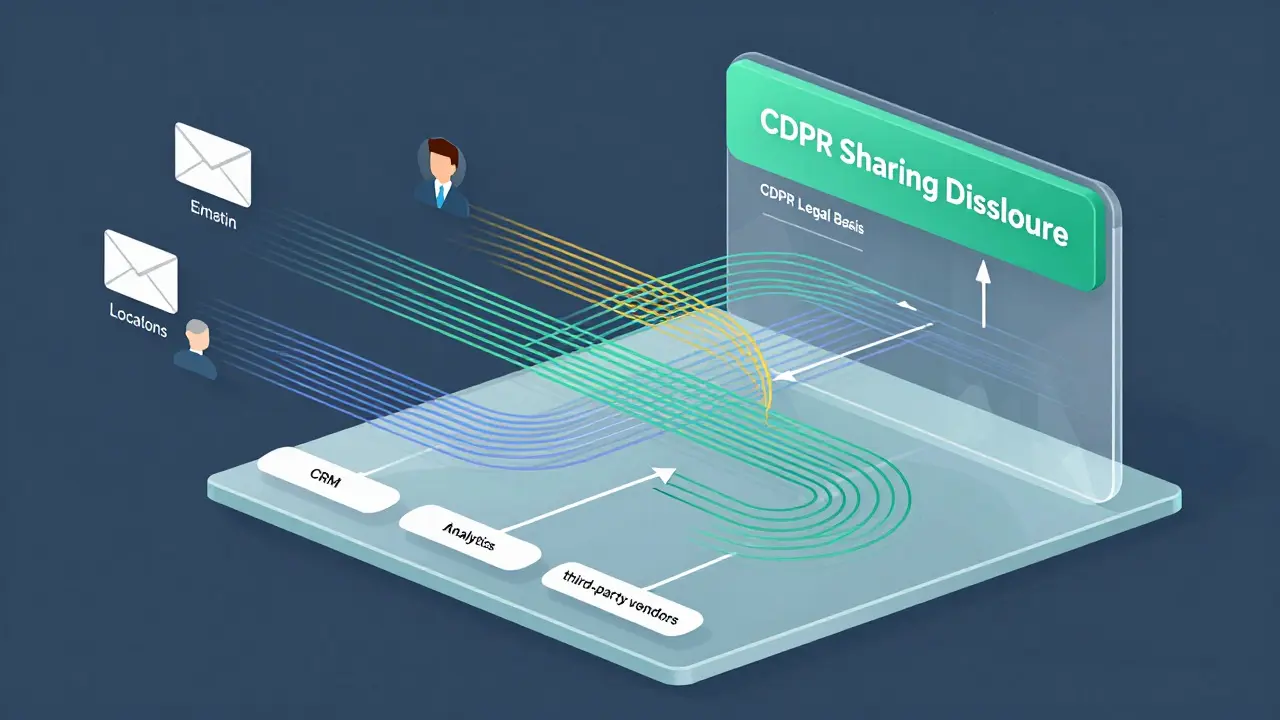

GDPR and CCPA Compliance in Vibe-Coded Systems: Data Mapping and Consent Flows

GDPR and CCPA require detailed data mapping and consent management to avoid fines and ensure compliance. Learn how to build systems that track data flows, document legal bases, and honor user rights without relying on guesswork.

- Feb 17, 2026

- Collin Pace

- 7

Supply Chain Security for LLM Deployments: Securing Containers, Weights, and Dependencies

LLM supply chain security is critical but often ignored. Learn how to secure containers, model weights, and dependencies to prevent breaches before they happen.

- Jan 16, 2026

- Collin Pace

- 10

Input Validation for LLM Applications: How to Sanitize Natural Language Inputs to Prevent Prompt Injection Attacks

Learn how to prevent prompt injection attacks in LLM applications by implementing layered input validation and sanitization techniques. Essential security practices for chatbots, agents, and AI tools handling user input.

- Jan 2, 2026

- Collin Pace

- 9

How to Reduce Memory Footprint for Hosting Multiple Large Language Models

Learn how to reduce memory footprint when hosting multiple large language models using quantization, model parallelism, and hybrid techniques. Cut costs by 65% and run 3-5 models on a single GPU.

- Nov 29, 2025

- Collin Pace

- 7

Security KPIs for Measuring Risk in Large Language Model Programs

Learn the essential security KPIs for measuring risk in large language model programs. Track detection, response, and resilience metrics to prevent prompt injection, data leaks, and model manipulation in production AI systems.

- Aug 23, 2025

- Collin Pace

- 5