Tag: large language models

How Context Length Affects Output Quality in Large Language Model Generation

A longer context doesn't guarantee better output from a large language model. Beyond a certain point, longer inputs can hurt accuracy because of attention dilution and the 'Lost in the Middle' effect. Learn how to optimize context for real-world performance.

- Mar 21, 2026

- Collin Pace

- 3


Feedforward Networks in Transformers: Why Two Layers Boost Large Language Models

Feedforward networks in transformers are the hidden force behind large language models. Despite their simplicity, the two-layer design powers GPT-3, Llama, and Gemini by balancing depth, efficiency, and stability. Here’s why no one has replaced it.

- Mar 18, 2026

- Collin Pace

- 5


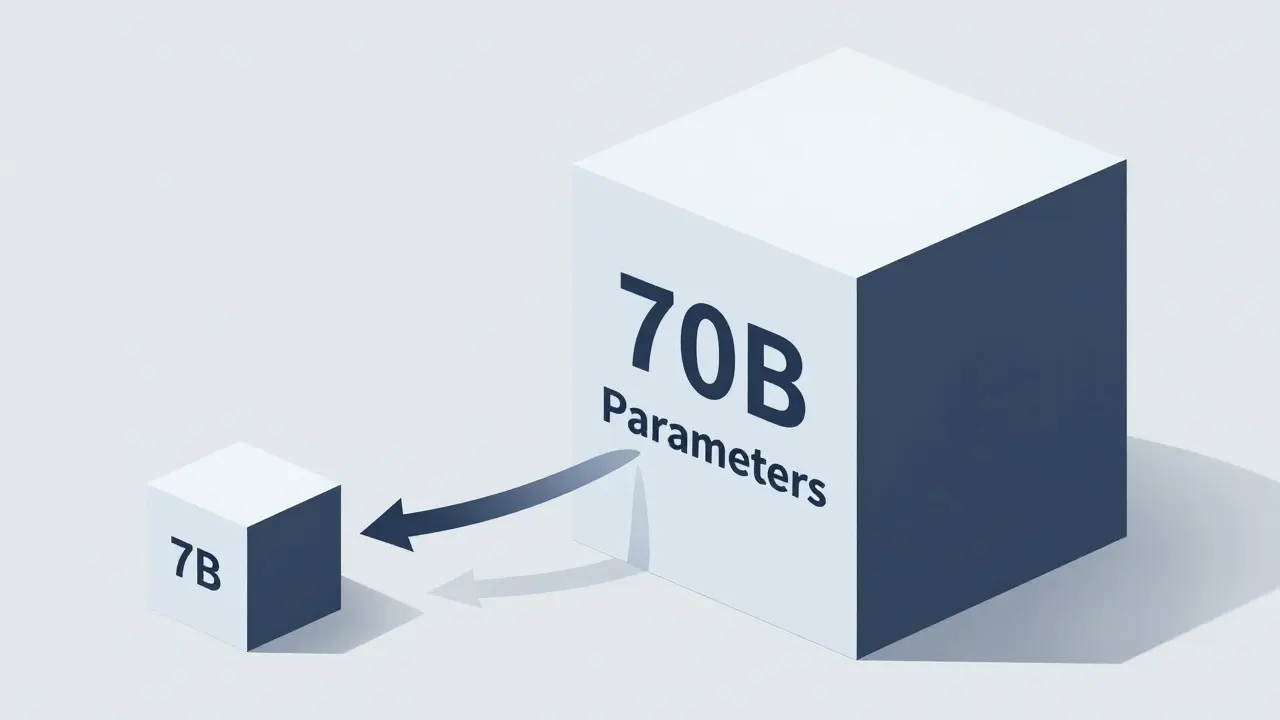

Scaling Behavior Across Tasks: How Bigger LLMs Actually Improve Performance

Larger LLMs improve performance predictably, but not uniformly. Scaling boosts efficiency, reasoning, and few-shot learning, but gains fade beyond certain sizes. Task complexity, data quality, and inference strategies matter as much as model size.

- Mar 17, 2026

- Collin Pace

- 7


How Context Windows Work in Large Language Models and Why They Limit Long Documents

Context windows limit how much text large language models can process at once, affecting document analysis, coding, and long conversations. Learn how they work, why they're a bottleneck, and how to work around them.

- Feb 23, 2026

- Collin Pace

- 0


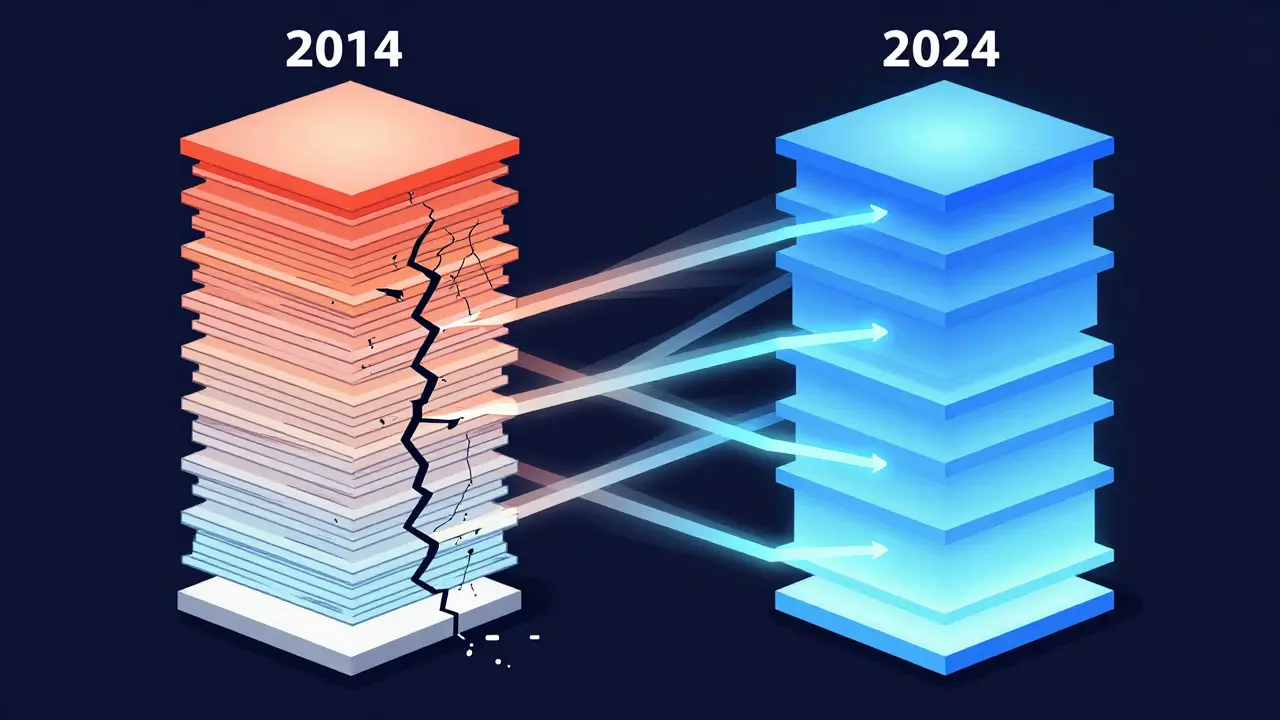

Transformer Pre-Norm vs Post-Norm Architectures: Which One Powers Modern LLMs?

Pre-Norm and Post-Norm are two ways to place layer normalization in Transformers. Pre-Norm powers most modern LLMs because it trains stably at 100+ layers. Post-Norm works for small models but becomes hard to train stably at scale.

- Oct 20, 2025

- Collin Pace

- 6


Contextual Representations in Large Language Models: How LLMs Understand Meaning

Contextual representations let LLMs understand words based on their surroundings, not fixed meanings. From attention mechanisms to context windows, here’s how models like GPT-4 and Claude 3 make sense of language, and where they still fall short.

- Sep 16, 2025

- Collin Pace

- 0


How to Use Large Language Models for Marketing, Ads, and SEO

Learn how to use large language models for marketing, ads, and SEO without falling into common traps like hallucinations or lost brand voice. Real strategies, real results.

- Sep 5, 2025

- Collin Pace

- 8
