Category: Artificial Intelligence - Page 3

Chain-of-Thought Prompting in Generative AI: Master Step-by-Step Reasoning for Complex Tasks

Chain-of-thought prompting improves AI reasoning by making models show their work step by step. Learn how it boosts accuracy on math, logic, and decision tasks, and when it's worth the cost.

- Jan 29, 2026

- Collin Pace

- 7

- Permalink

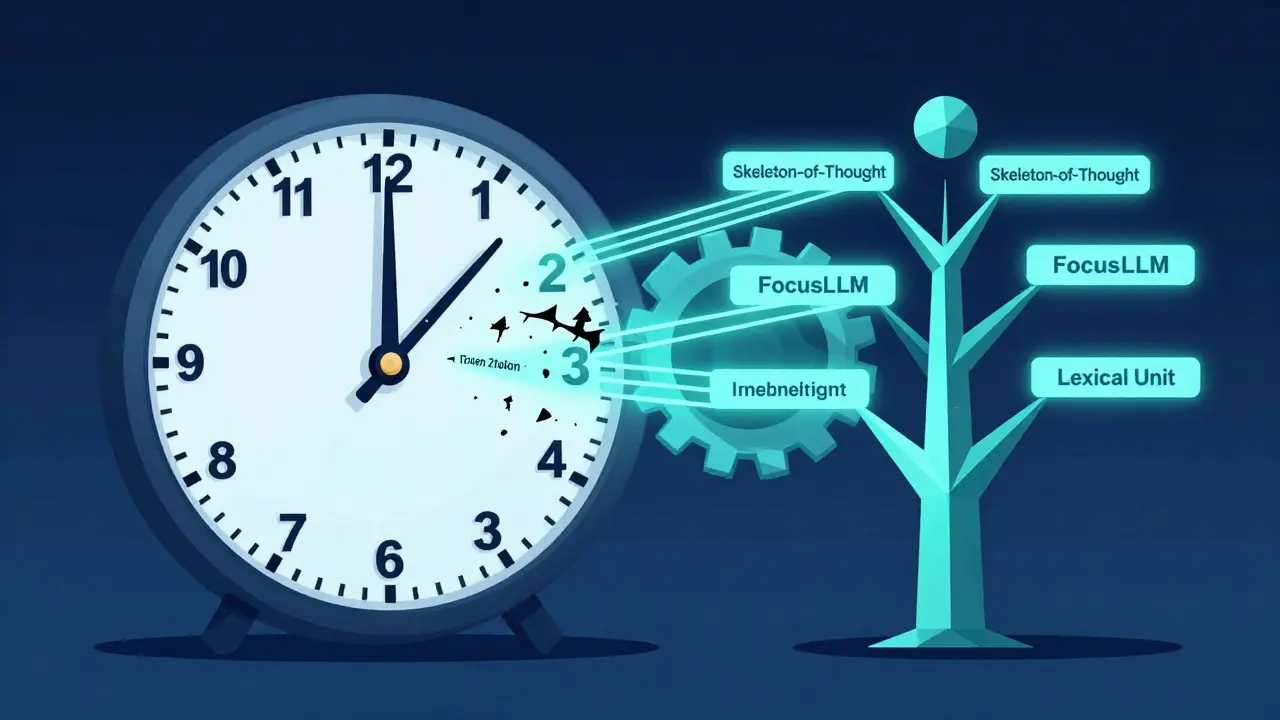

Parallel Transformer Decoding Strategies for Low-Latency LLM Responses

Parallel decoding strategies like Skeleton-of-Thought and FocusLLM cut LLM response times by up to 50% without losing quality. Learn how these techniques work and which one fits your use case.

- Jan 27, 2026

- Collin Pace

- 7

Low-Latency AI Coding Models: How Realtime Assistance Is Reshaping Developer Workflows

Low-latency AI coding models deliver real-time suggestions in IDEs with under 50 ms of delay, boosting productivity by 37% and restoring developer flow. Learn how Cursor, Tabnine, and others are reshaping coding in 2026.

- Jan 26, 2026

- Collin Pace

- 7

Understanding Tokenization Strategies for Large Language Models: BPE, WordPiece, and Unigram

Learn how BPE, WordPiece, and Unigram tokenization work in large language models, why they matter for performance and multilingual support, and how to choose the right one for your use case.

- Jan 24, 2026

- Collin Pace

- 5

Code Generation with Large Language Models: How Much Time You Really Save (and Where It Goes Wrong)

LLMs like GitHub Copilot can cut coding time by 55%, but only if you know how to catch their mistakes. Learn where AI helps, where it fails, and how to use it without introducing security flaws.

- Jan 22, 2026

- Collin Pace

- 7

Search-Augmented Large Language Models: RAG Patterns That Improve Accuracy

RAG (Retrieval-Augmented Generation) boosts LLM accuracy by pulling real-time data from your documents. Discover how it works, why it beats fine-tuning, and the advanced patterns that cut errors by up to 70%.

- Jan 20, 2026

- Collin Pace

- 7

Value Capture from Agentic Generative AI: End-to-End Workflow Automation

Agentic generative AI is transforming enterprise workflows by autonomously executing end-to-end processes, from customer service to supply chain, cutting costs by 20 to 60% and boosting productivity without human intervention.

- Jan 19, 2026

- Collin Pace

- 10

Reusable Prompt Snippets for Common App Features in Vibe Coding

Reusable prompt snippets save developers time by packaging tested AI instructions for common features like login forms, API calls, and data tables. Learn how to build, organize, and use them effectively with Vibe Coding tools.

- Jan 17, 2026

- Collin Pace

- 6

Accuracy Tradeoffs in Compressed Large Language Models: What to Expect

Compressed LLMs cut cost and improve speed but sacrifice accuracy in subtle, dangerous ways. Learn what really happens when you shrink a large language model, and how to avoid costly mistakes in production.

- Jan 14, 2026

- Collin Pace

- 9

How to Use Cursor for Multi-File AI Changes in Large Codebases

Learn how to use Cursor 2.0 for multi-file AI changes in large codebases, including best practices, limitations, step-by-step workflows, and how it compares to alternatives like GitHub Copilot and Aider.

- Jan 10, 2026

- Collin Pace

- 10

Long-Context Transformers for Large Language Models: How to Extend Windows Without Losing Accuracy

Long-context transformers let LLMs process huge documents without losing accuracy. Learn how attention optimizations like FlashAttention-2 and attention sinks counter drift, which models actually work, and where to use them without wasting money or compute.

- Jan 9, 2026

- Collin Pace

- 5

Evaluation Protocols for Compressed Large Language Models: What Works, What Doesn’t, and How to Get It Right

Compressed LLMs can look perfect on perplexity scores but fail in real use. Learn the three evaluation pillars of size, speed, and substance, and the benchmarks (LLM-KICK, EleutherAI) that actually catch silent failures before deployment.

- Dec 8, 2025

- Collin Pace

- 10
