Category: Artificial Intelligence - Page 2

Chain-of-Thought in Vibe Coding: Why Explanations Must Come Before Code

Discover how Chain-of-Thought prompting transforms vibe coding by requiring LLMs to explain logic before writing code, reducing errors and speeding up debugging.

- Apr 15, 2026

- Collin Pace

- 7

Best Vibe Coding Tools and Platforms for 2025: A Complete Guide

Explore the top vibe coding tools of 2025. Learn how platforms like Cursor, Lovable, and v0 are turning natural language prompts into functional software.

- Apr 13, 2026

- Collin Pace

- 10

Generative AI Market Structure: Foundation Models, Platforms, and Apps

Explore the 2026 structure of the Generative AI market, from massive foundation models and cloud platforms to specialized vertical apps and agentic AI.

- Apr 4, 2026

- Collin Pace

- 7

Human Feedback in the Loop: Scoring and Refining AI Code Iterations

Discover how Human Feedback in the Loop improves AI-generated code quality through structured scoring systems. Learn implementation strategies, tool comparisons, and real-world impact statistics for 2026.

- Mar 29, 2026

- Collin Pace

- 10

Safety Layers in Generative AI Architecture: Building Resilient Systems with Filters and Guardrails

Explore the critical architecture of Generative AI safety layers. Learn how content filters, runtime guardrails, and API gateways protect LLMs from injection attacks and data leaks.

- Mar 28, 2026

- Collin Pace

- 5

Domain Adaptation in NLP: Fine-Tuning Large Language Models for Specialized Fields

Learn how to adapt Large Language Models for specialized fields. This guide covers DAPT, SFT, and the DEAL framework to boost accuracy in NLP.

- Mar 27, 2026

- Collin Pace

- 6

Real Estate Marketing with Generative AI: Listings, Tours, and Neighborhood Guides

Generative AI is transforming real estate marketing by automating listings, creating immersive virtual tours, and generating data-rich neighborhood guides. Agents now save hours, boost buyer engagement, and close deals faster using AI tools that write, visualize, and predict with precision.

- Mar 24, 2026

- Collin Pace

- 8

How Context Length Affects Output Quality in Large Language Model Generation

Context length in large language models doesn't guarantee better output. Beyond a certain point, longer inputs hurt accuracy due to attention dilution and the 'Lost in the Middle' effect. Learn how to optimize context for real-world performance.

- Mar 21, 2026

- Collin Pace

- 7

How Generative AI Is Transforming QBR Decks and Renewal Strategies in Customer Success

Generative AI is transforming QBRs from data-heavy presentations into strategic renewal tools. By automating data collection and personalizing narratives, customer success teams are doubling renewal rates while saving hours per review.

- Mar 20, 2026

- Collin Pace

- 8

Sales Enablement with Generative AI: Proposal Drafting, CRM Notes, and Personalization

Generative AI is transforming sales enablement by automating proposal drafting, generating accurate CRM notes, and delivering hyper-personalized content, cutting admin time by 75% and boosting conversion rates by up to 30%.

- Mar 19, 2026

- Collin Pace

- 7

Feedforward Networks in Transformers: Why Two Layers Boost Large Language Models

Feedforward networks in transformers are the hidden force behind large language models. Despite their simplicity, the two-layer design powers GPT-3, Llama, and Gemini by balancing depth, efficiency, and stability. Here’s why no one has replaced it.

- Mar 18, 2026

- Collin Pace

- 5

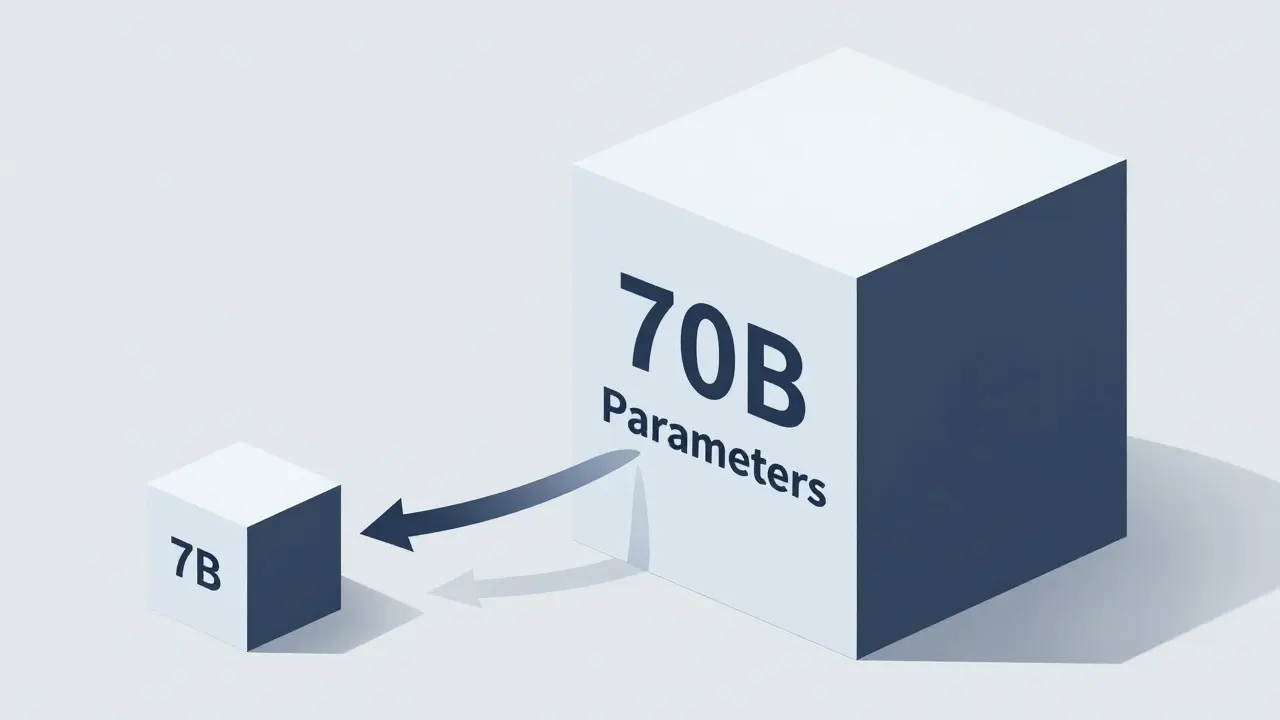

Scaling Behavior Across Tasks: How Bigger LLMs Actually Improve Performance

Larger LLMs improve performance predictably, but not uniformly. Scaling boosts efficiency, reasoning, and few-shot learning, but gains fade beyond certain sizes. Task complexity, data quality, and inference strategies matter as much as model size.

- Mar 17, 2026

- Collin Pace

- 7
