Generative Innovation Hub

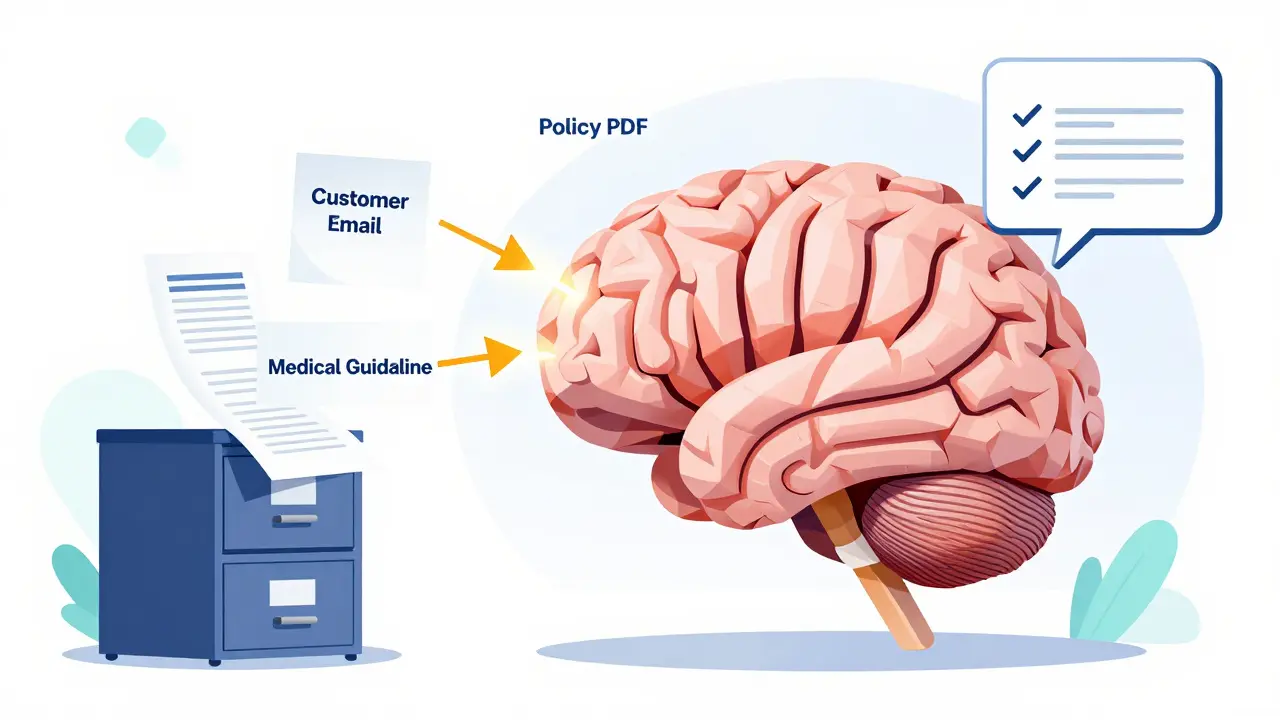

Search-Augmented Large Language Models: RAG Patterns That Improve Accuracy

RAG (Retrieval-Augmented Generation) boosts LLM accuracy by pulling real-time data from your documents. Discover how it works, why it beats fine-tuning, and the advanced patterns that cut errors by up to 70%.

- Jan 20, 2026

- Collin Pace

- 0

Value Capture from Agentic Generative AI: End-to-End Workflow Automation

Agentic generative AI is transforming enterprise workflows by autonomously executing end-to-end processes, from customer service to supply chain, cutting costs by 20-60% and boosting productivity without human intervention.

- Jan 19, 2026

- Collin Pace

- 1

Reusable Prompt Snippets for Common App Features in Vibe Coding

Reusable prompt snippets help developers save time by reusing tested AI instructions for common features like login forms, API calls, and data tables. Learn how to build, organize, and use them effectively with Vibe Coding tools.

- Jan 17, 2026

- Collin Pace

- 2

Supply Chain Security for LLM Deployments: Securing Containers, Weights, and Dependencies

LLM supply chain security is critical but often ignored. Learn how to secure containers, model weights, and dependencies to prevent breaches before they happen.

- Jan 16, 2026

- Collin Pace

- 2

Accuracy Tradeoffs in Compressed Large Language Models: What to Expect

Compressed LLMs cut costs and run faster but sacrifice accuracy in subtle, dangerous ways. Learn what really happens when you shrink a large language model, and how to avoid costly mistakes in production.

- Jan 14, 2026

- Collin Pace

- 5

How to Use Cursor for Multi-File AI Changes in Large Codebases

Learn how to use Cursor 2.0 for multi-file AI changes in large codebases, including best practices, limitations, step-by-step workflows, and how it compares to alternatives like GitHub Copilot and Aider.

- Jan 10, 2026

- Collin Pace

- 10

Long-Context Transformers for Large Language Models: How to Extend Windows Without Losing Accuracy

Long-context transformers let LLMs process huge documents without losing accuracy. Learn how attention optimizations like FlashAttention-2 and attention sinks beat drift, which models actually work, and where to use them without wasting money or compute.

- Jan 9, 2026

- Collin Pace

- 5

Input Validation for LLM Applications: How to Sanitize Natural Language Inputs to Prevent Prompt Injection Attacks

Learn how to prevent prompt injection attacks in LLM applications by implementing layered input validation and sanitization techniques. Essential security practices for chatbots, agents, and AI tools handling user input.

- Jan 2, 2026

- Collin Pace

- 9

Model Compression Economics: How Quantization and Distillation Cut LLM Costs by 90%

Learn how quantization and knowledge distillation slash LLM inference costs by up to 95%, making powerful AI affordable for small teams and edge devices. Real-world results, tools, and best practices.

- Dec 29, 2025

- Collin Pace

- 6

Energy Efficiency in Generative AI Training: Sparsity, Pruning, and Low-Rank Methods

Sparsity, pruning, and low-rank methods slash generative AI training energy by 40-80% without sacrificing accuracy. Learn how these techniques work, their real-world results, and why they're becoming mandatory for sustainable AI.

- Dec 17, 2025

- Collin Pace

- 10

Evaluation Protocols for Compressed Large Language Models: What Works, What Doesn’t, and How to Get It Right

Compressed LLMs can look perfect on perplexity scores but fail in real use. Learn the three evaluation pillars (size, speed, substance) and the benchmarks (LLM-KICK, EleutherAI) that actually catch silent failures before deployment.

- Dec 8, 2025

- Collin Pace

- 10

How to Reduce Memory Footprint for Hosting Multiple Large Language Models

Learn how to reduce memory footprint when hosting multiple large language models using quantization, model parallelism, and hybrid techniques. Cut costs by 65% and run 3-5 models on a single GPU.

- Nov 29, 2025

- Collin Pace

- 7
