Author: Collin Pace - Page 6

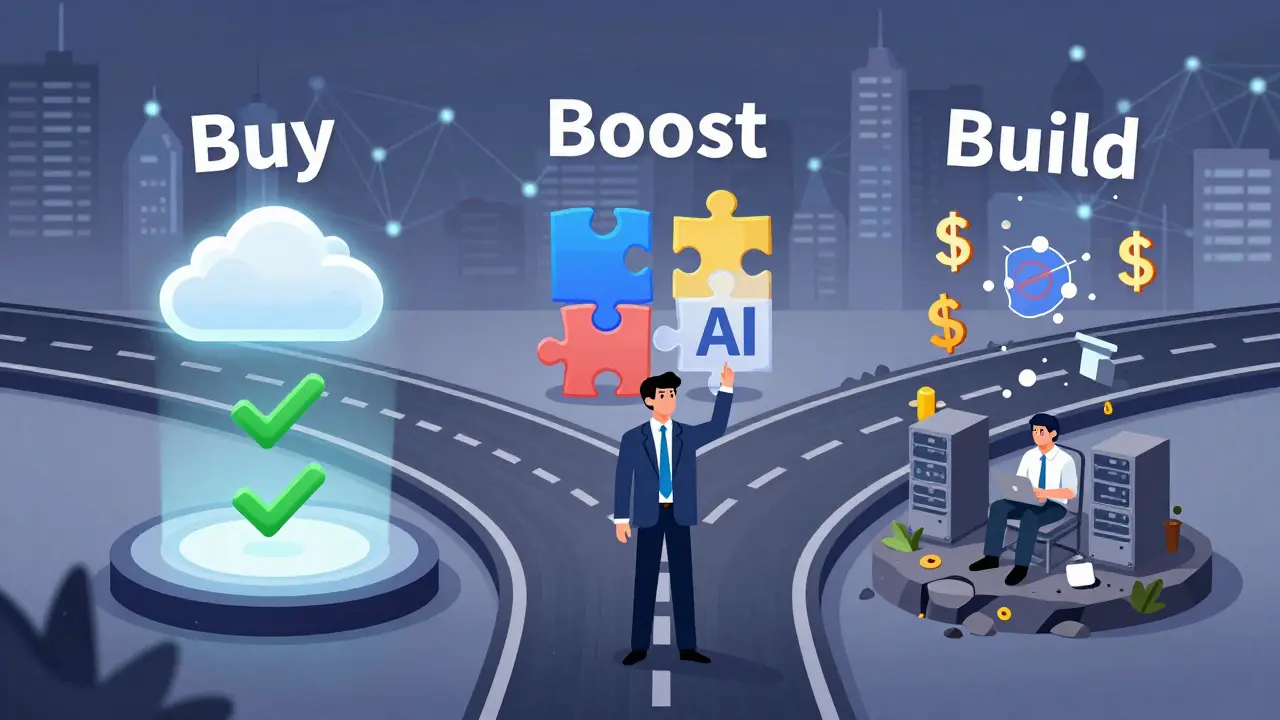

Build vs Buy for Generative AI Platforms: Decision Framework for CIOs

CIOs must choose between building and buying generative AI platforms based on cost, speed, risk, and use case. Learn the three strategies (buy, boost, build) and which one fits your organization.

- Nov 14, 2025

- Collin Pace

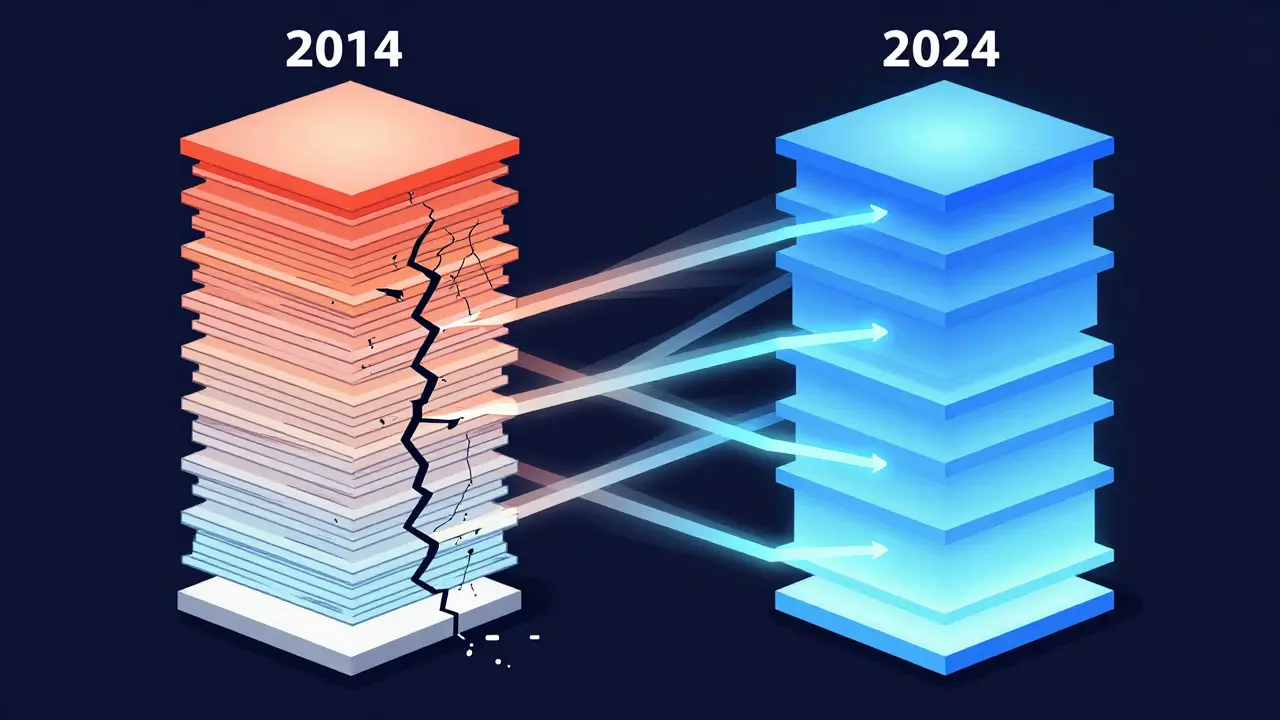

Transformer Pre-Norm vs Post-Norm Architectures: Which One Powers Modern LLMs?

Pre-Norm and Post-Norm are two ways to place layer normalization inside a Transformer block. Pre-Norm powers most modern LLMs because it trains stably even at 100+ layers. Post-Norm works for smaller models but becomes hard to train stably at large depth without extra measures such as learning-rate warmup.
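As a minimal sketch of where the normalization sits in each variant, the pure-Python snippet below wraps a generic sublayer (standing in for attention or the feed-forward network) in a residual connection. It assumes a LayerNorm without learned gain and bias, and `toy_sublayer` is a hypothetical placeholder, not a real Transformer component.

```python
import math
from typing import Callable, List

Vector = List[float]


def layer_norm(x: Vector, eps: float = 1e-5) -> Vector:
    # Normalize to zero mean and unit variance (learned gain/bias omitted for brevity).
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def add(a: Vector, b: Vector) -> Vector:
    # Element-wise residual addition.
    return [p + q for p, q in zip(a, b)]


def post_norm_block(x: Vector, sublayer: Callable[[Vector], Vector]) -> Vector:
    # Post-Norm (original Transformer layout): normalize AFTER the residual add,
    # so every block's output, including the residual stream, is re-normalized.
    return layer_norm(add(x, sublayer(x)))


def pre_norm_block(x: Vector, sublayer: Callable[[Vector], Vector]) -> Vector:
    # Pre-Norm: normalize only the sublayer's INPUT; the residual path stays a
    # plain identity, which gives gradients a direct route through deep stacks.
    return add(x, sublayer(layer_norm(x)))


if __name__ == "__main__":
    x = [1.0, 2.0, 3.0]
    # Hypothetical stand-in for an attention or feed-forward sublayer.
    toy_sublayer = lambda v: [0.5 * t for t in v]
    print(post_norm_block(x, toy_sublayer))
    print(pre_norm_block(x, toy_sublayer))
```

The only difference is the order of `layer_norm` and `add`. Because the Pre-Norm residual path is an untouched identity, stacking many blocks does not repeatedly rescale the signal, which is the usual explanation for its stability at depth.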

- Oct 20, 2025

- Collin Pace

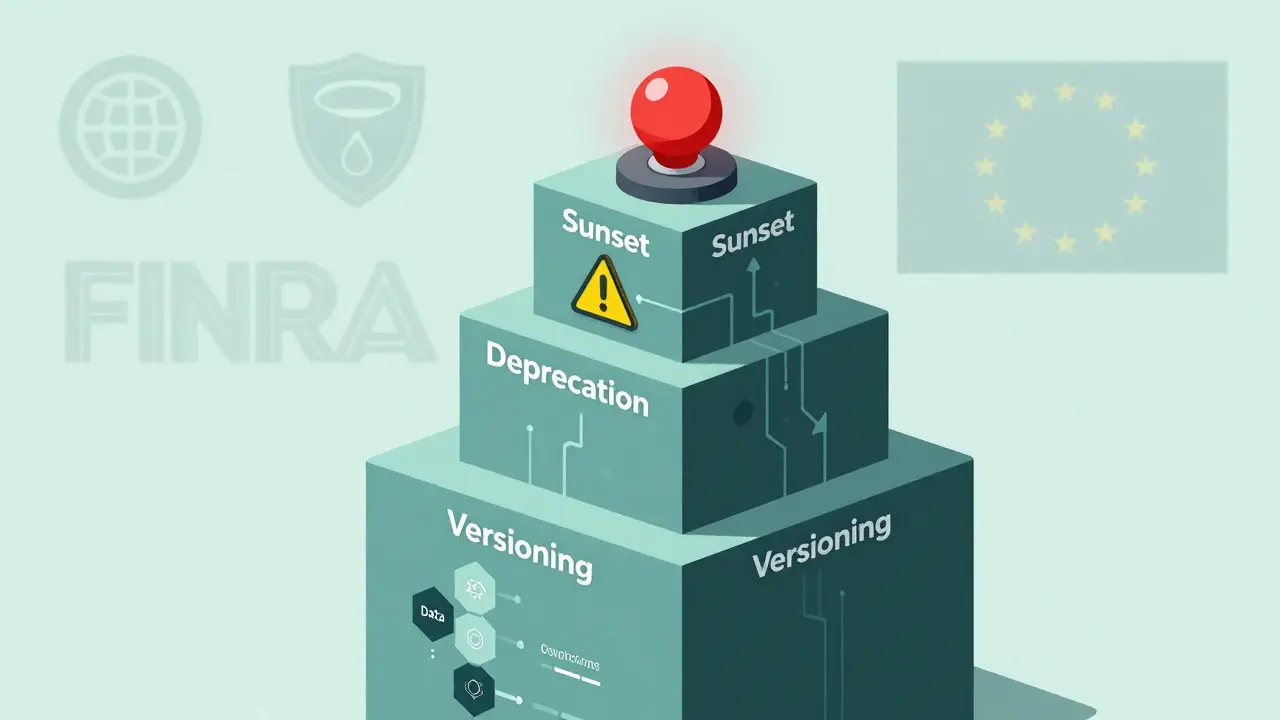

Model Lifecycle Management: Versioning, Deprecation, and Sunset Policies Explained

Learn how versioning, deprecation, and sunset policies keep AI models reliable, compliant, and safe. Real-world examples, industry standards, and actionable steps for managing AI lifecycles.

- Sep 24, 2025

- Collin Pace

Top Enterprise Use Cases for Large Language Models in 2025

In 2025, enterprise LLMs are transforming customer service, compliance, fraud detection, and document processing. Discover the top real-world use cases driving ROI, the critical factors for success, and why security and integration matter more than model size.

- Sep 19, 2025

- Collin Pace

Contextual Representations in Large Language Models: How LLMs Understand Meaning

Contextual representations let LLMs understand words based on their surroundings, not fixed meanings. From attention mechanisms to context windows, here’s how models like GPT-4 and Claude 3 make sense of language, and where they still fall short.
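To make the idea of "meaning from surroundings" concrete, here is a minimal single-head scaled dot-product self-attention sketch in pure Python. It assumes identity query/key/value projections (real models learn these) and uses hypothetical two-dimensional toy embeddings for "river", "bank", and "money".

```python
import math
from typing import List

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[Vector]) -> List[Vector]:
    # Single-head scaled dot-product attention with Q = K = V = tokens
    # (an assumption for illustration; real models apply learned projections).
    d = len(tokens[0])
    outputs = []
    for q in tokens:
        # Score this token's query against every key, scaled by sqrt(d).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        # Output is the attention-weighted mix of all value vectors.
        outputs.append([sum(w * v[c] for w, v in zip(weights, tokens)) for c in range(d)])
    return outputs


if __name__ == "__main__":
    # Hypothetical toy embeddings: axis 0 ~ "nature", axis 1 ~ "finance".
    river, bank, money = [2.0, 0.0], [1.0, 1.0], [0.0, 2.0]
    print(self_attention([river, bank])[1])  # "bank" next to "river"
    print(self_attention([money, bank])[1])  # "bank" next to "money"
```

The input vector for "bank" is identical in both sentences, yet its output representation leans toward whichever neighbor it attends to. That shift is the basic mechanism behind contextual representations, scaled up across many heads, layers, and a much longer context window.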

- Sep 16, 2025

- Collin Pace

How to Use Large Language Models for Marketing, Ads, and SEO

Learn how to use large language models for marketing, ads, and SEO without falling into common traps like hallucinations or lost brand voice. Real strategies, real results.

- Sep 5, 2025

- Collin Pace

Continuous Documentation: Keep Your READMEs and Diagrams in Sync with Your Code

Keep your READMEs and diagrams accurate by syncing them with your codebase using automation tools like GitHub Actions, ReadMe.io, and DeepDocs. Stop manual updates. Start living documentation.

- Aug 31, 2025

- Collin Pace

Security KPIs for Measuring Risk in Large Language Model Programs

Learn the essential security KPIs for measuring risk in large language model programs. Track detection, response, and resilience metrics to prevent prompt injection, data leaks, and model manipulation in production AI systems.

- Aug 23, 2025

- Collin Pace

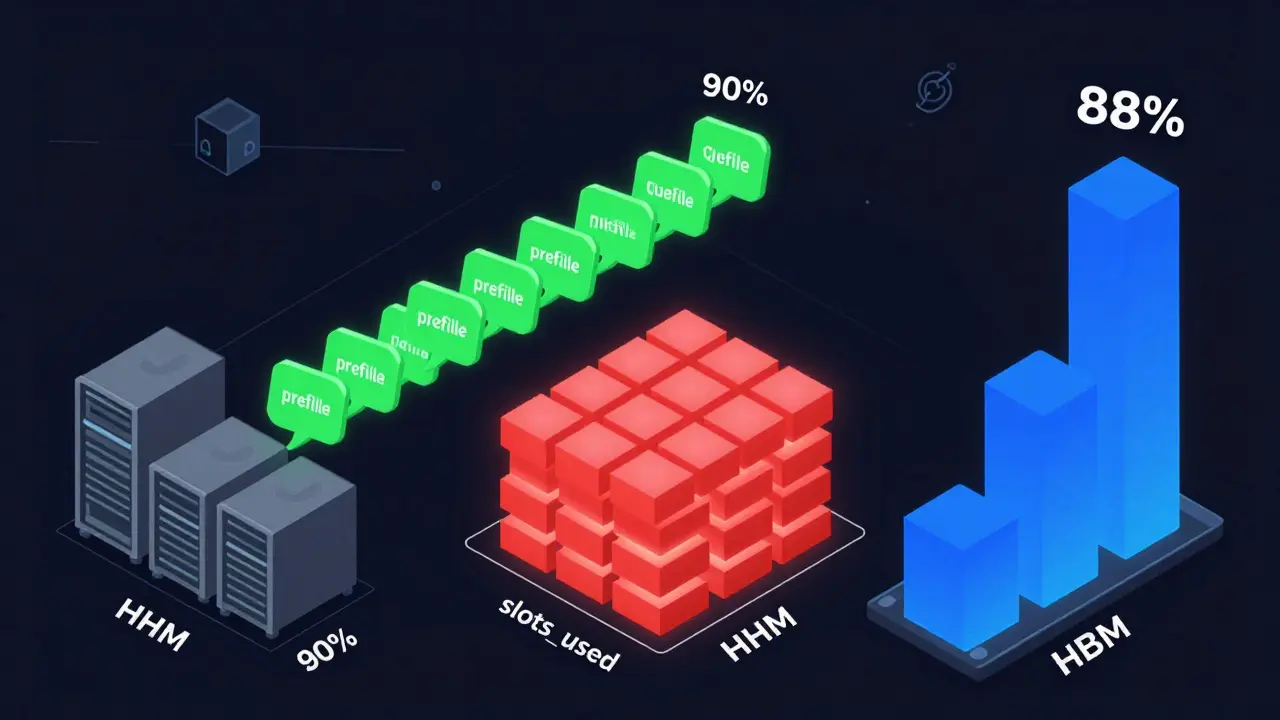

Autoscaling Large Language Model Services: How to Balance Cost, Latency, and Performance

Learn how to autoscale LLM services effectively using the right signals (prefill queue size, slots_used, and HBM usage) to cut costs by up to 60% without sacrificing latency. Avoid common pitfalls and choose the right strategy for your workload.
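One way to combine these signals is sketched below, borrowing the proportional rule used by the Kubernetes Horizontal Pod Autoscaler: `desired = ceil(current * observed / target)`, skipped when the ratio is within a tolerance, with the largest proposal across metrics winning. The target values, the `ReplicaSignals` type, and the replica bounds are hypothetical placeholders, not recommended settings.

```python
import math
from dataclasses import dataclass


@dataclass
class ReplicaSignals:
    prefill_queue_size: float  # average pending prefill requests per replica
    slots_used: float          # fraction of batch slots in use (0..1)
    hbm_usage: float           # fraction of accelerator HBM in use (0..1)


# Hypothetical per-replica targets; real values depend on model and hardware.
DEFAULT_TARGETS = ReplicaSignals(prefill_queue_size=4.0, slots_used=0.8, hbm_usage=0.85)


def desired_replicas(
    current: int,
    observed: ReplicaSignals,
    targets: ReplicaSignals = DEFAULT_TARGETS,
    min_replicas: int = 1,
    max_replicas: int = 32,
    tolerance: float = 0.1,
) -> int:
    proposals = []
    for name in ("prefill_queue_size", "slots_used", "hbm_usage"):
        ratio = getattr(observed, name) / getattr(targets, name)
        if abs(ratio - 1.0) <= tolerance:
            # Close enough to target: propose no change for this signal.
            proposals.append(current)
        else:
            # HPA-style proportional scaling toward the target utilization.
            proposals.append(math.ceil(current * ratio))
    # The most demanding signal wins, then clamp to the allowed range.
    return max(min_replicas, min(max_replicas, max(proposals)))


if __name__ == "__main__":
    # Prefill queue is twice its target while slots and HBM sit at target.
    print(desired_replicas(4, ReplicaSignals(8.0, 0.8, 0.85)))
```

Taking the maximum across signals errs toward protecting latency; a production autoscaler would typically add a cooldown or stabilization window so replicas do not flap when signals oscillate.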

- Aug 6, 2025

- Collin Pace

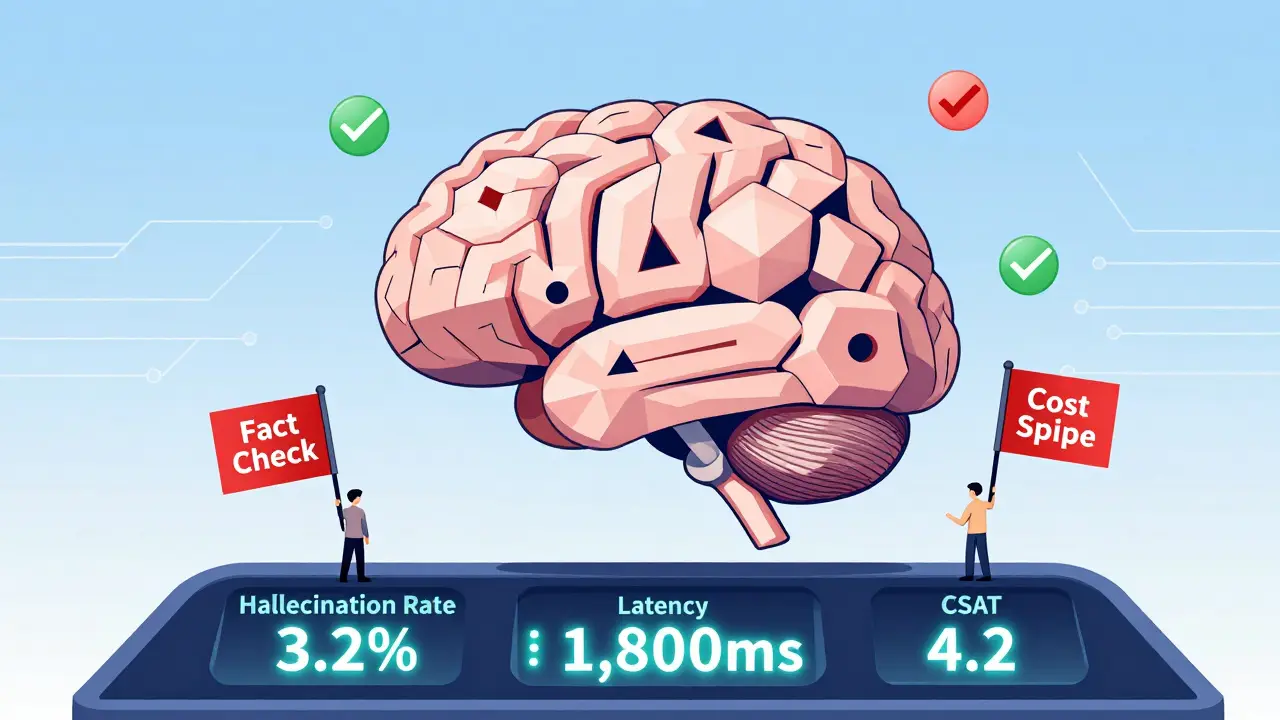

KPIs and Dashboards for Monitoring Large Language Model Health

Learn the essential KPIs and dashboard practices for monitoring large language model health in production. Track hallucinations, latency, cost, and user impact to avoid costly failures and build trustworthy AI systems.

- Jul 29, 2025

- Collin Pace

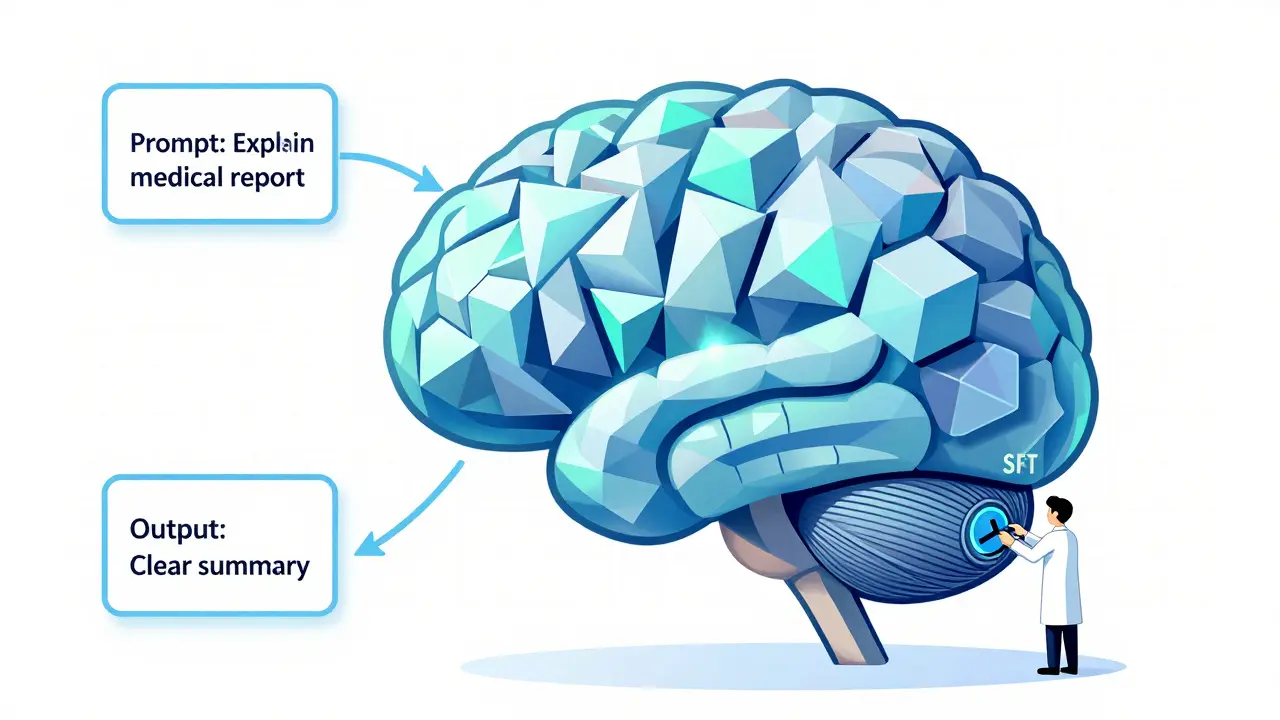

Supervised Fine-Tuning for Large Language Models: A Practical Guide for Real-World Use

Supervised fine-tuning turns generic LLMs into reliable tools using real examples. Learn how to do it right with minimal cost, avoid common mistakes, and get real results without needing an AI PhD.

- Jul 18, 2025

- Collin Pace